Staci Weiss

Towards early prediction of neurodevelopmental disorders: Computational model for Face Touch and Self-adaptors in Infants

Jan 15, 2023

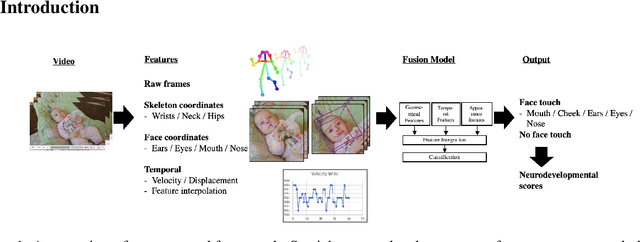

Abstract: Infants' neurological development is heavily influenced by their motor skills. Evaluating a baby's movements is key to understanding possible risks of developmental disorders in their growth. Previous research in psychology has shown that measuring specific movements or gestures, such as face touches, is essential to analyse how babies understand themselves and their context. This research proposes the first automatic approach that detects face touches from video recordings by tracking infants' movements and gestures. The study uses a multimodal feature fusion approach that mixes spatial and temporal features and exploits skeleton tracking information to generate more than 170 aggregated features of the hand, face and body. On these features, we build data-driven machine learning models for the detection and classification of face touch in infants, and we evaluate them with cross-dataset testing. The models achieved 87.0% accuracy in detecting face touches and 71.4% macro-average accuracy in detecting specific face touch locations, with significant improvements over Zero Rule and uniform random chance baselines. Moreover, we show that when we run our model to extract face touch frequencies from a larger dataset, we can predict the development of fine motor skills during the first 5 months after birth.
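As a rough illustration of the evaluation terms used in the abstract, the sketch below computes a Zero Rule baseline, a uniform random chance baseline, and a macro-average accuracy on toy labels. It is not the authors' code: the function names and the toy labels are hypothetical, and "macro-average accuracy" is assumed here to mean the unweighted mean of per-class accuracy (per-class recall), which the abstract does not spell out.

```python
from collections import Counter


def zero_rule_accuracy(train_labels, test_labels):
    """Accuracy of always predicting the most frequent training label."""
    majority = Counter(train_labels).most_common(1)[0][0]
    return sum(1 for y in test_labels if y == majority) / len(test_labels)


def uniform_chance_accuracy(labels):
    """Expected accuracy of guessing uniformly among the observed classes."""
    return 1.0 / len(set(labels))


def macro_average_accuracy(y_true, y_pred):
    """Unweighted mean of per-class accuracy (assumed definition)."""
    per_class = []
    for c in sorted(set(y_true)):
        idx = [i for i, y in enumerate(y_true) if y == c]
        correct = sum(1 for i in idx if y_pred[i] == c)
        per_class.append(correct / len(idx))
    return sum(per_class) / len(per_class)


# Toy labels, invented for illustration only (not data from the study).
y_true = ["touch", "touch", "none", "none", "none", "none"]
y_pred = ["touch", "none", "none", "none", "none", "none"]

print(zero_rule_accuracy(y_true, y_true))
print(uniform_chance_accuracy(y_true))
print(macro_average_accuracy(y_true, y_pred))
```

Under this assumed definition, the macro average weights rare and frequent classes equally, which is why it is a natural companion to a Zero Rule baseline when the classes are imbalanced.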
