Sophie Chabridon

IP Paris, INF, ACMES-SAMOVAR

Combining Federated and Active Learning for Communication-efficient Distributed Failure Prediction in Aeronautics

Jan 21, 2020

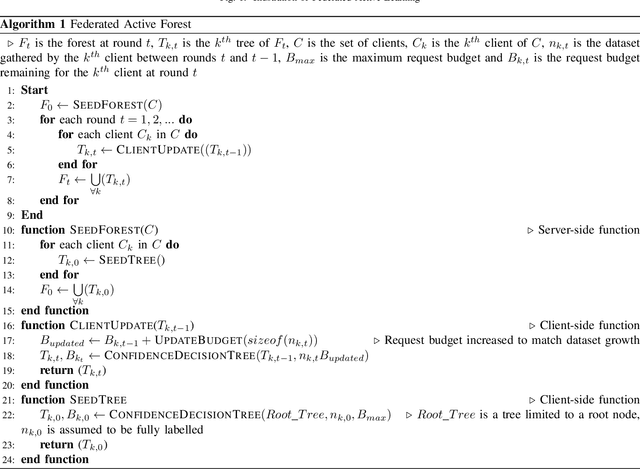

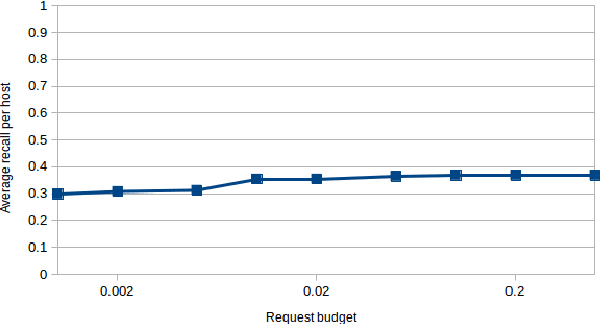

Abstract: Machine Learning has proven useful in recent years for predicting failures in industrial systems. However, the high computational resources needed to run learning algorithms are an obstacle to its widespread application. The sub-field of Distributed Learning addresses this problem by enabling the use of remote resources, but at the expense of communication costs that are not always acceptable. In this paper, we propose a distributed learning approach that optimizes the use of computational and communication resources to achieve excellent model performance in a centralized architecture. To this end, we present a new centralized distributed learning algorithm that relies on the Active Learning and Federated Learning paradigms to provide a communication-efficient method with guarantees of model precision on both the clients and the central server. We evaluate this method on a public benchmark and show that its precision is very close to the state-of-the-art performance of non-distributed learning, despite the additional constraints.
