Solvi Arnold

Shinshu University

Flow-Lenia.png: Evolving Multi-Scale Complexity by Means of Compression

Aug 08, 2024

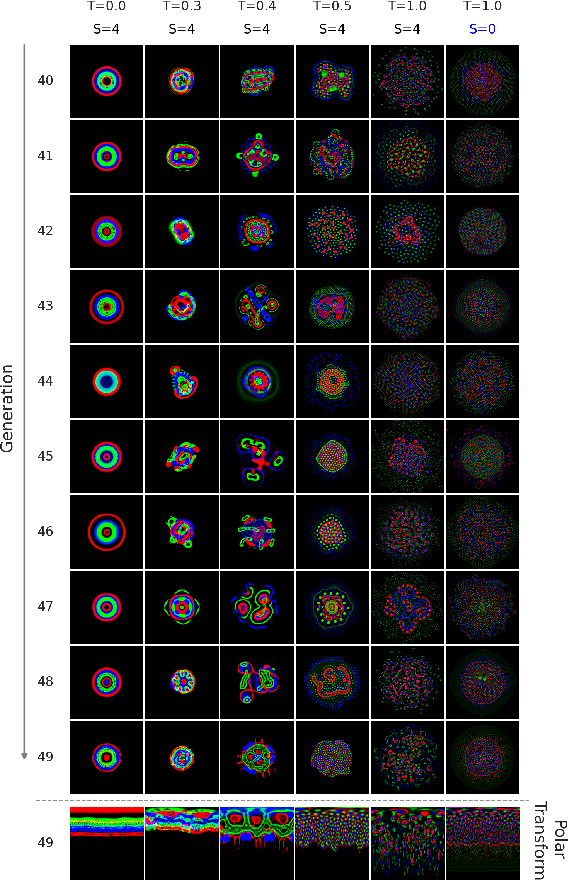

Abstract: We propose a fitness measure quantifying multi-scale complexity for cellular automaton states, using compressibility as a proxy for complexity. The use of compressibility is grounded in the concept of Kolmogorov complexity, which defines the complexity of an object by the size of its smallest representation. With this fitness function, we explore the complexity range accessible to the well-known Flow Lenia cellular automaton, using image compression algorithms to assess state compressibility. Using a Genetic Algorithm to evolve Flow Lenia patterns, we conduct experiments with two primary objectives: 1) generating patterns of specific complexity levels, and 2) exploring the extrema of Flow Lenia's complexity domain. Evolved patterns reflect the complexity targets, with higher complexity targets yielding more intricate patterns, consistent with human perceptions of complexity. This demonstrates that our fitness function can effectively evolve patterns that match specific complexity objectives within the bounds of the complexity range accessible to Flow Lenia under a given hyperparameter configuration.
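The idea of using compressibility as a complexity proxy can be illustrated with a small sketch. This is not the paper's fitness function: it assumes a state is a 2D grid of 8-bit values, uses `zlib` (DEFLATE) as a stand-in for an image compressor, and the helper names `compressed_fraction`, `downsample`, and `multiscale_complexity`, as well as the choice of scales, are hypothetical. How the paper combines scales into one fitness value is not stated in the abstract.

```python
import random
import zlib


def compressed_fraction(grid):
    """Compressed size divided by raw size for a 2D grid of ints in 0..255."""
    raw = bytes(v for row in grid for v in row)
    return len(zlib.compress(raw, 9)) / len(raw)


def downsample(grid, factor):
    """Average non-overlapping factor x factor blocks (assumed multi-scale step)."""
    height, width = len(grid), len(grid[0])
    area = factor * factor
    return [
        [
            sum(grid[y + dy][x + dx] for dy in range(factor) for dx in range(factor)) // area
            for x in range(0, width - factor + 1, factor)
        ]
        for y in range(0, height - factor + 1, factor)
    ]


def multiscale_complexity(grid, factors=(1, 2, 4)):
    """One compressed fraction per scale; higher means less compressible."""
    return [compressed_fraction(downsample(grid, f)) for f in factors]


rng = random.Random(0)
flat = [[0] * 64 for _ in range(64)]  # uniform state: highly compressible
noise = [[rng.randrange(256) for _ in range(64)] for _ in range(64)]  # random state

print("flat :", multiscale_complexity(flat))
print("noise:", multiscale_complexity(noise))
```

A uniform grid compresses to a small fraction of its raw size at every scale, while random noise barely compresses at all. A fitness function in this spirit could, for instance, score a pattern by how close these values lie to a chosen target.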

Breaching the Bottleneck: Evolutionary Transition from Reward-Driven Learning to Reward-Agnostic Domain-Adapted Learning in Neuromodulated Neural Nets

Apr 19, 2024

Abstract: Advanced biological intelligence learns efficiently from an information-rich stream of stimulus information, even when feedback on behaviour quality is sparse or absent. Such learning exploits implicit assumptions about task domains. We refer to such learning as Domain-Adapted Learning (DAL). In contrast, AI learning algorithms rely on explicit externally provided measures of behaviour quality to acquire fit behaviour. This imposes an information bottleneck that precludes learning from diverse non-reward stimulus information, limiting learning efficiency. We consider the question of how biological evolution circumvents this bottleneck to produce DAL. We propose that species first evolve the ability to learn from reward signals, providing inefficient (bottlenecked) but broad adaptivity. From there, integration of non-reward information into the learning process can proceed via gradual accumulation of biases induced by such information on specific task domains. This scenario provides a biologically plausible pathway towards bottleneck-free, domain-adapted learning. Focusing on the second phase of this scenario, we set up a population of NNs with reward-driven learning modelled as Reinforcement Learning (A2C), and allow evolution to improve learning efficiency by integrating non-reward information into the learning process using a neuromodulatory update mechanism. On a navigation task in continuous 2D space, evolved DAL agents show a 300-fold increase in learning speed compared to pure RL agents. Evolution is found to eliminate reliance on reward information altogether, allowing DAL agents to learn from non-reward information exclusively, using local neuromodulation-based connection weight updates only.

Recognising Affordances in Predicted Futures to Plan with Consideration of Non-canonical Affordance Effects

Jun 22, 2022

Abstract: We propose a novel system for action sequence planning based on a combination of affordance recognition and a neural forward model predicting the effects of affordance execution. By performing affordance recognition on predicted futures, we avoid reliance on explicit affordance effect definitions for multi-step planning. Because the system learns affordance effects from experience data, the system can foresee not just the canonical effects of an affordance, but also situation-specific side-effects. This allows the system to avoid planning failures due to such non-canonical effects, and makes it possible to exploit non-canonical effects for realising a given goal. We evaluate the system in simulation, on a set of test tasks that require consideration of canonical and non-canonical affordance effects.
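The planning loop described in this abstract (recognise affordances in a state, predict the outcome of executing one, then recognise affordances again in the predicted state) can be sketched on a toy problem. Everything below is an illustrative assumption: the cup-and-tray world, the hand-written `recognise` and `predict` functions standing in for the learned recogniser and neural forward model, and the breadth-first search are not taken from the paper.

```python
from collections import deque

# Toy state: (cup_location, tray_location).
# cup_location is "table" or "tray"; tray_location is "table" or "shelf".
START = ("table", "table")


def recognise(state):
    """Stand-in for affordance recognition: list affordances available in a state."""
    cup, tray = state
    found = []
    if cup == "table" and tray == "table":
        found.append("put_cup_on_tray")
    if tray == "table":
        found.append("move_tray_to_shelf")
    return found


def predict(state, affordance):
    """Stand-in for the learned forward model, including a side-effect:
    moving the tray also carries along whatever sits on it."""
    cup, tray = state
    if affordance == "put_cup_on_tray":
        return ("tray", tray)
    if affordance == "move_tray_to_shelf":
        return (cup, "shelf")  # a cup on the tray travels with it
    raise ValueError(affordance)


def plan(start, is_goal, max_depth=4):
    """Breadth-first search over predicted futures."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, steps = queue.popleft()
        if is_goal(state):
            return steps
        if len(steps) >= max_depth:
            continue
        for affordance in recognise(state):  # recognition runs on predicted states
            nxt = predict(state, affordance)
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, steps + [affordance]))
    return None


def cup_on_shelf(state):
    return state == ("tray", "shelf")


print(plan(START, cup_on_shelf))
```

No affordance in this toy world moves the cup to the shelf directly. The planner reaches the goal only by exploiting the side-effect of moving the tray, which is the kind of non-canonical effect the abstract refers to.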

Cloth Manipulation Planning on Basis of Mesh Representations with Incomplete Domain Knowledge and Voxel-to-Mesh Estimation

Mar 15, 2021

Abstract: We consider the problem of open-goal planning for robotic cloth manipulation. The core of our system is a neural network trained as a forward model of cloth behaviour under manipulation, with planning performed through backpropagation. We introduce a neural network-based routine for estimating mesh representations from voxel input, and perform planning in mesh format internally. We address the problem of planning with incomplete domain knowledge by means of an explicit epistemic uncertainty signal. This signal is calculated from prediction divergence between two instances of the forward model network and used to avoid epistemic uncertainty during planning. Finally, we introduce logic for handling restriction of grasp points to a discrete set of candidates, in order to accommodate graspability constraints imposed by robotic hardware. We evaluate the system's mesh estimation, prediction, and planning ability on simulated cloth for sequences of one to three manipulations. Comparative experiments confirm that planning on the basis of estimated meshes improves accuracy compared to voxel-based planning, and that epistemic uncertainty avoidance improves performance under conditions of incomplete domain knowledge. We additionally present qualitative results on robot hardware.
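The uncertainty signal described above, divergence between the predictions of two forward-model instances, can be sketched as a penalty term in a planning cost. The sketch below is a toy under stated assumptions: `model_a` and `model_b` are hand-written functions that agree only inside a pretend training region, the mean-squared divergence and the `weight` parameter are hypothetical choices, and a search over a few candidate actions replaces the backpropagation-based planning used in the paper.

```python
def model_a(action):
    """Toy forward model instance A: maps a scalar action to a 2D outcome."""
    return [action, 2.0 * action]


def model_b(action):
    """Toy instance B: agrees with A for |action| <= 1 (pretend training region),
    and drifts away from A outside it."""
    drift = max(0.0, abs(action) - 1.0)
    return [action + drift, 2.0 * action]


def divergence(pred_a, pred_b):
    """Mean squared difference between two predictions (epistemic uncertainty proxy)."""
    return sum((a - b) ** 2 for a, b in zip(pred_a, pred_b)) / len(pred_a)


def plan_cost(action, goal, weight):
    """Goal loss on the averaged prediction plus a weighted uncertainty penalty."""
    pred_a, pred_b = model_a(action), model_b(action)
    mean_pred = [(a + b) / 2.0 for a, b in zip(pred_a, pred_b)]
    goal_loss = sum((p - g) ** 2 for p, g in zip(mean_pred, goal)) / len(goal)
    return goal_loss + weight * divergence(pred_a, pred_b)


def best_action(candidates, goal, weight):
    """Pick the candidate action with the lowest planning cost."""
    return min(candidates, key=lambda action: plan_cost(action, goal, weight))


goal = [1.5, 3.0]
candidates = [0.5, 1.0, 1.5]
print(best_action(candidates, goal, weight=0.0))   # ignores uncertainty
print(best_action(candidates, goal, weight=10.0))  # avoids the uncertain region
```

With the penalty switched off, the planner picks the action that looks best on paper even though the two models disagree there. With a sufficiently large penalty, it settles for the best action inside the region where the models agree.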
