Sokhna Diarra Mbacke

A Note on the Convergence of Denoising Diffusion Probabilistic Models

Dec 10, 2023

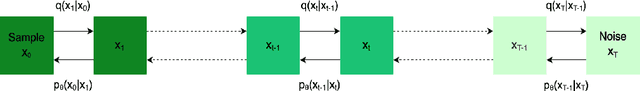

Abstract: Diffusion models are one of the most important families of deep generative models. In this note, we derive a quantitative upper bound on the Wasserstein distance between the data-generating distribution and the distribution learned by a diffusion model. Unlike previous works in this field, our result does not make assumptions on the learned score function. Moreover, our bound holds for arbitrary data-generating distributions on bounded instance spaces, even those without a density w.r.t. the Lebesgue measure, and the upper bound does not suffer from exponential dependencies. Our main result builds upon the recent work of Mbacke et al. (2023) and our proofs are elementary.

Statistical Guarantees for Variational Autoencoders using PAC-Bayesian Theory

Oct 07, 2023

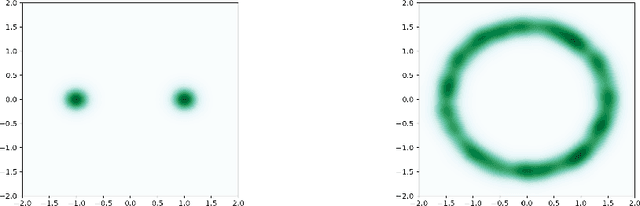

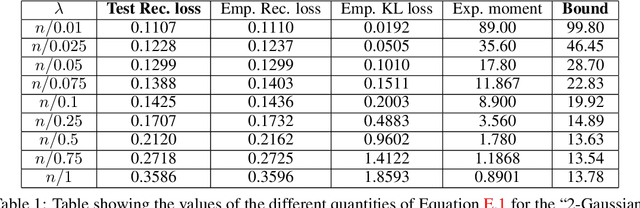

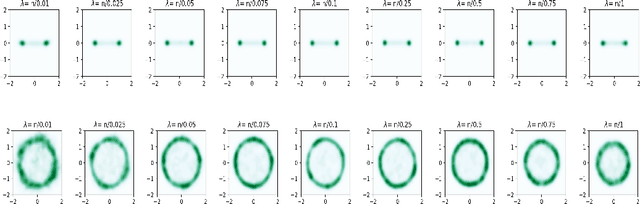

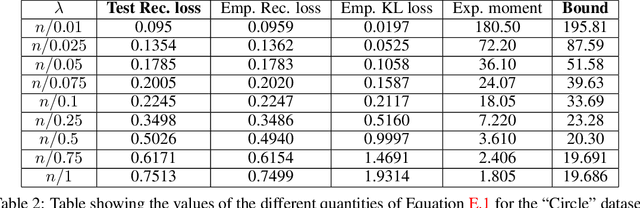

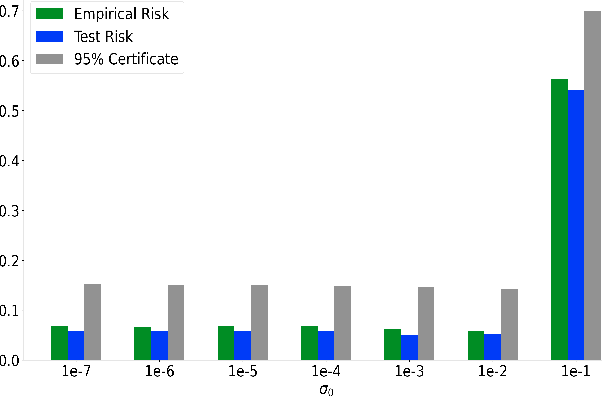

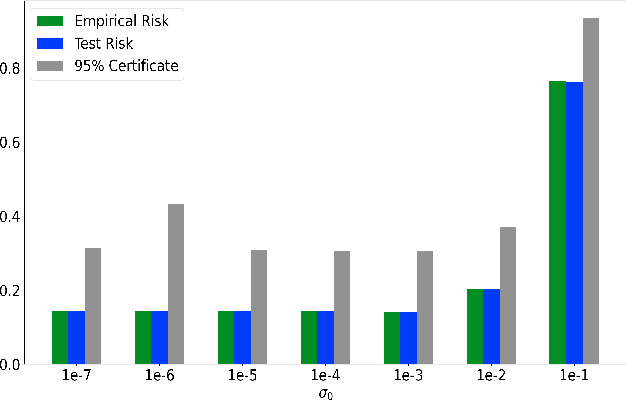

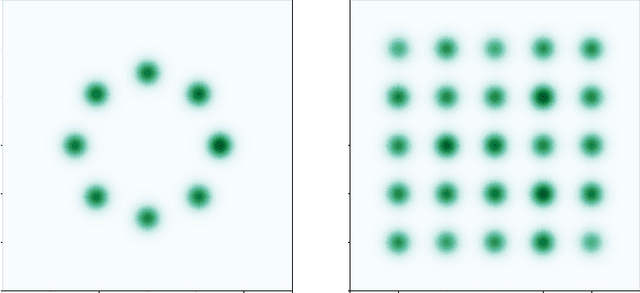

Abstract: Since their inception, Variational Autoencoders (VAEs) have become central in machine learning. Despite their widespread use, numerous questions regarding their theoretical properties remain open. Using PAC-Bayesian theory, this work develops statistical guarantees for VAEs. First, we derive the first PAC-Bayesian bound for posterior distributions conditioned on individual samples from the data-generating distribution. Then, we utilize this result to develop generalization guarantees for the VAE's reconstruction loss, as well as upper bounds on the distance between the input and the regenerated distributions. More importantly, we provide upper bounds on the Wasserstein distance between the input distribution and the distribution defined by the VAE's generative model.

PAC-Bayesian Generalization Bounds for Adversarial Generative Models

Feb 17, 2023

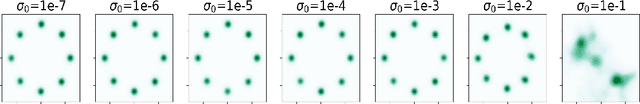

Abstract: We extend PAC-Bayesian theory to generative models and develop generalization bounds for models based on the Wasserstein distance and the total variation distance. Our first result on the Wasserstein distance assumes the instance space is bounded, while our second result takes advantage of dimensionality reduction. Our results naturally apply to Wasserstein GANs and Energy-Based GANs, and our bounds provide new training objectives for these two. Although our work is mainly theoretical, we perform numerical experiments showing non-vacuous generalization bounds for Wasserstein GANs on synthetic datasets.
