Siddhant Srivastava

BotNet Detection On Social Media

Oct 12, 2021

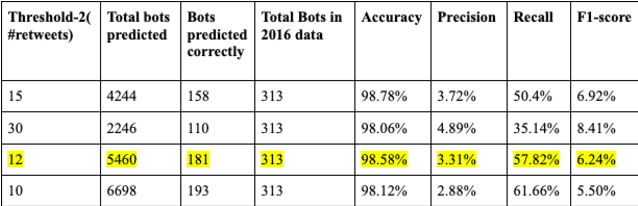

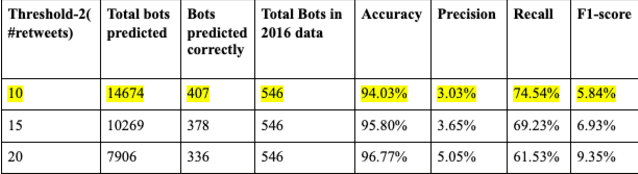

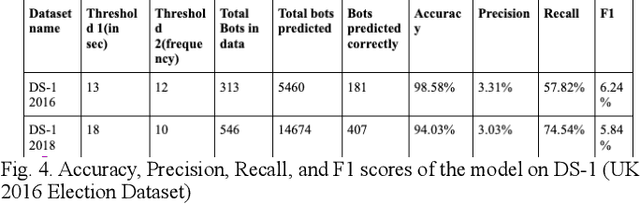

Abstract: Given the popularity of social media and its reputation as a platform that encourages free speech, it has become an open playground for bot accounts that try to manipulate other users. Social bots not only learn to imitate human conversation, manners, and presence, but also manipulate public opinion, act as scammers, manipulate stock markets, and so on. There has been evidence of bots manipulating election results, which can be a serious threat to a whole nation and hence to the whole world. Identifying and preventing the campaigns that create or release such bots has therefore become critical to tackling the problem at its source. Our goal is to leverage semantic web mining techniques to identify the bots or fake accounts involved in these activities.

Self-attention based end-to-end Hindi-English Neural Machine Translation

Sep 21, 2019

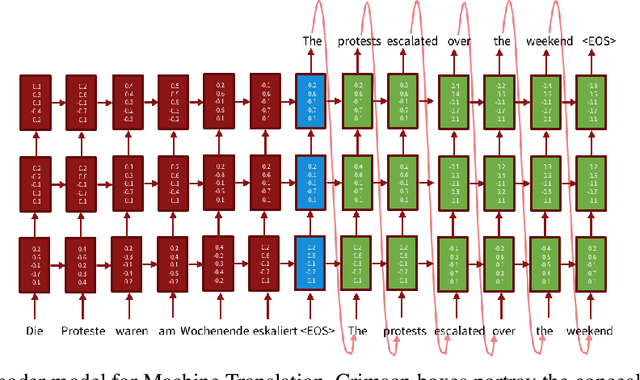

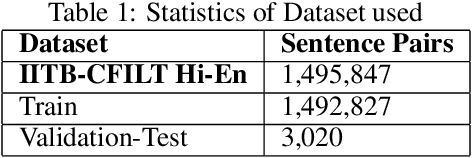

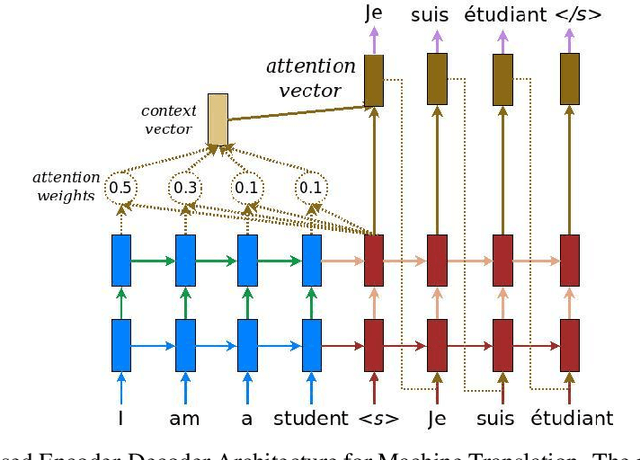

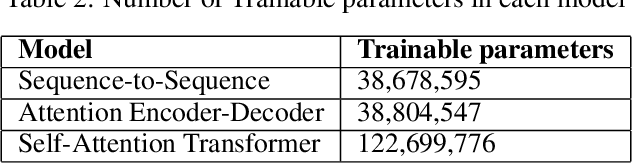

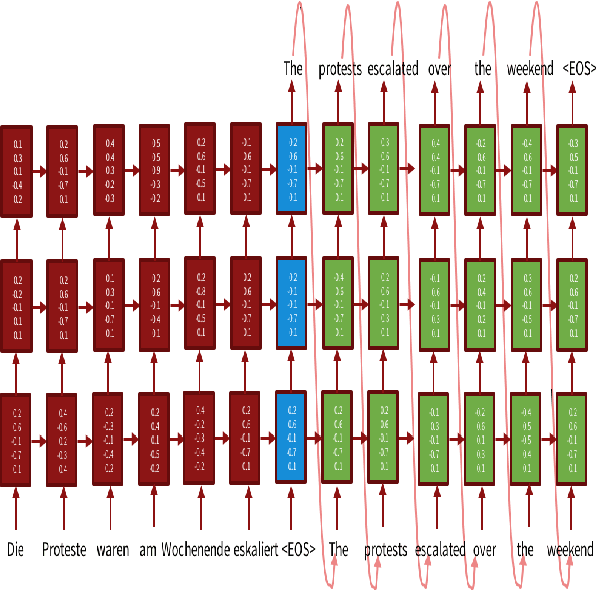

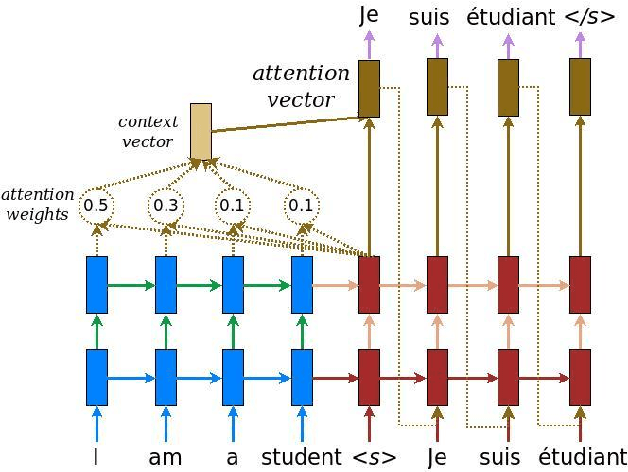

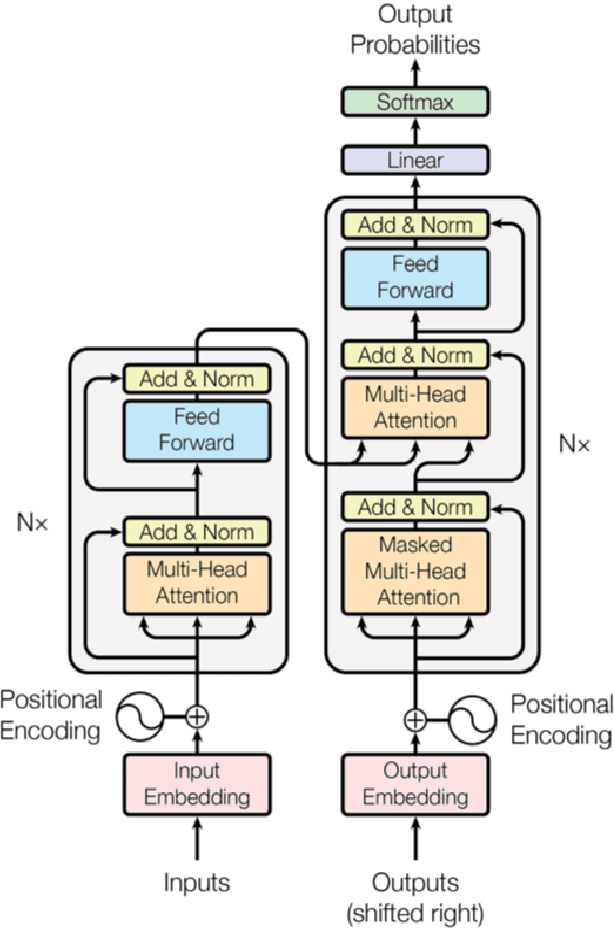

Abstract: Machine Translation (MT) is an area of study in Natural Language Processing that deals with the automatic translation of human language, from one language to another, by computer. With a rich research history spanning nearly three decades, machine translation is one of the most sought-after areas of research in the computational linguistics community. As part of this master's thesis, the main focus is on the deep-learning-based methods that have made significant progress in recent years and are becoming the de facto method in MT. We point out the recent advances in neural translation models and the domains in which NMT has replaced conventional SMT models, and we mention future avenues in the field. We then propose an end-to-end self-attention transformer network for Neural Machine Translation, trained on a Hindi-English parallel corpus, and compare its performance with other state-of-the-art models, namely encoder-decoder and attention-based encoder-decoder neural models, on the basis of BLEU. We conclude with a comparative analysis of the three models.

Machine Translation : From Statistical to modern Deep-learning practices

Dec 11, 2018

Abstract: Machine translation (MT) is an area of study in Natural Language Processing that deals with the automatic translation of human language, from one language to another, by computer. With a rich research history spanning nearly three decades, machine translation is one of the most sought-after areas of research in the linguistics and computational community. In this paper, we investigate the deep-learning-based models that have achieved substantial progress in recent years and are becoming the prominent method in MT. We discuss the two main deep-learning-based machine translation approaches: one at the component or domain level, which leverages deep learning models to enhance the efficacy of Statistical Machine Translation (SMT), and end-to-end deep learning models, which use neural networks to find correspondences between the source and target languages using the encoder-decoder architecture. We conclude by providing a timeline of the major research problems solved so far and a comprehensive overview of present areas of research in Neural Machine Translation.
