Shweta Patel

Improving Bias in Facial Attribute Classification: A Combined Impact of KL Divergence induced Loss Function and Dual Attention

Oct 15, 2024

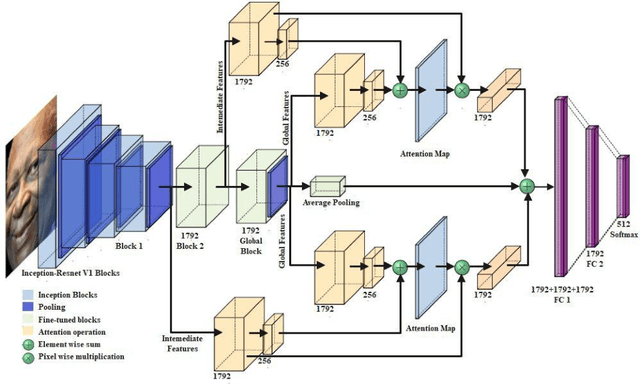

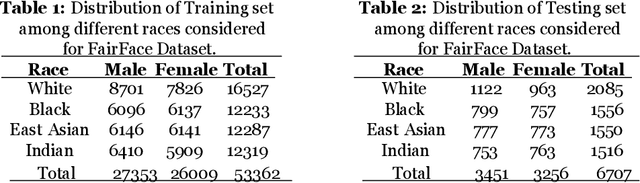

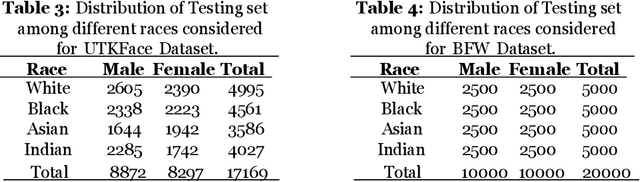

Abstract: AI-based facial recognition systems must produce fair predictions and perform equally well across all demographic groups. Earlier systems often exhibited demographic bias, particularly in gender and racial classification, with lower accuracy for women and individuals with darker skin tones. To address this and promote fairness in facial recognition, researchers have introduced several bias-mitigation techniques for gender classification and related algorithms. However, many challenges remain, including data diversity, the trade-off between fairness and accuracy, disparity across groups, and bias measurement. This paper presents a method that combines a dual attention mechanism with a pre-trained Inception-ResNet V1 model, trained with a cross-entropy loss function augmented by KL-divergence regularization. The approach reduces bias while improving accuracy, and transfer learning improves computational efficiency. The experimental results show significant improvements in both fairness and classification accuracy, a promising advance in addressing bias and enhancing the reliability of facial recognition systems.
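As a rough illustration of how a cross-entropy loss might be combined with a KL-divergence regularizer, the following is a minimal sketch in plain Python. The abstract does not specify how the KL term is defined, so this sketch assumes one plausible form: penalizing the divergence between each demographic group's mean predicted distribution and the batch-wide mean. The function names, the `groups` argument, and the weight `lam` are all hypothetical and are not taken from the paper; the attention mechanism and backbone are not shown.

```python
import math

def softmax(logits):
    """Convert a list of logits into a probability distribution."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(probs, label, eps=1e-12):
    """Negative log-likelihood of the true class."""
    return -math.log(probs[label] + eps)

def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions of equal length."""
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q))

def mean_distribution(rows):
    """Element-wise mean of a list of probability vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]

def combined_loss(batch_logits, labels, groups, lam=0.1):
    """Mean cross-entropy plus lam times a group-level KL penalty.

    ASSUMPTION: the penalty sums KL(group mean || overall mean) over the
    demographic groups in the batch. The paper's actual regularizer may
    be defined differently.
    """
    probs = [softmax(z) for z in batch_logits]
    ce = sum(cross_entropy(p, y) for p, y in zip(probs, labels)) / len(labels)
    overall = mean_distribution(probs)
    kl = 0.0
    for g in sorted(set(groups)):
        group_mean = mean_distribution(
            [p for p, gi in zip(probs, groups) if gi == g]
        )
        kl += kl_divergence(group_mean, overall)
    return ce + lam * kl

# Hypothetical batch: four samples, two classes, two demographic groups.
logits = [[2.0, 0.5], [0.3, 1.7], [1.0, 1.0], [0.2, 2.2]]
labels = [0, 1, 0, 1]
groups = ["a", "a", "b", "b"]
loss = combined_loss(logits, labels, groups, lam=0.5)
```

Under this assumed formulation, setting `lam` to zero recovers plain cross-entropy, and larger values push the per-group prediction distributions toward one another at some possible cost in raw accuracy.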
