Shiyi Jiang

Exploration of Low-Power Flexible Stress Monitoring Classifiers for Conformal Wearables

Aug 27, 2025Abstract:Conventional stress monitoring relies on episodic, symptom-focused interventions, missing the need for continuous, accessible, and cost-efficient solutions. State-of-the-art approaches use rigid, silicon-based wearables, which, though capable of multitasking, are not optimized for lightweight, flexible wear, limiting their practicality for continuous monitoring. In contrast, flexible electronics (FE) offer flexibility and low manufacturing costs, enabling real-time stress monitoring circuits. However, implementing complex circuits like machine learning (ML) classifiers in FE is challenging due to integration and power constraints. Previous research has explored flexible biosensors and ADCs, but classifier design for stress detection remains underexplored. This work presents the first comprehensive design space exploration of low-power, flexible stress classifiers. We cover various ML classifiers, feature selection, and neural simplification algorithms, with over 1200 flexible classifiers. To optimize hardware efficiency, fully customized circuits with low-precision arithmetic are designed in each case. Our exploration provides insights into designing real-time stress classifiers that offer higher accuracy than current methods, while being low-cost, conformable, and ensuring low power and compact size.

Defending Against Gradient Inversion Attacks for Biomedical Images via Learnable Data Perturbation

Mar 19, 2025

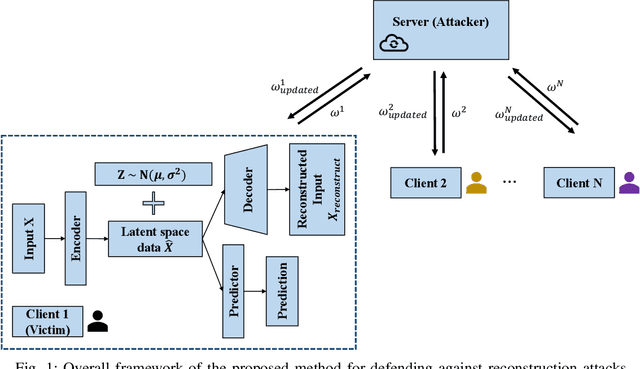

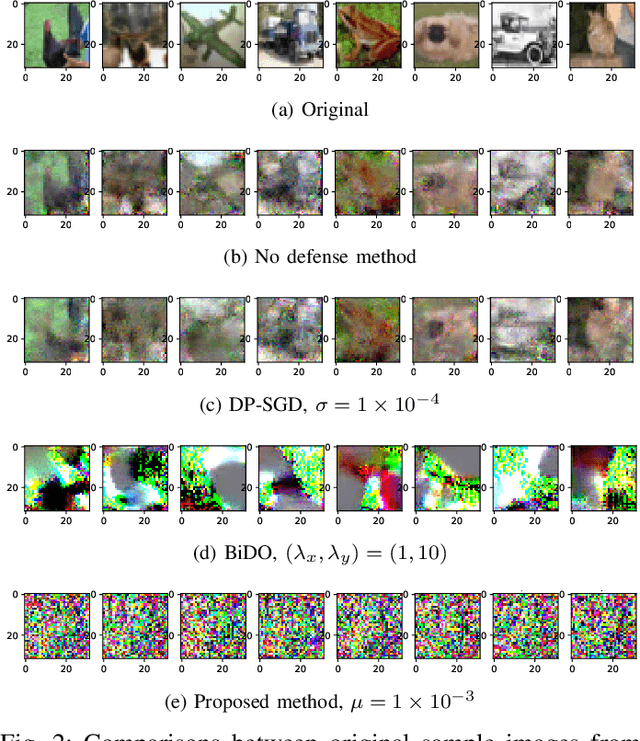

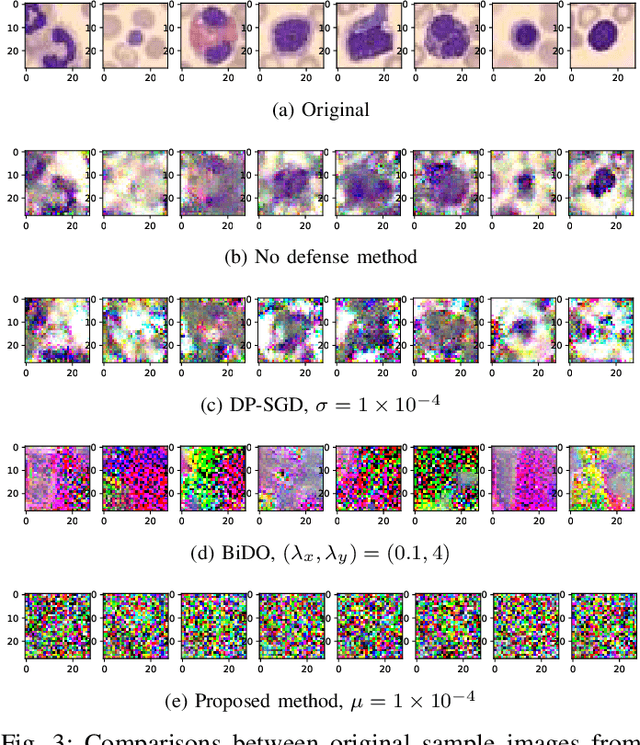

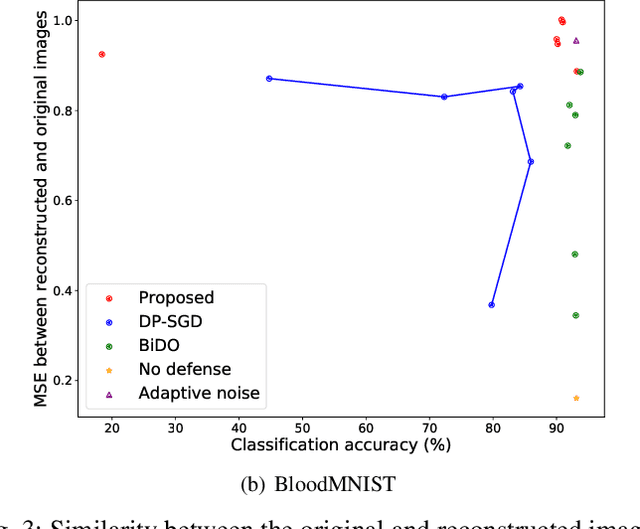

Abstract:The increasing need for sharing healthcare data and collaborating on clinical research has raised privacy concerns. Health information leakage due to malicious attacks can lead to serious problems such as misdiagnoses and patient identification issues. Privacy-preserving machine learning (PPML) and privacy-enhancing technologies, particularly federated learning (FL), have emerged in recent years as innovative solutions to balance privacy protection with data utility; however, they also suffer from inherent privacy vulnerabilities. Gradient inversion attacks constitute major threats to data sharing in federated learning. Researchers have proposed many defenses against gradient inversion attacks. However, current defense methods for healthcare data lack generalizability, i.e., existing solutions may not be applicable to data from a broader range of populations. In addition, most existing defense methods are tested using non-healthcare data, which raises concerns about their applicability to real-world healthcare systems. In this study, we present a defense against gradient inversion attacks in federated learning. We achieve this using latent data perturbation and minimax optimization, utilizing both general and medical image datasets. Our method is compared to two baselines, and the results show that our approach can outperform the baselines with a reduction of 12.5% in the attacker's accuracy in classifying reconstructed images. The proposed method also yields an increase of over 12.4% in Mean Squared Error (MSE) between the original and reconstructed images at the same level of model utility of around 90% client classification accuracy. The results suggest the potential of a generalizable defense for healthcare data.

Fast and Reliable Generation of EHR Time Series via Diffusion Models

Oct 23, 2023

Abstract:Electronic Health Records (EHRs) are rich sources of patient-level data, including laboratory tests, medications, and diagnoses, offering valuable resources for medical data analysis. However, concerns about privacy often restrict access to EHRs, hindering downstream analysis. Researchers have explored various methods for generating privacy-preserving EHR data. In this study, we introduce a new method for generating diverse and realistic synthetic EHR time series data using Denoising Diffusion Probabilistic Models (DDPM). We conducted experiments on six datasets, comparing our proposed method with seven existing methods. Our results demonstrate that our approach significantly outperforms all existing methods in terms of data utility while requiring less training effort. Our approach also enhances downstream medical data analysis by providing diverse and realistic synthetic EHR data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge