Shirley Moore

Reducing Communication Overhead in Federated Learning for Network Anomaly Detection with Adaptive Client Selection

Mar 19, 2025

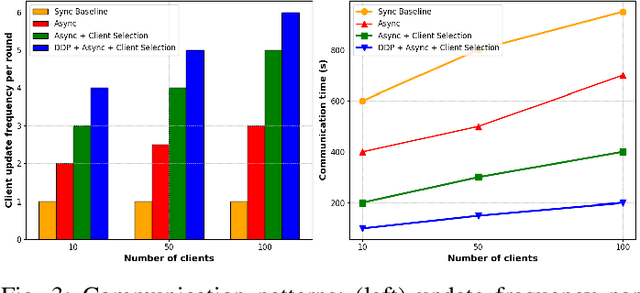

Abstract:Communication overhead in federated learning (FL) poses a significant challenge for network anomaly detection systems, where diverse client configurations and network conditions impact efficiency and detection accuracy. Existing approaches attempt optimization individually but struggle to balance reduced overhead with performance. This paper presents an adaptive FL framework combining batch size optimization, client selection, and asynchronous updates for efficient anomaly detection. Using UNSW-NB15 for general network traffic and ROAD for automotive networks, our framework reduces communication overhead by 97.6% (700.0s to 16.8s) while maintaining comparable accuracy (95.10% vs. 95.12%). The Mann-Whitney U test confirms significant improvements (p < 0.05). Profiling analysis reveals efficiency gains via reduced GPU operations and memory transfers, ensuring robust detection across varying client conditions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge