Sharmin Majumder

Multitasking Deep Learning Model for Detection of Five Stages of Diabetic Retinopathy

Mar 06, 2021

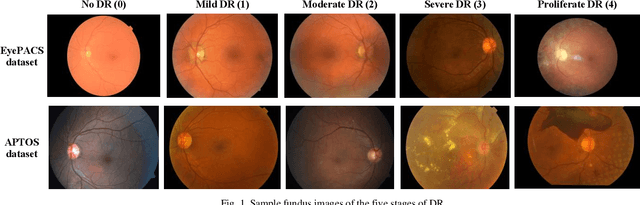

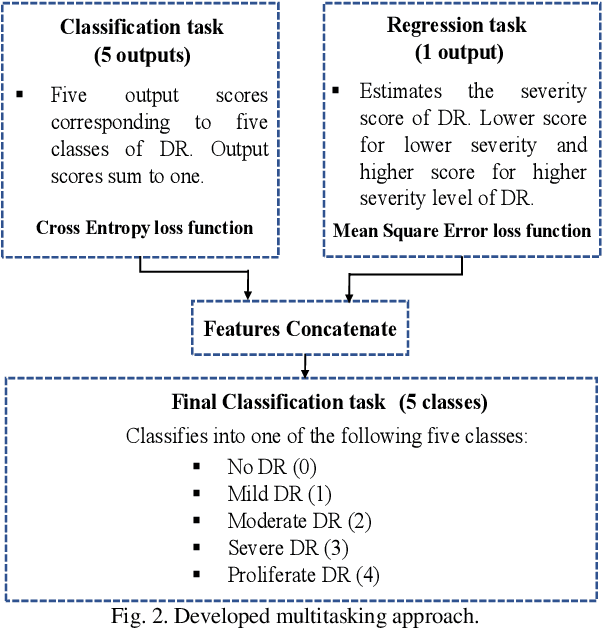

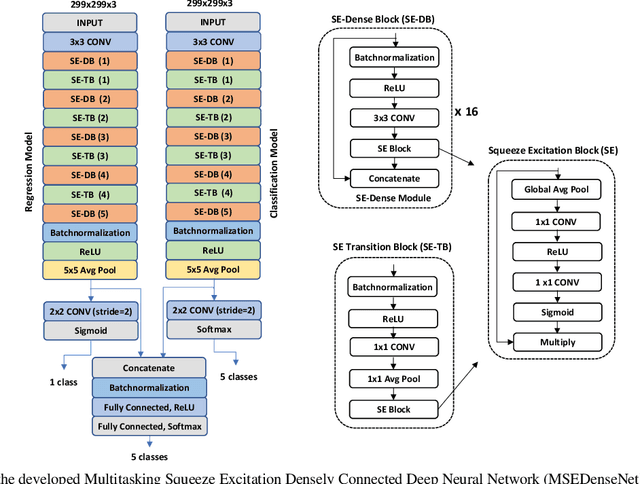

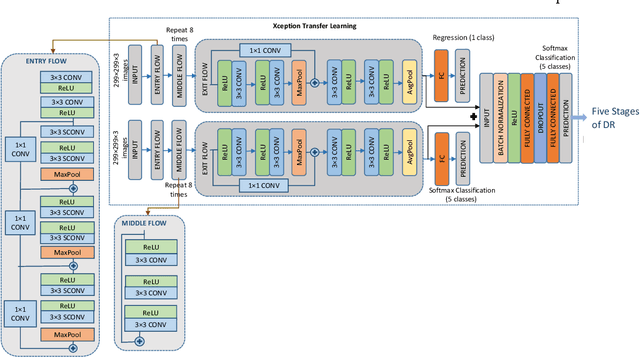

Abstract:This paper presents a multitask deep learning model to detect all the five stages of diabetic retinopathy (DR) consisting of no DR, mild DR, moderate DR, severe DR, and proliferate DR. This multitask model consists of one classification model and one regression model, each with its own loss function. Noting that a higher severity level normally occurs after a lower severity level, this dependency is taken into consideration by concatenating the classification and regression models. The regression model learns the inter-dependency between the stages and outputs a score corresponding to the severity level of DR generating a higher score for a higher severity level. After training the regression model and the classification model separately, the features extracted by these two models are concatenated and inputted to a multilayer perceptron network to classify the five stages of DR. A modified Squeeze Excitation Densely Connected deep neural network is developed to implement this multitasking approach. The developed multitask model is then used to detect the five stages of DR by examining the two large Kaggle datasets of APTOS and EyePACS. A multitasking transfer learning model based on Xception network is also developed to evaluate the proposed approach by classifying DR into five stages. It is found that the developed model achieves a weighted Kappa score of 0.90 and 0.88 for the APTOS and EyePACS datasets, respectively, higher than any existing methods for detection of the five stages of DR

Vision and Inertial Sensing Fusion for Human Action Recognition : A Review

Aug 02, 2020

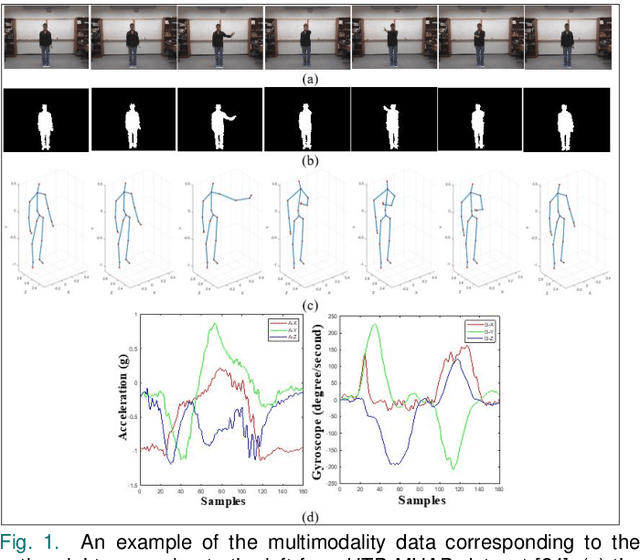

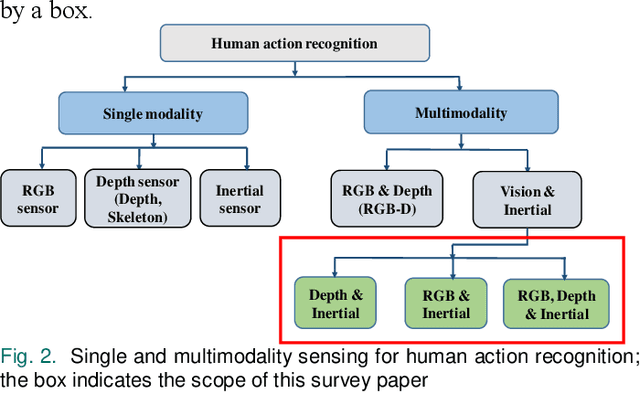

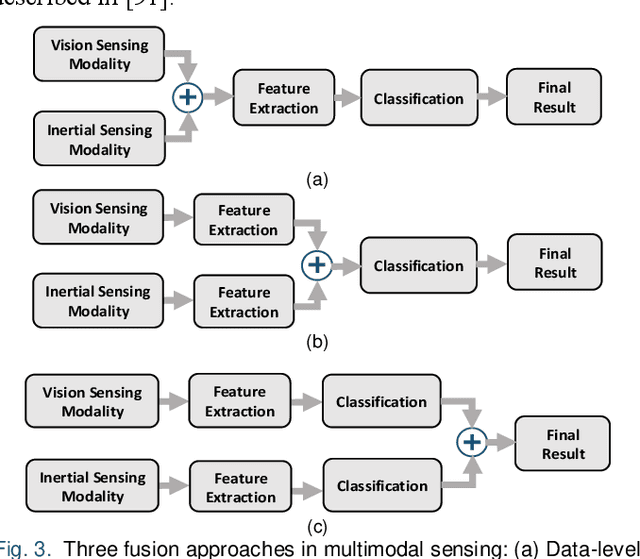

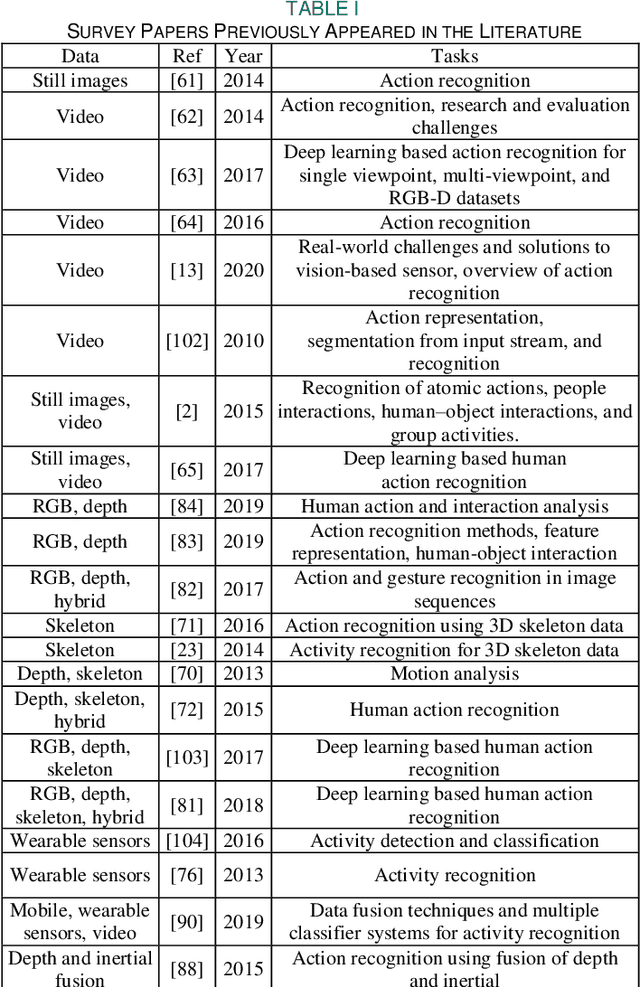

Abstract:Human action recognition is used in many applications such as video surveillance, human computer interaction, assistive living, and gaming. Many papers have appeared in the literature showing that the fusion of vision and inertial sensing improves recognition accuracies compared to the situations when each sensing modality is used individually. This paper provides a survey of the papers in which vision and inertial sensing are used simultaneously within a fusion framework in order to perform human action recognition. The surveyed papers are categorized in terms of fusion approaches, features, classifiers, as well as multimodality datasets considered. Challenges as well as possible future directions are also stated for deploying the fusion of these two sensing modalities under realistic conditions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge