Shahriar Rezghi Shirsavar

University of Tehran, Iran

Spyker: High-performance Library for Spiking Deep Neural Networks

Jan 31, 2023

Abstract: Spiking neural networks (SNNs) have been recently brought to light due to their promising capabilities. SNNs simulate the brain with higher biological plausibility compared to previous generations of neural networks. Learning with fewer samples and consuming less power are among the key features of these networks. However, the theoretical advantages of SNNs have not been seen in practice due to the slowness of simulation tools and the impracticality of the proposed network structures. In this work, we implement a high-performance library named Spyker using C++/CUDA from scratch that outperforms its predecessor. Several SNNs are implemented in this work with different learning rules (spike-timing-dependent plasticity and reinforcement learning) using Spyker that achieve significantly better runtimes, to prove the practicality of the library in the simulation of large-scale networks. To our knowledge, no such tools have been developed to simulate large-scale spiking neural networks with high performance using a modular structure. Furthermore, a comparison of the represented stimuli extracted from Spyker to recorded electrophysiology data is performed to demonstrate the applicability of SNNs in describing the underlying neural mechanisms of the brain functions. The aim of this library is to take a significant step toward uncovering the true potential of the brain computations using SNNs.

Models Developed for Spiking Neural Networks

Dec 08, 2022

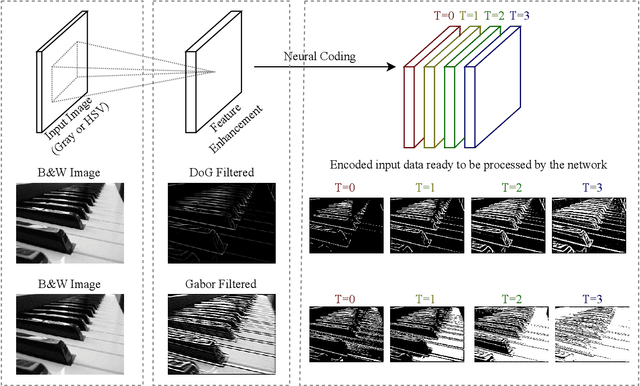

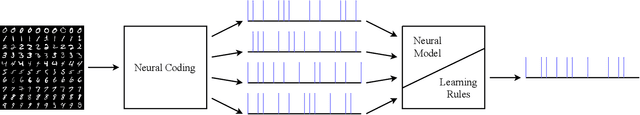

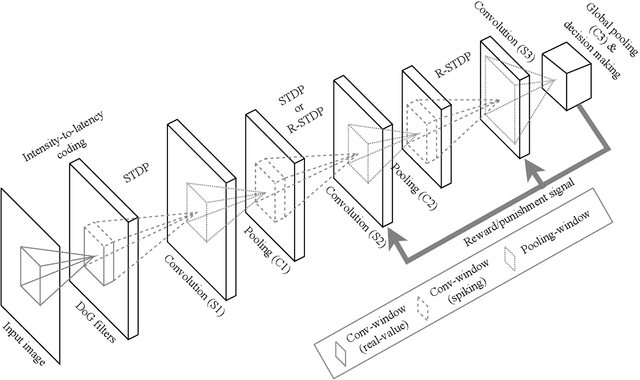

Abstract: The emergence of deep neural networks (DNNs) has once again drawn enormous attention to artificial neural networks (ANNs). They have become the state-of-the-art models and have won different machine learning challenges. Although these networks are inspired by the brain, they lack biological plausibility, and they have structural differences compared to the brain. Spiking neural networks (SNNs) have been around for a long time, and they have been investigated to understand the dynamics of the brain. However, their application in real-world and complicated machine learning tasks was limited. Recently, they have shown great potential in solving such tasks. Due to their energy efficiency and temporal dynamics, their future development holds much promise. In this work, we reviewed the structures and performances of SNNs on image classification tasks. The comparisons illustrate that these networks show great capabilities for more complicated problems. Furthermore, the simple learning rules developed for SNNs, such as STDP and R-STDP, can be a potential alternative to the backpropagation algorithm used in DNNs.

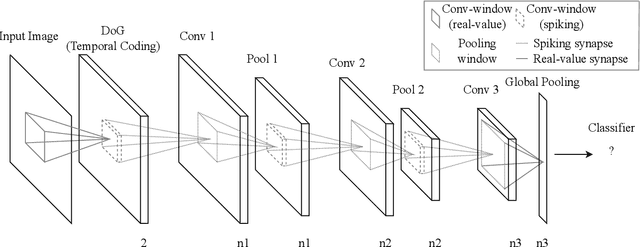

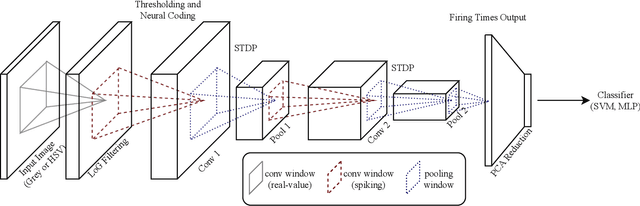

A Faster Approach to Spiking Deep Convolutional Neural Networks

Oct 31, 2022

Abstract: Spiking neural networks (SNNs) have closer dynamics to the brain than current deep neural networks. Their low power consumption and sample efficiency make these networks interesting. Recently, several deep convolutional spiking neural networks have been proposed. These networks aim to increase biological plausibility while creating powerful tools to be applied to machine learning tasks. Here, we suggest a network structure based on previous work to improve network runtime and accuracy. Improvements to the network include reducing training iterations to only once, effectively using principal component analysis (PCA) dimension reduction, weight quantization, timed outputs for classification, and better hyperparameter tuning. Furthermore, the preprocessing step is changed to allow the processing of colored images instead of only black and white to improve accuracy. The proposed structure fractionalizes runtime and introduces an efficient approach to deep convolutional SNNs.
