Shahad Al-Khalifa

A Survey of Large Language Models for Arabic Language and its Dialects

Oct 26, 2024

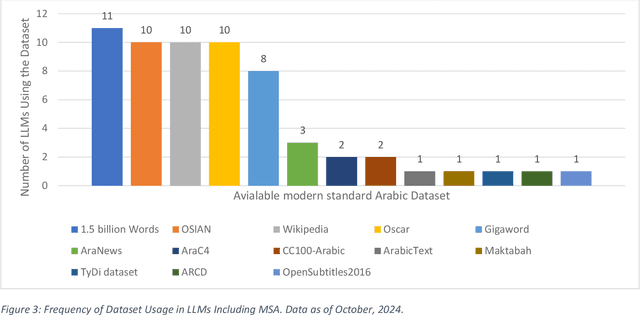

Abstract: This survey offers a comprehensive overview of Large Language Models (LLMs) designed for the Arabic language and its dialects. It covers key architectures, including encoder-only, decoder-only, and encoder-decoder models, along with the datasets used for pre-training, spanning Classical Arabic, Modern Standard Arabic, and Dialectal Arabic. The study also explores monolingual, bilingual, and multilingual LLMs, analyzing their architectures and performance across downstream tasks such as sentiment analysis, named entity recognition, and question answering. Furthermore, it assesses the openness of Arabic LLMs based on factors such as the availability of source code, training data, model weights, and documentation. The survey highlights the need for more diverse dialectal datasets and emphasizes the importance of openness for research reproducibility and transparency. It concludes by identifying key challenges and opportunities for future research and stressing the need for more inclusive and representative models.

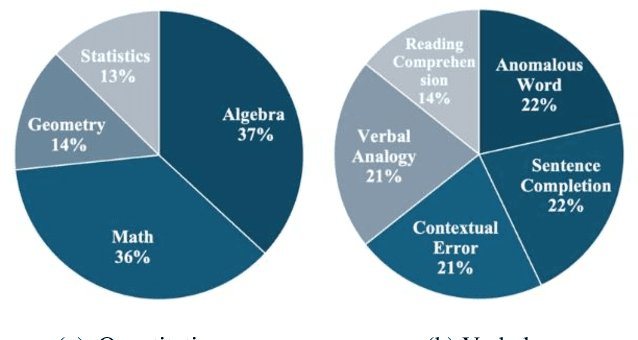

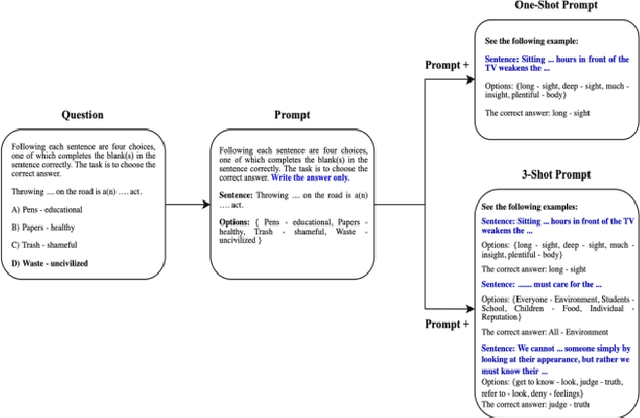

The Qiyas Benchmark: Measuring ChatGPT Mathematical and Language Understanding in Arabic

Jun 28, 2024

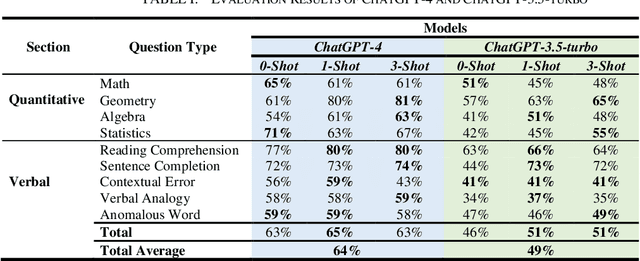

Abstract: Despite the growing importance of Arabic as a global language, there is a notable lack of language models pre-trained exclusively on Arabic data. This shortage has led to limited benchmarks available for assessing language model performance in Arabic. To address this gap, we introduce two novel benchmarks designed to evaluate models' mathematical reasoning and language understanding abilities in Arabic. These benchmarks are derived from a General Aptitude Test (GAT) called the Qiyas exam, a standardized test widely used for university admissions in Saudi Arabia. For validation purposes, we assess the performance of ChatGPT-3.5-turbo and ChatGPT-4 on our benchmarks. Our findings reveal that these benchmarks pose a significant challenge, with ChatGPT-4 achieving an overall average accuracy of 64%, while ChatGPT-3.5-turbo achieved an overall accuracy of 49% across the various question types in the Qiyas benchmark. We believe the release of these benchmarks will pave the way for enhancing the mathematical reasoning and language understanding capabilities of future models tailored for the low-resource Arabic language.
