Serge Wich

AI-Driven Real-Time Monitoring of Ground-Nesting Birds: A Case Study on Curlew Detection Using YOLOv10

Nov 22, 2024

Abstract: Effective monitoring of wildlife is critical for assessing biodiversity and ecosystem health, as declines in key species often signal significant environmental changes. Birds, particularly ground-nesting species, serve as important ecological indicators due to their sensitivity to environmental pressures. Camera traps have become indispensable tools for monitoring nesting bird populations, enabling data collection across diverse habitats. However, the manual processing and analysis of such data are resource-intensive, often delaying the delivery of actionable conservation insights. This study presents an AI-driven approach for real-time species detection, focusing on the curlew (Numenius arquata), a ground-nesting bird experiencing significant population declines. A custom-trained YOLOv10 model was developed to detect and classify curlews and their chicks using 3/4G-enabled cameras linked to the Conservation AI platform. The system processes camera trap data in real time, significantly enhancing monitoring efficiency. Across 11 nesting sites in Wales, the model achieved high performance, with a sensitivity of 90.56%, specificity of 100%, and F1-score of 95.05% for curlew detections, and a sensitivity of 92.35%, specificity of 100%, and F1-score of 96.03% for curlew chick detections. These results demonstrate the capability of AI-driven monitoring systems to deliver accurate, timely data for biodiversity assessments, facilitating early conservation interventions and advancing the use of technology in ecological research.
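
The reported F1-scores can be sanity-checked from the other two figures. The sketch below assumes the standard definitions and is not taken from the paper: a specificity of 100% means no false positives, so precision is 1 and F1 reduces to the harmonic mean of 1 and the sensitivity. The function name `f1_from_sensitivity` is hypothetical.

```python
def f1_from_sensitivity(sensitivity: float, precision: float = 1.0) -> float:
    """Harmonic mean of precision and sensitivity (recall).

    Assumption: precision defaults to 1.0 because a specificity of 100%
    implies zero false positives.
    """
    return 2 * precision * sensitivity / (precision + sensitivity)


# Sensitivities as reported in the abstract, expressed as fractions.
reported = {"curlew": 0.9056, "curlew chick": 0.9235}

f1_scores = {label: f1_from_sensitivity(s) for label, s in reported.items()}
for label, f1 in f1_scores.items():
    print(f"{label}: F1 = {100 * f1:.2f}%")
```

Under these assumptions the computed values agree with the reported 95.05% and 96.03% to within the rounding of the input sensitivities.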

Towards Context-Rich Automated Biodiversity Assessments: Deriving AI-Powered Insights from Camera Trap Data

Nov 21, 2024

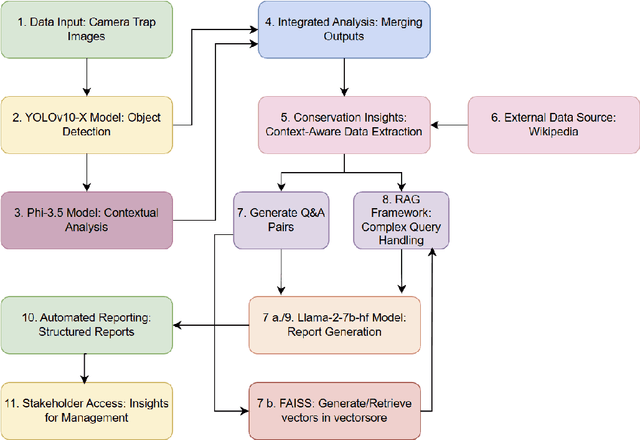

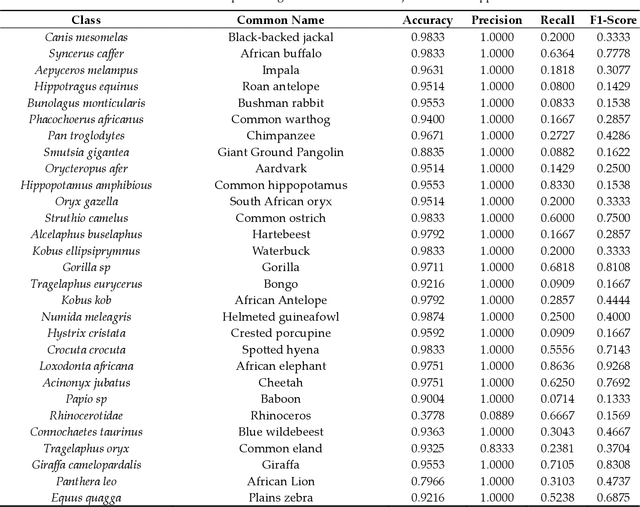

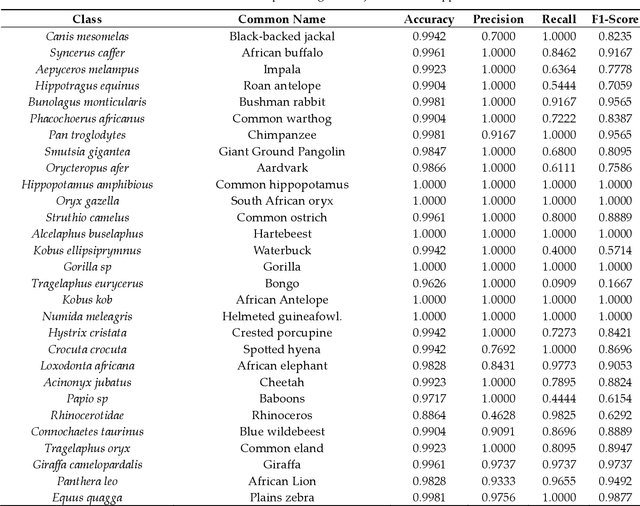

Abstract: Camera traps offer enormous new opportunities in ecological studies, but current automated image analysis methods often lack the contextual richness needed to support impactful conservation outcomes. Here we present an integrated approach that combines deep learning-based vision and language models to improve ecological reporting using data from camera traps. We introduce a two-stage system: YOLOv10-X to localise and classify species (mammals and birds) within images, and a Phi-3.5-vision-instruct model to read YOLOv10-X bounding box labels to identify species, overcoming its limitation with hard-to-classify objects in images. Additionally, Phi-3.5 detects broader variables, such as vegetation type and time of day, providing rich ecological and environmental context to YOLO's species detection output. When combined, this output is processed by the model's natural language system to answer complex queries, and retrieval-augmented generation (RAG) is employed to enrich responses with external information, like species weight and IUCN status (information that cannot be obtained through direct visual analysis). This information is used to automatically generate structured reports, providing biodiversity stakeholders with deeper insights into, for example, species abundance, distribution, animal behaviour, and habitat selection. Our approach delivers contextually rich narratives that aid in wildlife management decisions. By providing contextually rich insights, our approach not only reduces manual effort but also supports timely decision-making in conservation, potentially shifting efforts from reactive to proactive management.

Removing Human Bottlenecks in Bird Classification Using Camera Trap Images and Deep Learning

May 03, 2023

Abstract: Birds are important indicators for monitoring both biodiversity and habitat health; they also play a crucial role in ecosystem management. Decline in bird populations can result in reduced ecosystem services, including seed dispersal, pollination and pest control. Accurate and long-term monitoring of birds to identify species of concern while measuring the success of conservation interventions is essential for ecologists. However, monitoring is time-consuming, costly and often difficult to manage over long durations and at meaningfully large spatial scales. Technology such as camera traps, acoustic monitors and drones provides methods for non-invasive monitoring. There are two main problems with using camera traps for monitoring: a) cameras generate many images, making it difficult to process and analyse the data in a timely manner; and b) the high proportion of false positives hinders the processing and analysis for reporting. In this paper, we outline an approach for overcoming these issues by utilising deep learning for real-time classification of bird species and automated removal of false positives in camera trap data. Images are classified in real time using a Faster-RCNN architecture. Images are transmitted by 3/4G cameras and processed using Graphics Processing Units (GPUs) to provide conservationists with key detection metrics, thereby removing the requirement for manual observations. Our models achieved an average sensitivity of 88.79%, a specificity of 98.16% and accuracy of 96.71%. This demonstrates the effectiveness of using deep learning for automatic bird monitoring.

Empowering Wildlife Guardians: An Equitable Digital Stewardship and Reward System for Biodiversity Conservation using Deep Learning and 3/4G Camera Traps

Apr 25, 2023

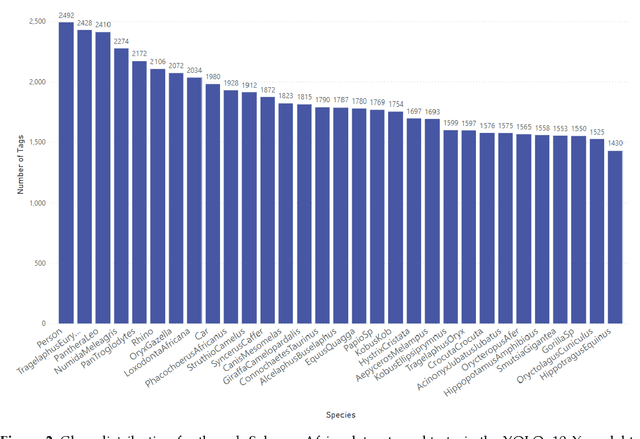

Abstract: The biodiversity of our planet is under threat, with approximately one million species expected to become extinct within decades. The reason: negative human actions, which include hunting, overfishing, pollution, and the conversion of land for urbanisation and agricultural purposes. Despite significant investment from charities and governments for activities that benefit nature, global wildlife populations continue to decline. Local wildlife guardians have historically played a critical role in global conservation efforts and have shown their ability to achieve sustainability at various levels. In 2021, COP26 recognised their contributions and pledged US$1.7 billion per year; however, this is a fraction of the global biodiversity budget available (between US$124 billion and US$143 billion annually) given they protect 80% of the planet's biodiversity. This paper proposes a radical new solution based on "Interspecies Money," where animals own their own money. Creating a digital twin for each species allows animals to dispense funds to their guardians for the services they provide. For example, a rhinoceros may release a payment to its guardian each time it is detected in a camera trap as long as it remains alive and well. To test the efficacy of this approach, 27 camera traps were deployed over a 400 km² area in Welgevonden Game Reserve in Limpopo Province in South Africa. The motion-triggered camera traps were operational for ten months and, using deep learning, we managed to capture images of 12 distinct animal species. For each species, a makeshift bank account was set up and credited with £100. Each time an animal was captured by a camera and successfully classified, 1 penny (an arbitrary amount; mechanisms still need to be developed to determine the real value of species) was transferred from the animal account to its associated guardian.
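
The payment rule described above (a £100 starting credit per species, 1 penny moved to the guardian per classified detection) can be illustrated with a minimal ledger sketch. This is an illustration only, not the paper's implementation: the `Account` class, the `pay_on_detection` function, and the choice to refuse a payment when the species account is empty are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Account:
    """A hypothetical ledger entry; balances are held in whole pence."""
    owner: str
    balance_pence: int = 0


def pay_on_detection(species: Account, guardian: Account, amount_pence: int = 1) -> bool:
    """Move `amount_pence` from the species account to its guardian.

    Returns False and changes nothing if the species account cannot cover
    the payment (an assumed rule; the abstract does not say what happens).
    """
    if species.balance_pence < amount_pence:
        return False
    species.balance_pence -= amount_pence
    guardian.balance_pence += amount_pence
    return True


# £100 starting credit, as in the abstract, expressed in pence.
rhino = Account("rhinoceros", balance_pence=100 * 100)
guardian = Account("guardian")

# Three hypothetical detections that were successfully classified.
for _ in range(3):
    pay_on_detection(rhino, guardian)

print(rhino.balance_pence, guardian.balance_pence)  # 9997 3
```

Holding balances as integer pence avoids floating-point rounding when many 1-penny transfers accumulate.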

Conservation AI: Live Stream Analysis for the Detection of Endangered Species Using Convolutional Neural Networks and Drone Technology

Oct 16, 2019

Abstract: Many different species are adversely affected by poaching. In response to this escalating crisis, efforts to stop poaching using hidden cameras, drones and DNA tracking have been implemented with varying degrees of success. Limited resources, costs and logistical limitations are often the cause of most unsuccessful poaching interventions. The study presented in this paper outlines a flexible and interoperable framework for the automatic detection of animals and poaching activity to facilitate early intervention practices. Using a robust deep learning pipeline, a convolutional neural network is trained and implemented to detect rhinos and cars (considered an important tool in poaching for fast access and artefact transportation in natural habitats) within live video streamed from drones. Transfer learning with the Faster RCNN Resnet 101 is performed to train a custom model with 350 images of rhinos and 350 images of cars. Inference is performed using a frame sampling technique to control the required trade-off between precision and processing speed and to maintain synchronisation with the live feed. Inference models are hosted on a web platform using Flask web serving, OpenCV and TensorFlow 1.13. Video streams are transmitted from a DJI Mavic Pro 2 drone using the Real-Time Messaging Protocol (RTMP). The best trained Faster RCNN model achieved a mAP of 0.83 @IOU 0.50 and 0.69 @IOU 0.75. In comparison, an SSD-MobileNet model trained under the same experimental conditions achieved a mAP of 0.55 @IOU 0.50 and 0.27 @IOU 0.75. The results demonstrate that using a FRCNN and off-the-shelf drones is a promising and scalable option for a range of conservation projects.
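
The abstract does not specify how frames are sampled, so the following is only a sketch of one simple option: fixed-stride sampling, where every `stride`-th frame is passed to the detector and the rest are skipped so that inference keeps pace with the live feed. The function name `sample_frames` and the stride value are hypothetical, and plain integers stand in for decoded video frames.

```python
from typing import Iterable, Iterator, Tuple, TypeVar

Frame = TypeVar("Frame")


def sample_frames(frames: Iterable[Frame], stride: int) -> Iterator[Tuple[int, Frame]]:
    """Yield (index, frame) for every `stride`-th frame of a stream.

    A larger stride lowers the inference load at the cost of inspecting
    fewer frames; stride=1 processes every frame.
    """
    if stride < 1:
        raise ValueError("stride must be at least 1")
    for index, frame in enumerate(frames):
        if index % stride == 0:
            yield index, frame


# Stand-in for one second of a 30 fps stream; real frames would be images.
stream = range(30)
kept = [index for index, _ in sample_frames(stream, stride=10)]
print(kept)  # [0, 10, 20]
```

In a real pipeline the stride would be tuned against the detector's measured inference time so that the annotated output does not drift behind the incoming stream.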
