Seif-Eddine Benkabou

Fréchet regression for multi-label feature selection with implicit regularization

Dec 24, 2024

Abstract: Fréchet regression extends linear regression to model complex responses in metric spaces, making it particularly relevant for multi-label regression, where each instance can have multiple associated labels. However, variable selection within this framework remains underexplored. In this paper, we propose a novel variable selection method that employs implicit regularization instead of traditional explicit regularization approaches, which can introduce bias. Our method effectively captures nonlinear interactions between predictors and responses while promoting model sparsity. We provide theoretical results demonstrating selection consistency and illustrate the performance of our approach through numerical examples.
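
For background, the standard global Fréchet regression model (not spelled out in the abstract, so the notation here is an assumption) replaces the conditional mean with a weighted Fréchet mean. With predictor $X \in \mathbb{R}^p$, response $Y$ in a metric space $(\Omega, d)$, $\mu = E[X]$ and $\Sigma = \mathrm{Var}(X)$:

$$
m_{\oplus}(x) = \operatorname*{arg\,min}_{\omega \in \Omega} E\left[ s(X, x)\, d^2(Y, \omega) \right],
\qquad
s(X, x) = 1 + (X - \mu)^{\top} \Sigma^{-1} (x - \mu).
$$

When $\Omega = \mathbb{R}$ with the Euclidean distance, this reduces to ordinary linear regression, which is the sense in which Fréchet regression extends it.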

A Two-Fold Patch Selection Approach for Improved 360-Degree Image Quality Assessment

Dec 17, 2024

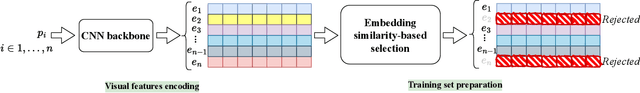

Abstract: This article presents a novel approach to improving the accuracy of 360-degree perceptual image quality assessment (IQA) through a two-fold patch selection process. Our methodology combines visual patch selection with embedding similarity-based refinement. The first stage focuses on selecting patches from 360-degree images using three distinct sampling methods to ensure comprehensive coverage of visual content for IQA. The second stage, which is the core of our approach, employs an embedding similarity-based selection process to filter and prioritize the most informative patches based on the similarity distances between their embeddings. This dual selection mechanism ensures that the training data is both relevant and informative, enhancing the model's learning efficiency. Extensive experiments and statistical analyses using three distance metrics across three benchmark datasets validate the effectiveness of our selection algorithm. The results highlight its potential to deliver robust and accurate 360-degree IQA, with performance gains of up to 4.5% in accuracy and monotonicity of quality score prediction, while using only 40% to 50% of the training patches. These improvements are consistent across various configurations and evaluation metrics, demonstrating the strength of the proposed method. The code for the selection process is available at: https://github.com/sendjasni/patch-selection-360-image-quality.
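
The abstract does not state the exact selection criterion or name the three distance metrics, so the following is only a minimal sketch of what an embedding-distance-based filter could look like. It assumes cosine distance and a hypothetical rule (keep the patches whose mean distance to all other patches is largest); the function names `cosine_distance` and `select_patches` are illustrative and are not taken from the linked repository.

```python
import math

def cosine_distance(a, b):
    """Cosine distance 1 - cos(a, b) between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return 1.0 - dot / (norm_a * norm_b)

def select_patches(embeddings, keep_fraction=0.5):
    """Keep the patches that are, on average, farthest from the others.

    Assumption: "informative" is approximated by a large mean cosine
    distance to the remaining patch embeddings. This is a stand-in
    criterion, not the paper's algorithm.
    """
    n = len(embeddings)
    k = max(1, round(n * keep_fraction))
    scores = []
    for i in range(n):
        total = sum(
            cosine_distance(embeddings[i], embeddings[j])
            for j in range(n) if j != i
        )
        scores.append(total / (n - 1))
    # Rank patch indices by score, highest first, and keep the top k.
    ranked = sorted(range(n), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:k])

# Toy 2-D "embeddings": patches 0 and 1 are near-duplicates.
embeddings = [[1.0, 0.0], [1.0, 0.1], [0.0, 1.0], [-1.0, 0.0]]
kept = select_patches(embeddings, keep_fraction=0.5)
print(kept)
```

With `keep_fraction=0.5` the sketch keeps half of the patches, in line with the 40% to 50% range reported above; a real pipeline would compute the embeddings with a pretrained network rather than by hand.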

Implicit Regularization for Multi-label Feature Selection

Nov 18, 2024

Abstract: In this paper, we address the problem of feature selection in the context of multi-label learning by using a new estimator based on implicit regularization and label embedding. Unlike sparse feature selection methods that use a penalized estimator with explicit regularization terms such as the $\ell_{2,1}$-norm, MCP or SCAD, we propose a simple alternative method via Hadamard product parameterization. To guide the feature selection process, a latent semantics of multi-label information method is adopted as the label embedding. Experimental results on several known benchmark datasets suggest that the proposed estimator suffers much less from extra bias, and may lead to benign overfitting.
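
To illustrate the idea of implicit regularization via Hadamard product parameterization, here is a minimal single-response sketch. It is not the paper's multi-label estimator: it assumes the common form $w = u \odot u - v \odot v$, plain gradient descent on a least-squares loss, a small initialization `alpha`, and early stopping after a fixed number of steps. The function name `hadamard_gd` and the toy data are hypothetical.

```python
def hadamard_gd(X, y, alpha=1e-3, lr=0.1, steps=100):
    """Gradient descent on 0.5 * ||X w - y||^2 with w = u*u - v*v.

    No explicit penalty is used. Sparsity comes from the small
    initialization (alpha) combined with stopping after `steps`
    iterations: coordinates with weak signal grow too slowly to
    leave the neighbourhood of zero.
    """
    n, d = len(X), len(X[0])
    u = [alpha] * d
    v = [alpha] * d
    for _ in range(steps):
        w = [ui * ui - vi * vi for ui, vi in zip(u, v)]
        resid = [sum(X[i][j] * w[j] for j in range(d)) - y[i] for i in range(n)]
        # Gradient of the loss with respect to w: X^T (X w - y).
        g = [sum(X[i][j] * resid[i] for i in range(n)) for j in range(d)]
        # Chain rule: dL/du = 2 * u * g and dL/dv = -2 * v * g.
        u = [ui - lr * 2.0 * ui * gj for ui, gj in zip(u, g)]
        v = [vi + lr * 2.0 * vi * gj for vi, gj in zip(v, g)]
    return [ui * ui - vi * vi for ui, vi in zip(u, v)]

# Toy problem with an identity design: two strong coefficients
# (1.0 and -2.0) plus small noise on the remaining coordinates.
d = 6
X = [[1.0 if i == j else 0.0 for j in range(d)] for i in range(d)]
y = [1.0, -2.0, 0.05, -0.03, 0.0, 0.02]

w_hat = hadamard_gd(X, y)
selected = [j for j, wj in enumerate(w_hat) if abs(wj) > 1e-2]
print(selected)
```

In this toy run the two strong coordinates are fitted almost exactly while the noisy ones stay close to zero, so thresholding `w_hat` selects features 0 and 1 without shrinking their estimates, which is the kind of reduced bias the abstract contrasts with explicit penalties.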
