
Satendra Kumar

Multi-Domain Adaptation in Neural Machine Translation Through Multidimensional Tagging

Feb 19, 2021

Figures and Tables:

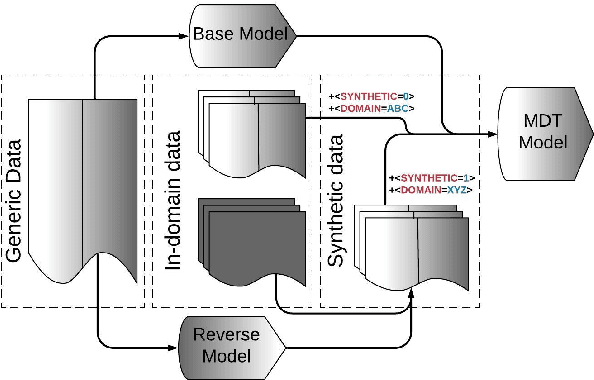

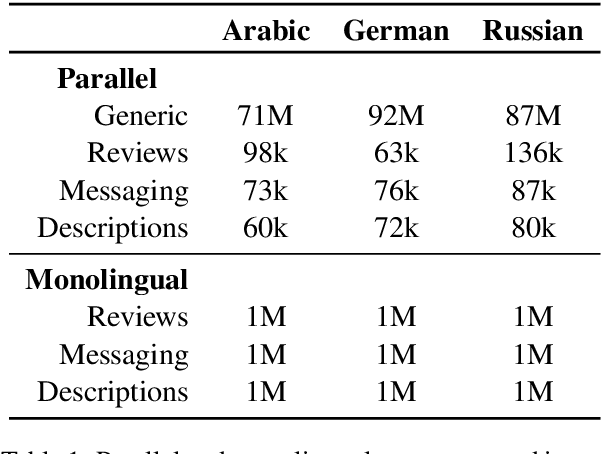

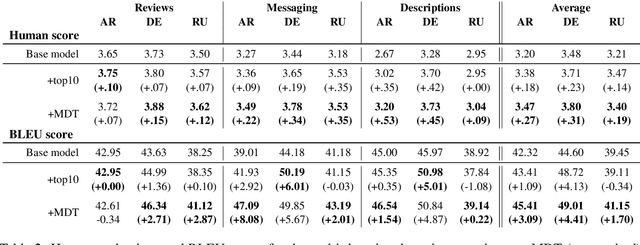

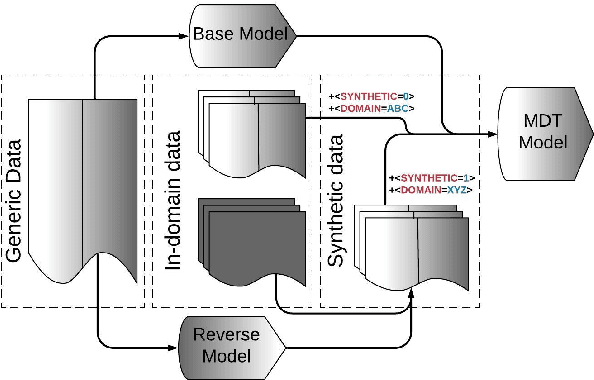

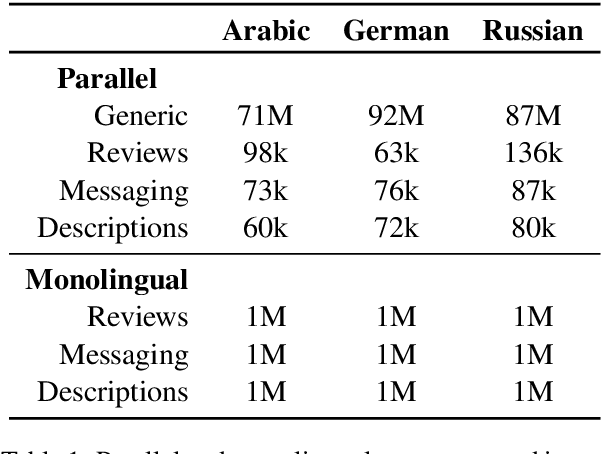

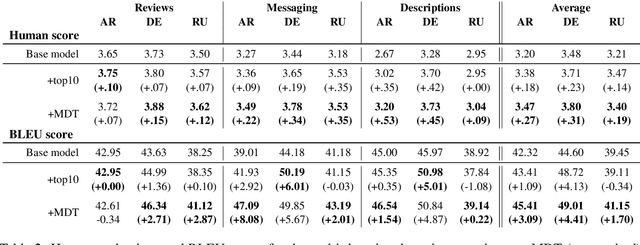

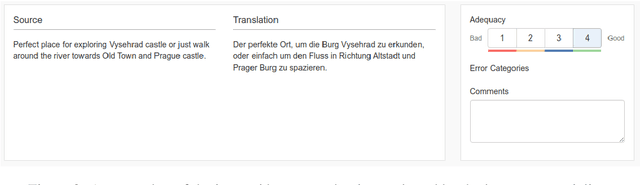

Abstract: Many modern Neural Machine Translation (NMT) systems are trained on nonhomogeneous datasets with several distinct dimensions of variation (e.g., domain, source, generation method, and style). We describe and empirically evaluate multidimensional tagging (MDT), a simple yet effective method for passing sentence-level information to the model. Our human and BLEU evaluation results show that MDT can be applied to the problem of multi-domain adaptation and can significantly reduce training costs without sacrificing translation quality on any of the constituent domains.
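To make "passing sentence-level information to the model" concrete, here is a minimal Python sketch of one common way to do it: prepending one tag token per dimension of variation to the source sentence. The abstract does not specify MDT's tag format, so the dimension names, the `<dimension=value>` token syntax, the `unk` placeholder for missing values, and the helper names `make_tags` and `tag_sentence` are all hypothetical assumptions for illustration, not the authors' implementation.

```python
from typing import Dict, List, Sequence

# Hypothetical dimension list, taken from the examples in the abstract.
# A fixed order keeps each dimension in the same position in every sentence.
DIMENSIONS: Sequence[str] = ("domain", "source", "generation", "style")


def make_tags(meta: Dict[str, str],
              dims: Sequence[str] = DIMENSIONS,
              unknown: str = "unk") -> List[str]:
    """Return one tag token per dimension; missing values become `unknown`."""
    return [f"<{d}={meta.get(d, unknown)}>" for d in dims]


def tag_sentence(src: str,
                 meta: Dict[str, str],
                 dims: Sequence[str] = DIMENSIONS) -> str:
    """Prepend the per-dimension tag tokens to a source sentence."""
    return " ".join(make_tags(meta, dims) + [src])


if __name__ == "__main__":
    # Only two of the four dimensions are known for this example sentence.
    print(tag_sentence("The room was clean.",
                       {"domain": "reviews", "style": "informal"}))
```

In a setup like this, the tag strings would usually be reserved as single vocabulary tokens so that a subword tokenizer does not split them; that detail is also an assumption rather than something stated in the abstract.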
