Sat Chatterjee

Circuit-Based Intrinsic Methods to Detect Overfitting

Jul 03, 2019

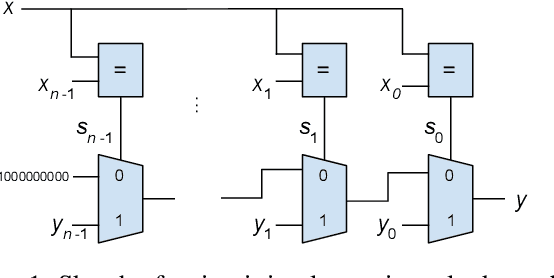

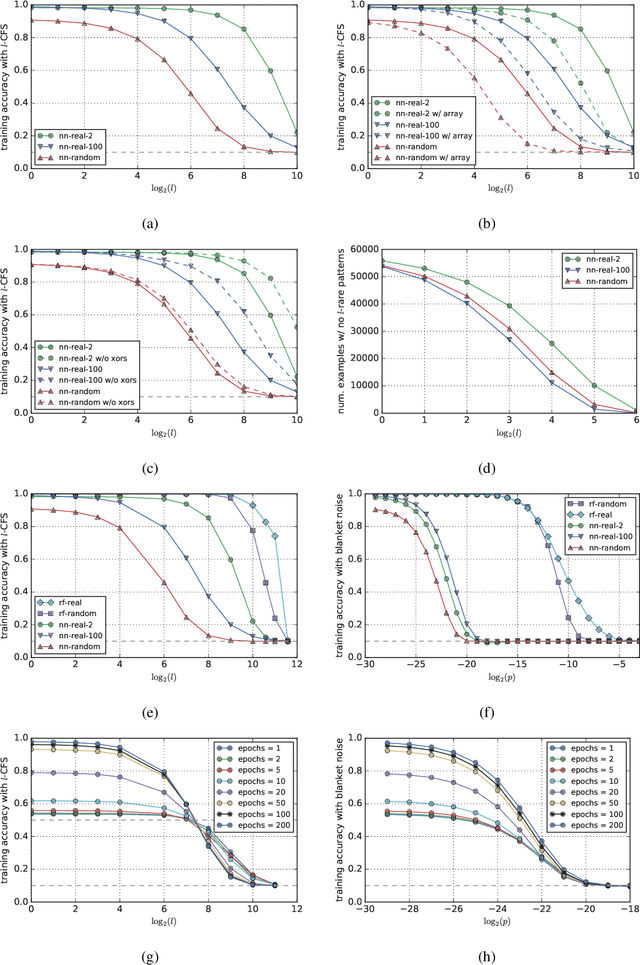

Abstract:The focus of this paper is on intrinsic methods to detect overfitting. These rely only on the model and the training data, as opposed to traditional extrinsic methods that rely on performance on a test set or on bounds from model complexity. We propose a family of intrinsic methods called Counterfactual Simulation (CFS) which analyze the flow of training examples through the model by identifying and perturbing rare patterns. By applying CFS to logic circuits we get a method that has no hyper-parameters and works uniformly across different types of models such as neural networks, random forests and lookup tables. Experimentally, CFS can separate models with different levels of overfit using only their logic circuit representations without any access to the high level structure. By comparing lookup tables, neural networks, and random forests using CFS, we get insight into why neural networks generalize. In particular, we find that stochastic gradient descent in neural nets does not lead to "brute force" memorization, but finds common patterns (whether we train with actual or randomized labels), and neural networks are not unlike forests in this regard. Finally, we identify a limitation with our proposal that makes it unsuitable in an adversarial setting, but points the way to future work on robust intrinsic methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge