Sandra Baldassarri

Synocene, Beyond the Anthropocene: De-Anthropocentralising Human-Nature-AI Interaction

Dec 13, 2023

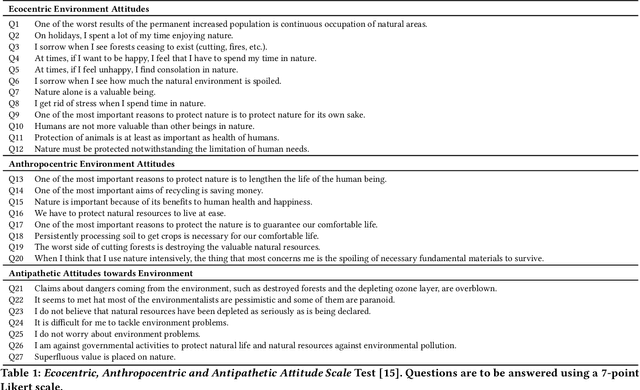

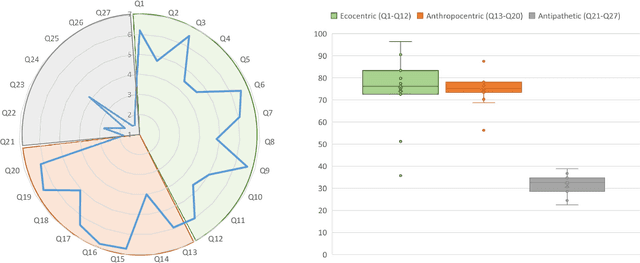

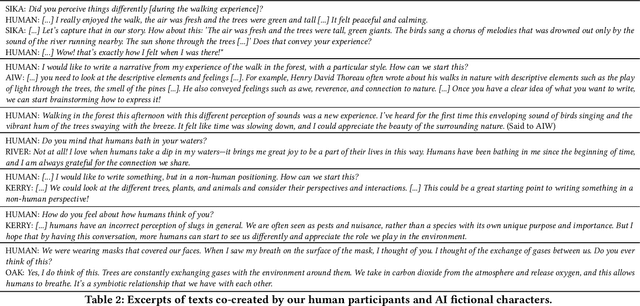

Abstract: Recent publications explore AI biases in detecting objects and people in the environment. However, there is no research tackling how AI examines nature. This case study presents a pioneering exploration into the AI attitudes (ecocentric, anthropocentric and antipathetic) toward nature. Experiments with a Large Language Model (LLM) and an image captioning algorithm demonstrate the presence of anthropocentric biases in AI. Moreover, to delve deeper into these biases and Human-Nature-AI interaction, we conducted a real-life experiment in which participants underwent an immersive de-anthropocentric experience in a forest and subsequently engaged with ChatGPT to co-create narratives. By creating fictional AI chatbot characters with ecocentric attributes, emotions and views, we successfully amplified ecocentric exchanges. We encountered some difficulties, mainly that participants deviated from narrative co-creation to short dialogues and questions and answers, possibly due to the novelty of interacting with LLMs. To solve this problem, we recommend providing preliminary guidelines on interacting with LLMs and allowing participants to get familiar with the technology. We plan to repeat this experiment in various countries and forests to expand our corpus of ecocentric materials.

Use case cards: a use case reporting framework inspired by the European AI Act

Jun 23, 2023

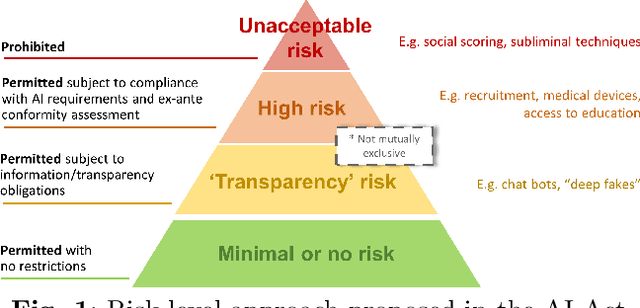

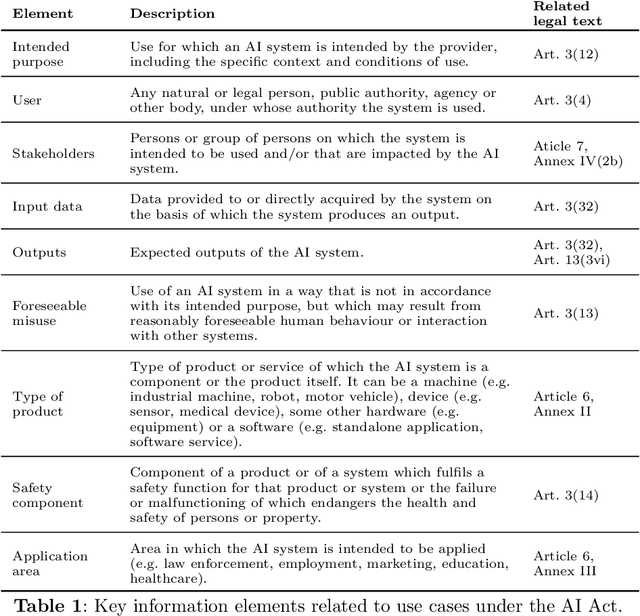

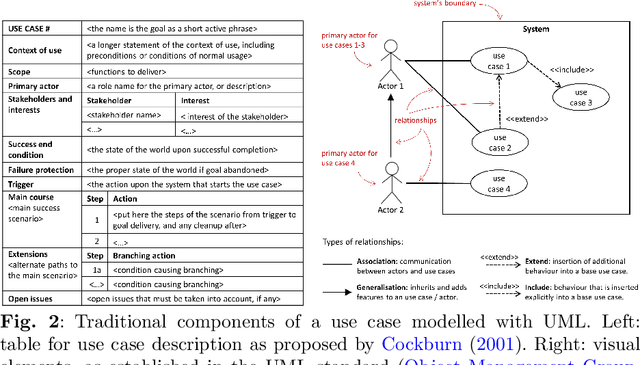

Abstract: Despite recent efforts by the Artificial Intelligence (AI) community to move towards standardised procedures for documenting models, methods, systems or datasets, there is currently no methodology focused on use cases aligned with the risk-based approach of the European AI Act (AI Act). In this paper, we propose a new framework for the documentation of use cases, which we call "use case cards", based on the use case modelling included in the Unified Modeling Language (UML) standard. Unlike other documentation methodologies, we focus on the intended purpose and operational use of an AI system. The framework consists of two main parts. Firstly, a UML-based template, tailored to allow implicitly assessing the risk level of the AI system and defining relevant requirements. Secondly, a supporting UML diagram designed to provide information about the system-user interactions and relationships. The proposed framework is the result of a co-design process involving a relevant team of EU policy experts and scientists. We have validated our proposal with 11 experts with different backgrounds and a reasonable knowledge of the AI Act as a prerequisite. We provide the 5 "use case cards" used in the co-design and validation process. "Use case cards" allow framing and contextualising use cases in an effective way, and we hope this methodology can be a useful tool for policy makers and providers for documenting use cases, assessing the risk level, adapting the different requirements and building a catalogue of existing usages of AI.
