Sami Khenissi

Debiasing the Cloze Task in Sequential Recommendation with Bidirectional Transformers

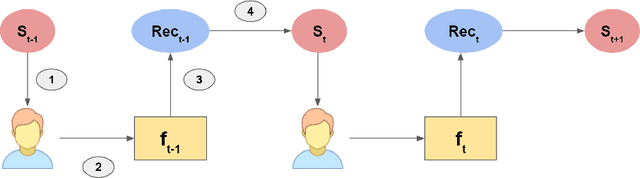

Jan 22, 2023Abstract:Bidirectional Transformer architectures are state-of-the-art sequential recommendation models that use a bi-directional representation capacity based on the Cloze task, a.k.a. Masked Language Modeling. The latter aims to predict randomly masked items within the sequence. Because they assume that the true interacted item is the most relevant one, an exposure bias results, where non-interacted items with low exposure propensities are assumed to be irrelevant. The most common approach to mitigating exposure bias in recommendation has been Inverse Propensity Scoring (IPS), which consists of down-weighting the interacted predictions in the loss function in proportion to their propensities of exposure, yielding a theoretically unbiased learning. In this work, we argue and prove that IPS does not extend to sequential recommendation because it fails to account for the temporal nature of the problem. We then propose a novel propensity scoring mechanism, which can theoretically debias the Cloze task in sequential recommendation. Finally we empirically demonstrate the debiasing capabilities of our proposed approach and its robustness to the severity of exposure bias.

* 10 pages, 3 figures, Accepted at KDD '22

Debiased Explainable Pairwise Ranking from Implicit Feedback

Jul 30, 2021

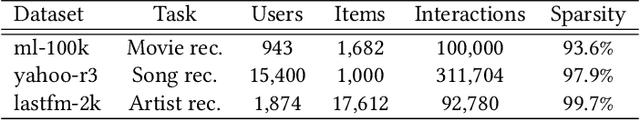

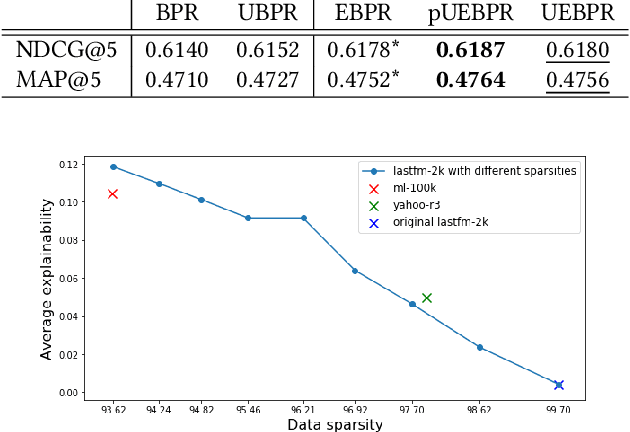

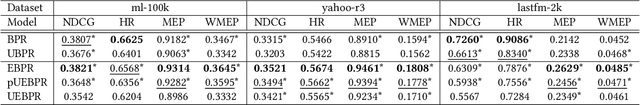

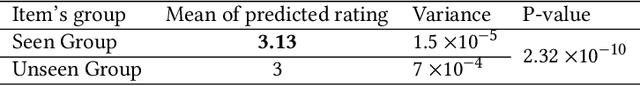

Abstract:Recent work in recommender systems has emphasized the importance of fairness, with a particular interest in bias and transparency, in addition to predictive accuracy. In this paper, we focus on the state of the art pairwise ranking model, Bayesian Personalized Ranking (BPR), which has previously been found to outperform pointwise models in predictive accuracy, while also being able to handle implicit feedback. Specifically, we address two limitations of BPR: (1) BPR is a black box model that does not explain its outputs, thus limiting the user's trust in the recommendations, and the analyst's ability to scrutinize a model's outputs; and (2) BPR is vulnerable to exposure bias due to the data being Missing Not At Random (MNAR). This exposure bias usually translates into an unfairness against the least popular items because they risk being under-exposed by the recommender system. In this work, we first propose a novel explainable loss function and a corresponding Matrix Factorization-based model called Explainable Bayesian Personalized Ranking (EBPR) that generates recommendations along with item-based explanations. Then, we theoretically quantify additional exposure bias resulting from the explainability, and use it as a basis to propose an unbiased estimator for the ideal EBPR loss. The result is a ranking model that aptly captures both debiased and explainable user preferences. Finally, we perform an empirical study on three real-world datasets that demonstrate the advantages of our proposed models.

* 11 pages, 2 figures, Accepted at RecSys '21

Theoretical Modeling of the Iterative Properties of User Discovery in a Collaborative Filtering Recommender System

Aug 21, 2020

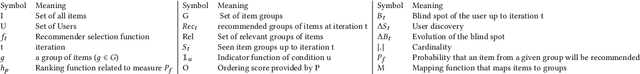

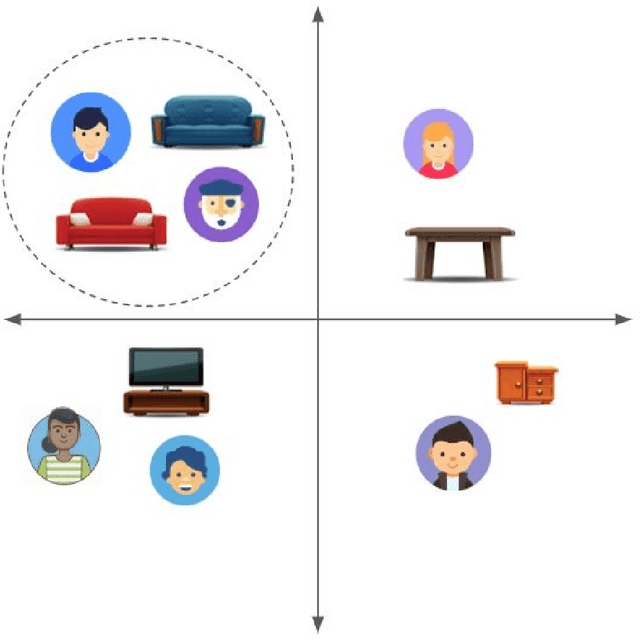

Abstract:The closed feedback loop in recommender systems is a common setting that can lead to different types of biases. Several studies have dealt with these biases by designing methods to mitigate their effect on the recommendations. However, most existing studies do not consider the iterative behavior of the system where the closed feedback loop plays a crucial role in incorporating different biases into several parts of the recommendation steps. We present a theoretical framework to model the asymptotic evolution of the different components of a recommender system operating within a feedback loop setting, and derive theoretical bounds and convergence properties on quantifiable measures of the user discovery and blind spots. We also validate our theoretical findings empirically using a real-life dataset and empirically test the efficiency of a basic exploration strategy within our theoretical framework. Our findings lay the theoretical basis for quantifying the effect of feedback loops and for designing Artificial Intelligence and machine learning algorithms that explicitly incorporate the iterative nature of feedback loops in the machine learning and recommendation process.

Modeling and Counteracting Exposure Bias in Recommender Systems

Jan 01, 2020

Abstract:What we discover and see online, and consequently our opinions and decisions, are becoming increasingly affected by automated machine learned predictions. Similarly, the predictive accuracy of learning machines heavily depends on the feedback data that we provide them. This mutual influence can lead to closed-loop interactions that may cause unknown biases which can be exacerbated after several iterations of machine learning predictions and user feedback. Machine-caused biases risk leading to undesirable social effects ranging from polarization to unfairness and filter bubbles. In this paper, we study the bias inherent in widely used recommendation strategies such as matrix factorization. Then we model the exposure that is borne from the interaction between the user and the recommender system and propose new debiasing strategies for these systems. Finally, we try to mitigate the recommendation system bias by engineering solutions for several state of the art recommender system models. Our results show that recommender systems are biased and depend on the prior exposure of the user. We also show that the studied bias iteratively decreases diversity in the output recommendations. Our debiasing method demonstrates the need for alternative recommendation strategies that take into account the exposure process in order to reduce bias. Our research findings show the importance of understanding the nature of and dealing with bias in machine learning models such as recommender systems that interact directly with humans, and are thus causing an increasing influence on human discovery and decision making

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge