Samad Sheikhaei

Local-to-Global Self-Supervised Representation Learning for Diabetic Retinopathy Grading

Oct 01, 2024Abstract:Artificial intelligence algorithms have demonstrated their image classification and segmentation ability in the past decade. However, artificial intelligence algorithms perform less for actual clinical data than those used for simulations. This research aims to present a novel hybrid learning model using self-supervised learning and knowledge distillation, which can achieve sufficient generalization and robustness. The self-attention mechanism and tokens employed in ViT, besides the local-to-global learning approach used in the hybrid model, enable the proposed algorithm to extract a high-dimensional and high-quality feature space from images. To demonstrate the proposed neural network's capability in classifying and extracting feature spaces from medical images, we use it on a dataset of Diabetic Retinopathy images, specifically the EyePACS dataset. This dataset is more complex structurally and challenging regarding damaged areas than other medical images. For the first time in this study, self-supervised learning and knowledge distillation are used to classify this dataset. In our algorithm, for the first time among all self-supervised learning and knowledge distillation models, the test dataset is 50% larger than the training dataset. Unlike many studies, we have not removed any images from the dataset. Finally, our algorithm achieved an accuracy of 79.1% in the linear classifier and 74.36% in the k-NN algorithm for multiclass classification. Compared to a similar state-of-the-art model, our results achieved higher accuracy and more effective representation spaces.

Control of computer pointer using hand gesture recognition in motion pictures

Dec 24, 2020

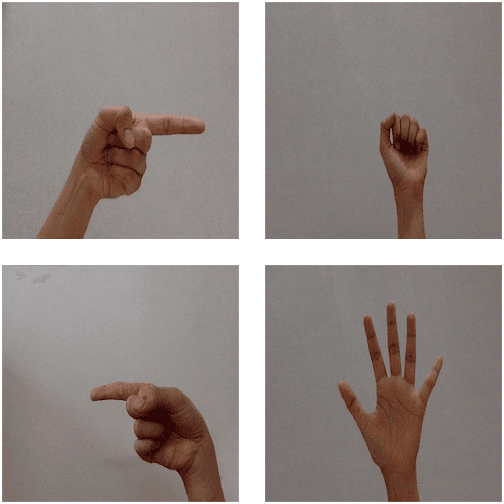

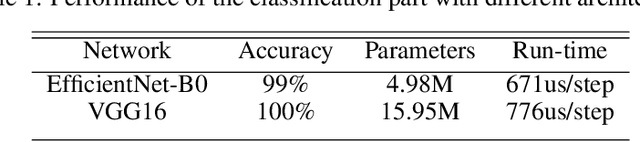

Abstract:A user interface is designed to control the computer cursor by hand detection and classification of its gesture. A hand dataset with 6720 image samples is collected, including four classes: fist, palm, pointing to the left, and pointing to the right. The images are captured from 15 persons in simple backgrounds and different perspectives and light conditions. A CNN network is trained on this dataset to predict a label for each captured image and measure the similarity of them. Finally, commands are defined to click, right-click and move the cursor. The algorithm has 91.88% accuracy and can be used in different backgrounds.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge