Sam Nolen

A Study on Encodings for Neural Architecture Search

Jul 09, 2020

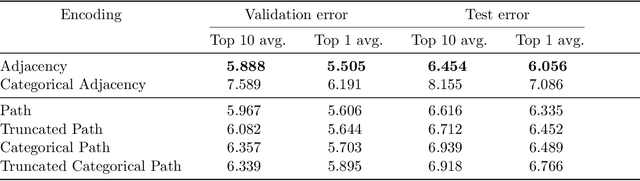

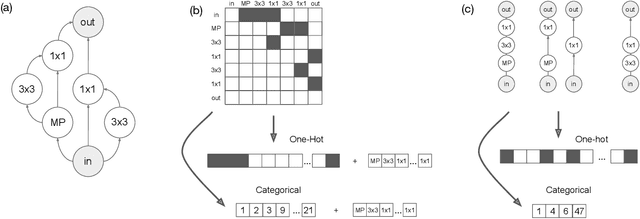

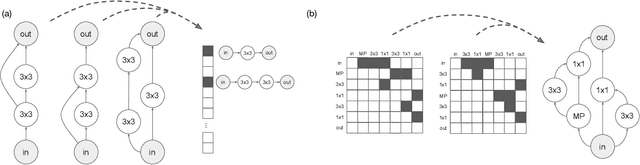

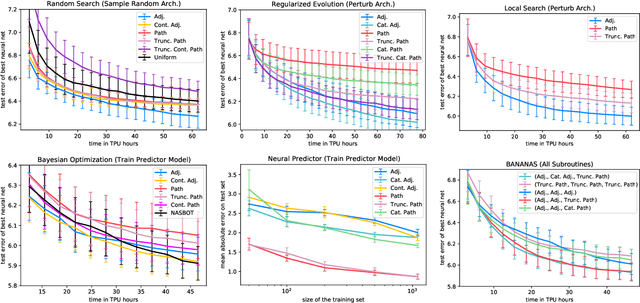

Abstract: Neural architecture search (NAS) has been extensively studied in the past few years. A popular approach is to represent each neural architecture in the search space as a directed acyclic graph (DAG), and then search over all DAGs by encoding the adjacency matrix and list of operations as a set of hyperparameters. Recent work has demonstrated that even small changes to the way each architecture is encoded can have a significant effect on the performance of NAS algorithms. In this work, we present the first formal study on the effect of architecture encodings for NAS, including a theoretical grounding and an empirical study. First, we formally define architecture encodings and give a theoretical characterization of the scalability of the encodings we study. Then we identify the main encoding-dependent subroutines which NAS algorithms employ, running experiments to show which encodings work best with each subroutine for many popular algorithms. The experiments act as an ablation study for prior work, disentangling the algorithmic and encoding-based contributions, as well as a guideline for future work. Our results demonstrate that NAS encodings are an important design decision which can have a significant impact on overall performance. Our code is available at https://github.com/naszilla/nas-encodings.
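
To make the idea of "encoding the adjacency matrix and list of operations" concrete, here is a minimal sketch of one such encoding: the upper triangle of the DAG's adjacency matrix is flattened into bits, followed by a one-hot vector per intermediate node's operation. The operation vocabulary `OPS`, the function name `encode_adjacency`, and the 4-node example cell are assumptions made for illustration; they are not taken from the paper or its repository.

```python
from itertools import combinations

# Hypothetical operation vocabulary, chosen only for this illustration.
OPS = ["conv3x3", "conv1x1", "maxpool3x3"]


def encode_adjacency(adj, ops):
    """Flatten a DAG cell into a fixed-length list of 0/1 values.

    adj: square 0/1 matrix where adj[i][j] == 1 means an edge i -> j.
         Nodes are assumed to be topologically ordered, so only the
         upper triangle (i < j) can contain edges.
    ops: operation name for each intermediate node (input and output
         nodes carry no operation in this sketch).
    """
    n = len(adj)
    # One bit per possible edge, in row-major order over the upper triangle.
    edge_bits = [adj[i][j] for i, j in combinations(range(n), 2)]
    # One-hot encoding of each intermediate node's operation.
    op_bits = []
    for op in ops:
        op_bits.extend(1 if op == candidate else 0 for candidate in OPS)
    return edge_bits + op_bits


# Example cell: input -> {node 1, node 2} -> output.
example_adj = [
    [0, 1, 1, 0],
    [0, 0, 0, 1],
    [0, 0, 0, 1],
    [0, 0, 0, 0],
]
example_ops = ["conv3x3", "maxpool3x3"]
example_encoding = encode_adjacency(example_adj, example_ops)
```

For a cell with n nodes, this layout uses n(n-1)/2 edge bits plus one one-hot block per intermediate node, so the encoding length grows quadratically in the number of nodes.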

Local Search is State of the Art for NAS Benchmarks

May 06, 2020

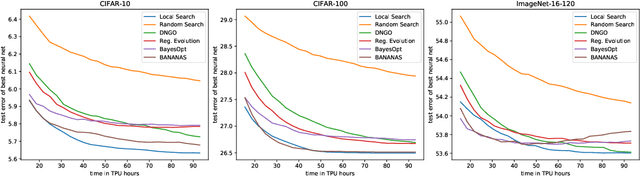

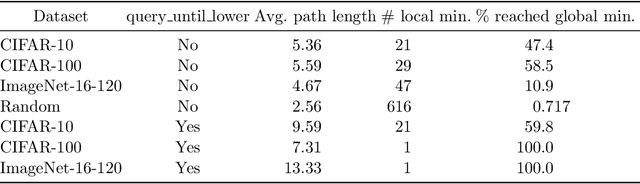

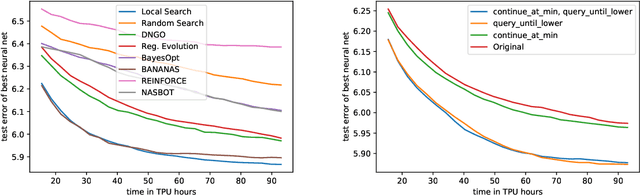

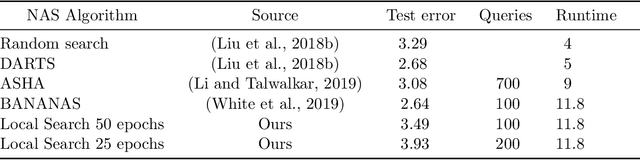

Abstract: Local search is one of the simplest families of algorithms in combinatorial optimization, yet it yields strong approximation guarantees for canonical NP-complete problems such as the traveling salesman problem and vertex cover. While it is a ubiquitous algorithm in theoretical computer science, local search has been widely neglected in hyperparameter optimization, and has never been used to perform neural architecture search (NAS). We show that the simplest local search instantiations achieve state-of-the-art results on the most popular existing NAS benchmarks (NASBench-101 and NASBench-201). For example, on CIFAR-100 with the NASBench-201 search space, local search reaches the global optimum after training just 127 architectures on average, outperforming many popular NAS algorithms. However, local search fails to perform well on the much larger DARTS search space. We present a thorough theoretical and empirical study, explaining the success of local search on smaller, structured search spaces.
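
As a rough illustration of what a simple local search instantiation can look like, the sketch below runs first-improvement hill climbing over a toy search space. Everything here is an assumption for demonstration: architectures are tuples of operation indices, a neighbor differs in exactly one position, and `toy_score` is a stand-in for validation accuracy (in a real NAS setting each call would correspond to training an architecture or querying a benchmark). It is not the paper's implementation.

```python
def neighbors(arch, num_ops=3):
    """Yield every architecture that differs from `arch` in exactly one position."""
    for i, op in enumerate(arch):
        for new_op in range(num_ops):
            if new_op != op:
                yield arch[:i] + (new_op,) + arch[i + 1:]


def local_search(start, score, max_evals=1000):
    """First-improvement hill climbing.

    Moves to the first neighbor that scores strictly higher than the
    current architecture, and stops when no neighbor improves or the
    evaluation budget is spent. Returns (architecture, score, evaluations).
    """
    current, best = start, score(start)
    evals = 1
    improved = True
    while improved and evals < max_evals:
        improved = False
        for candidate in neighbors(current):
            candidate_score = score(candidate)
            evals += 1
            if candidate_score > best:
                current, best = candidate, candidate_score
                improved = True
                break
            if evals >= max_evals:
                break
    return current, best, evals


# Toy objective (hypothetical): negative Hamming distance to a hidden target.
# It has a single local optimum, so hill climbing is guaranteed to find it.
TARGET = (2, 0, 1, 1)


def toy_score(arch):
    return -sum(a != t for a, t in zip(arch, TARGET))


found, found_score, num_evals = local_search((0, 0, 0, 0), toy_score)
```

The toy objective is deliberately unimodal; on a landscape with many local optima the same loop can stop at a suboptimal architecture, which is one way to think about why search-space structure matters for this method.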
