Salim Rukhsar

ARNN: Attentive Recurrent Neural Network for Multi-channel EEG Signals to Identify Epileptic Seizures

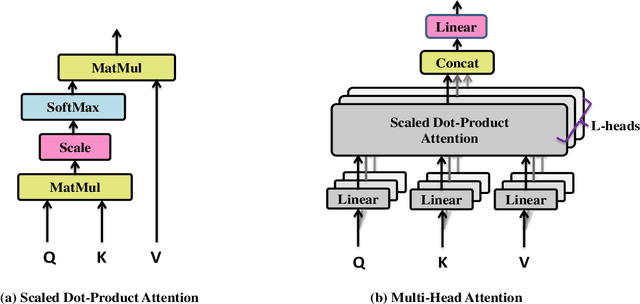

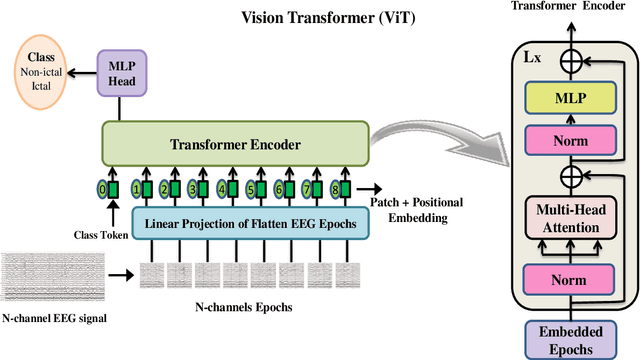

Mar 05, 2024

Abstract: We propose an Attentive Recurrent Neural Network (ARNN), which recurrently applies attention layers along a sequence and has linear complexity with respect to the sequence length. The proposed model operates on multi-channel EEG signals rather than single-channel signals and leverages parallel computation. In its recurrent cell, the attention layer is a computational unit that efficiently applies self-attention and cross-attention mechanisms to compute a recurrent function over a large number of state vectors and input signals. The architecture is inspired in part by the attention layer and long short-term memory (LSTM) cells, and it uses LSTM-style gates, but it scales this typical cell up by several orders of magnitude to parallelize over multi-channel EEG signals. It inherits the advantages of attention layers and LSTM gates while avoiding their respective drawbacks. We evaluated the model's effectiveness through extensive experiments on heterogeneous datasets, including the CHB-MIT and the UPenn and Mayo Clinic datasets. The empirical findings suggest that the ARNN model outperforms baseline methods such as LSTM, Vision Transformer (ViT), Compact Convolution Transformer (CCT), and R-Transformer (RT), showing superior performance and faster processing across a wide range of tasks. The code has been made publicly accessible at https://github.com/Salim-Lysiun/ARNN.
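The recurrence described in this abstract can be illustrated with a minimal pure-Python sketch. It is not the authors' implementation (the linked repository holds that); the chunking scheme, the names `attentive_recurrence`, `attend` and `gated_update`, and the scalar gate parameters `w_state`, `w_cand` and `forget_bias` are hypothetical stand-ins for learned projections. A T x C EEG window is split into fixed-size chunks: each chunk attends to the current state vectors and to itself, the state cross-attends to the chunk, and the state is then updated through an LSTM-style gate. With S state vectors and chunk length k, each step costs O(k(S + k)), so total work grows linearly with T.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attend(queries: Matrix, keys: Matrix, values: Matrix) -> Matrix:
    """Scaled dot-product attention without learned projections (illustration only)."""
    scale = 1.0 / math.sqrt(len(keys[0]))
    out = []
    for q in queries:
        weights = softmax([scale * sum(a * b for a, b in zip(q, k)) for k in keys])
        out.append([sum(w * v[c] for w, v in zip(weights, values))
                    for c in range(len(values[0]))])
    return out


def sigmoid(x: float) -> float:
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)  # avoids overflow for large negative x
    return e / (1.0 + e)


def gated_update(old: float, cand: float, w_state: float, w_cand: float,
                 bias: float) -> float:
    """Hypothetical LSTM-style gate: g near 1 keeps the old state, near 0 takes the candidate."""
    g = sigmoid(w_state * old + w_cand * cand + bias)
    return g * old + (1.0 - g) * cand


def attentive_recurrence(signal: Matrix, state: Matrix, chunk: int,
                         w_state: float = 1.0, w_cand: float = 1.0,
                         forget_bias: float = 1.0) -> Tuple[Matrix, Matrix]:
    """Process a T x C signal (time steps x EEG channels) with S x C state vectors.

    Each chunk costs O(chunk * (S + chunk)), so total work is linear in T.
    """
    outputs: Matrix = []
    for start in range(0, len(signal), chunk):
        x = signal[start:start + chunk]
        # Self-attention over the chunk plus cross-attention to the state,
        # done as one attention call over the concatenated context.
        context = state + x
        outputs.extend(attend(x, context, context))
        # The state cross-attends to the chunk to form a candidate update.
        candidate = attend(state, x, x)
        state = [[gated_update(s, c, w_state, w_cand, forget_bias)
                  for s, c in zip(s_row, c_row)]
                 for s_row, c_row in zip(state, candidate)]
    return outputs, state


# Toy usage: a 10-step, 2-channel signal with two state vectors.
signal = [[float(t % 3), 1.0] for t in range(10)]
init_state = [[0.0, 0.0], [1.0, 1.0]]
outputs, final_state = attentive_recurrence(signal, init_state, chunk=4)
```

The chunk length is the knob in this sketch: tokens inside a chunk are processed in parallel, while only the state is carried sequentially from chunk to chunk.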

Lightweight Convolution Transformer for Cross-patient Seizure Detection in Multi-channel EEG Signals

May 07, 2023

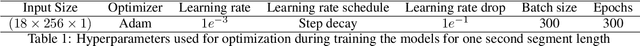

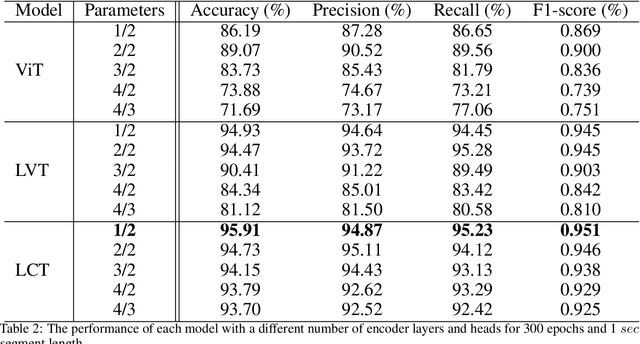

Abstract: Background: Epilepsy is a neurological disorder of the brain that makes people more likely to experience frequent, spontaneous seizures. An accurate automated method for measuring seizure frequency and severity is needed to assess the efficacy of pharmacological therapy for epilepsy. Drug dosages are often derived from patient reports, which can cause significant problems owing to incomplete or inaccurate descriptions of seizures and their frequencies.

Methods and materials: This study proposes a novel deep learning architecture based on a lightweight convolution transformer (LCT). The transformer learns spatially and temporally correlated information simultaneously from the multi-channel electroencephalogram (EEG) signal to detect seizures at short segment lengths. In the proposed model, ViT's lack of translation equivariance and locality is mitigated by convolutional tokenization, and rich information from the transformer encoder is extracted by sequence pooling instead of a learnable class token.

Results: Extensive experimental results demonstrate that the proposed cross-patient model can effectively detect seizures from raw EEG signals. On the CHB-MIT dataset, the cross-patient seizure detection accuracy and F1-score are 96.31% and 96.32%, respectively, at a 0.5 s segment length. In addition, the performance metrics show that including inductive biases and attention-based pooling in the model improves performance and reduces the number of transformer encoder layers, which significantly reduces computational complexity. This work provides a novel approach to improving efficiency and simplifying the architecture for multi-channel automated seizure detection.
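The two components named in the Methods paragraph can be sketched in a few lines of pure Python: a convolutional tokenizer that turns a T x C EEG window into tokens, and attention-based sequence pooling in the style of the Compact Convolutional Transformer, which scores each token with a linear map, applies a softmax over tokens, and returns the weighted sum in place of a class token. This is a minimal sketch under stated assumptions, not the LCT authors' code: the names `conv_tokenize` and `seq_pool`, the kernel layout (D filters of K x C taps), the stride, and all weights are hypothetical, and the transformer encoder layers that would sit between the two steps are omitted.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def conv_tokenize(eeg: Matrix, kernels: List[Matrix], stride: int) -> Matrix:
    """Strided 1-D convolution over time followed by ReLU.

    eeg: T x C (time steps x channels); kernels: D hypothetical filters, each K x C.
    Returns N x D tokens, one per kernel position.
    """
    k = len(kernels[0])
    tokens = []
    for start in range(0, len(eeg) - k + 1, stride):
        window = eeg[start:start + k]
        token = []
        for kern in kernels:
            acc = sum(w * x for w_row, x_row in zip(kern, window)
                      for w, x in zip(w_row, x_row))
            token.append(max(0.0, acc))  # ReLU
        tokens.append(token)
    return tokens


def seq_pool(tokens: Matrix, w: List[float], bias: float = 0.0) -> List[float]:
    """Score each token with a linear map d -> 1, softmax over tokens, return the weighted sum."""
    weights = softmax([sum(a * b for a, b in zip(tok, w)) + bias for tok in tokens])
    return [sum(a * tok[c] for a, tok in zip(weights, tokens))
            for c in range(len(tokens[0]))]


# Toy usage: 8 time steps, 2 channels; one filter per channel, kernel length 2, stride 2.
eeg = [[float(t), 1.0] for t in range(8)]
kernels = [[[1.0, 0.0], [1.0, 0.0]], [[0.0, 1.0], [0.0, 1.0]]]
tokens = conv_tokenize(eeg, kernels, stride=2)  # [[1.0, 2.0], [5.0, 2.0], [9.0, 2.0], [13.0, 2.0]]
pooled = seq_pool(tokens, w=[0.0, 0.0])         # uniform weights -> token mean [7.0, 2.0]
```

With zero scoring weights the pooling reduces to a plain mean over tokens; a learned scoring vector lets the model emphasize the tokens most relevant to the seizure decision instead.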
