S. A. Rizvi

Local Contrastive Learning for Medical Image Recognition

Mar 24, 2023

Abstract: The proliferation of Deep Learning (DL)-based methods for radiographic image analysis has created a great demand for expert-labeled radiology data. Recent self-supervised frameworks have alleviated the need for expert labeling by obtaining supervision from associated radiology reports. These frameworks, however, struggle to distinguish the subtle differences between different pathologies in medical images. Additionally, many of them do not provide interpretation between image regions and text, making it difficult for radiologists to assess model predictions. In this work, we propose Local Region Contrastive Learning (LRCLR), a flexible fine-tuning framework that adds layers for significant image region selection as well as cross-modality interaction. Our results on an external validation set of chest x-rays suggest that LRCLR identifies significant local image regions and provides meaningful interpretation against radiology text while improving zero-shot performance on several chest x-ray medical findings.

Histopathology DatasetGAN: Synthesizing Large-Resolution Histopathology Datasets

Jul 06, 2022

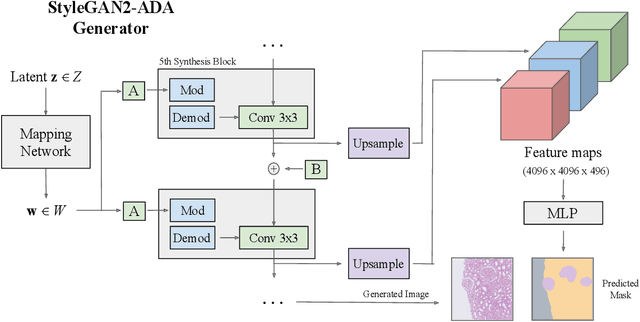

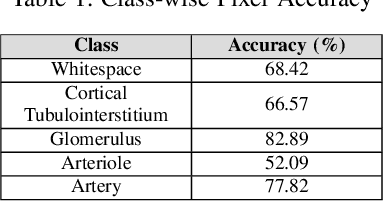

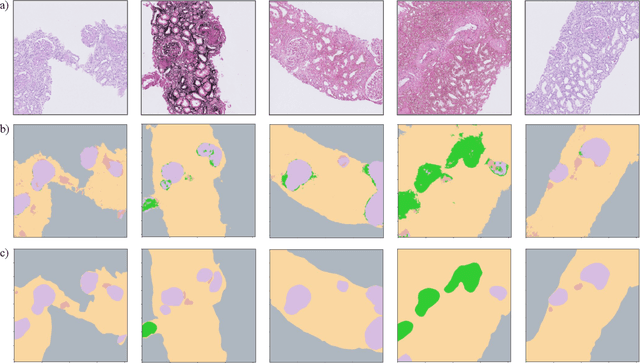

Abstract: Self-supervised learning (SSL) methods are enabling an increasing number of deep learning models to be trained on image datasets in domains where labels are difficult to obtain. These methods, however, struggle to scale to the high resolution of medical imaging datasets, where they are critical for achieving good generalization because labels are scarce. In this work, we propose the Histopathology DatasetGAN (HDGAN) framework, an extension of the DatasetGAN semi-supervised framework for image generation and segmentation that scales well to large-resolution histopathology images. We make several adaptations to the original framework, including updating the generative backbone, selectively extracting latent features from the generator, and switching to memory-mapped arrays. These changes reduce the memory consumption of the framework, improving its applicability to medical imaging domains. We evaluate HDGAN on a high-resolution thrombotic microangiopathy tile dataset, demonstrating strong performance on the high-resolution image-annotation generation task. We hope that this work enables broader application of deep learning models to medical datasets and encourages further exploration of self-supervised frameworks within the medical imaging domain.
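The abstract mentions switching to memory-mapped arrays to reduce memory consumption. As a rough illustration of that general idea only, and not the HDGAN implementation, the sketch below uses NumPy to write per-image feature arrays to a disk-backed `.npy` file and read them back one batch at a time. The function names, the `make_features` callback, and the feature shapes are hypothetical and chosen for illustration.

```python
import numpy as np


def write_features_memmap(path, n_images, feat_shape, make_features, dtype=np.float16):
    """Write one feature array per image into a disk-backed .npy file.

    `make_features(i)` is a hypothetical callback that returns the features
    for image i with shape `feat_shape`. Only one image's features need to
    be held in RAM at a time.
    """
    store = np.lib.format.open_memmap(
        path, mode="w+", dtype=dtype, shape=(n_images, *feat_shape)
    )
    for i in range(n_images):
        store[i] = make_features(i)  # written through to the mapped file
    store.flush()
    del store  # release the mapping
    return path


def iter_batches(path, batch_size):
    """Yield batches from the file, copying only the current batch into RAM."""
    store = np.load(path, mmap_mode="r")  # maps the file instead of loading it whole
    for start in range(0, store.shape[0], batch_size):
        yield np.array(store[start:start + batch_size])
```

With this layout, peak memory is governed by the batch size rather than by the total number of stored features, which is the kind of saving that matters when features are extracted at high resolution.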
