Sören Christensen

Beyond Fixed Horizons: A Theoretical Framework for Adaptive Denoising Diffusions

Jan 31, 2025

Abstract: We introduce a new class of generative diffusion models that, unlike conventional denoising diffusion models, achieve a time-homogeneous structure for both the noising and denoising processes, allowing the number of steps to adaptively adjust based on the noise level. This is accomplished by conditioning the forward process using Doob's $h$-transform, which terminates the process at a suitable sampling distribution at a random time. The model is particularly well suited for generating data with lower intrinsic dimensions, as the termination criterion simplifies to a first-hitting rule. A key feature of the model is its adaptability to the target data, enabling a variety of downstream tasks using a pre-trained unconditional generative model. These tasks include natural conditioning through appropriate initialization of the denoising process and classification of noisy data.

Learning to steer with Brownian noise

Oct 04, 2024

Abstract: This paper considers an ergodic version of the bounded velocity follower problem, assuming that the decision maker lacks knowledge of the underlying system parameters and must learn them while simultaneously controlling. We propose algorithms based on moving empirical averages and develop a framework for integrating statistical methods with stochastic control theory. Our primary result is a logarithmic expected regret rate. To achieve this, we conduct a rigorous analysis of the ergodic convergence rates of the underlying processes and the risks of the considered estimators.

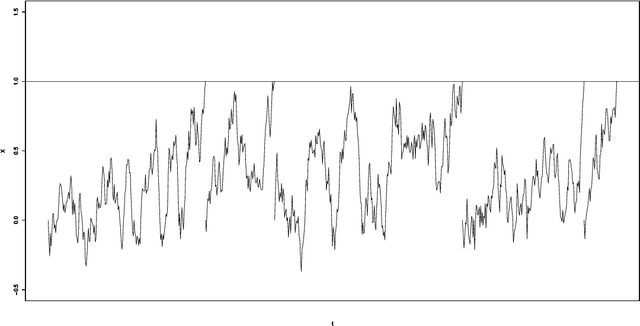

Data-driven optimal stopping: A pure exploration analysis

Dec 10, 2023

Abstract: The standard theory of optimal stopping is based on the idealised assumption that the underlying process is essentially known. In this paper, we drop this restriction and study data-driven optimal stopping for a general diffusion process, focusing on investigating the statistical performance of the proposed estimator of the optimal stopping barrier. More specifically, we derive non-asymptotic upper bounds on the simple regret, along with uniform and non-asymptotic PAC bounds. Minimax optimality is verified by completing the upper bound results with matching lower bounds on the simple regret. All results are shown both under general conditions on the payoff functions and under more refined assumptions that mimic the margin condition used in binary classification, leading to an improved rate of convergence. Additionally, we investigate how our results on the simple regret transfer to the cumulative regret for a specific exploration-exploitation strategy, both with respect to lower bounds and upper bounds.

Data-driven rules for multidimensional reflection problems

Nov 11, 2023

Abstract: Over the recent past, data-driven algorithms for solving stochastic optimal control problems in the face of model uncertainty have become an increasingly active area of research. However, for singular controls and underlying diffusion dynamics, the analysis has so far been restricted to the scalar case. In this paper we fill this gap by studying a multivariate singular control problem for reversible diffusions with controls of reflection type. Our contributions are threefold. We first explicitly determine the long-run average costs as a domain-dependent functional, showing that the control problem can be equivalently characterized as a shape optimization problem. For given diffusion dynamics, assuming the optimal domain to be strongly star-shaped, we then propose a gradient descent algorithm based on polytope approximations to numerically determine a cost-minimizing domain. Finally, we investigate data-driven solutions when the diffusion dynamics are unknown to the controller. Using techniques from nonparametric statistics for stochastic processes, we construct an optimal domain estimator, whose static regret is bounded by the minimax optimal estimation rate of the unreflected process' invariant density. In the most challenging situation, when the dynamics must be learned while simultaneously controlling the process, we develop an episodic learning algorithm to overcome the emerging exploration-exploitation dilemma and show that, given the static regret as a baseline, the loss in its sublinear regret per time unit is of natural order compared to the one-dimensional case.

Is Learning in Biological Neural Networks based on Stochastic Gradient Descent? An analysis using stochastic processes

Sep 10, 2023

Abstract: In recent years, there has been an intense debate about how learning in biological neural networks (BNNs) differs from learning in artificial neural networks. It is often argued that the updating of connections in the brain relies only on local information, and therefore a stochastic gradient-descent type optimization method cannot be used. In this paper, we study a stochastic model for supervised learning in BNNs. We show that a (continuous) gradient step occurs approximately when each learning opportunity is processed by many local updates. This result suggests that stochastic gradient descent may indeed play a role in optimizing BNNs.

Learning to reflect: A unifying approach for data-driven stochastic control strategies

Apr 23, 2021

Abstract: Stochastic optimal control problems have a long tradition in applied probability, with the questions addressed being of high relevance in a multitude of fields. Even though theoretical solutions are well understood in many scenarios, their practicability suffers from the assumption of known dynamics of the underlying stochastic process, raising the statistical challenge of developing purely data-driven strategies. For the mathematically separated classes of continuous diffusion processes and Lévy processes, we show that developing efficient strategies for related singular stochastic control problems can essentially be reduced to finding rate-optimal estimators with respect to the sup-norm risk of objects associated to the invariant distribution of ergodic processes which determine the theoretical solution of the control problem. From a statistical perspective, we exploit the exponential $\beta$-mixing property as the common factor of both scenarios to drive the convergence analysis, indicating that relying on general stability properties of Markov processes is a sufficiently powerful and flexible approach to treat complex applications requiring statistical methods. We show moreover that in the Lévy case (even though per se jump processes are more difficult to handle both in statistics and control theory) a fully data-driven strategy with regret of significantly better order than in the diffusion case can be constructed.

Nonparametric learning for impulse control problems

Sep 20, 2019

Abstract: One of the fundamental assumptions in stochastic control of continuous time processes is that the dynamics of the underlying (diffusion) process are known. This is, however, usually not fulfilled in practice. On the other hand, over the last decades, a rich theory for nonparametric estimation of the drift (and volatility) for continuous time processes has been developed. The aim of this paper is to bring together techniques from stochastic control with methods from statistics for stochastic processes to find a way to both learn the dynamics of the underlying process and control in a reasonable way at the same time. More precisely, we study a long-term average impulse control problem, a stochastic version of the classical Faustmann timber harvesting problem. One of the problems that immediately arises is an exploration vs. exploitation trade-off, as is well known for problems in machine learning. We propose a way to deal with this issue by combining exploration and exploitation periods in a suitable way. Our main finding is that this construction can be based on the rates of convergence of estimators for the invariant density. Using this, we obtain that the average cumulated regret is of uniform order $O(T^{-1/3})$.

Classification error in multiclass discrimination from Markov data

Sep 22, 2015

Abstract: As a model for an on-line classification setting we consider a stochastic process $(X_{-n},Y_{-n})_{n}$, the present time-point being denoted by 0, with observables $\ldots,X_{-n},X_{-n+1},\ldots,X_{-1},X_0$ from which the pattern $Y_0$ is to be inferred. So in this classification setting, in addition to the present observation $X_0$, a number $l$ of preceding observations may be used for classification, thus taking a possible dependence structure into account as it occurs, e.g., in an ongoing classification of handwritten characters. We treat the question of how the performance of classifiers is improved by using such additional information. For our analysis, a hidden Markov model is used. Letting $R_l$ denote the minimal risk of misclassification using $l$ preceding observations, we show that the difference $\sup_k |R_l - R_{l+k}|$ decreases exponentially fast as $l$ increases. This suggests that a small $l$ might already lead to a noticeable improvement. To pursue this point, we look at the use of past observations for kernel classification rules. Our practical findings in simulated hidden Markov models and in the classification of handwritten characters indicate that using $l=1$, i.e. just the last preceding observation in addition to $X_0$, can lead to a substantial reduction of the risk of misclassification. So, in the presence of stochastic dependencies, we advocate using $X_{-1},X_0$ for finding the pattern $Y_0$ instead of only $X_0$ as one would in the independent situation.