Rohan S. Kumar

Generative Modeling with Quantum Neurons

Feb 01, 2023

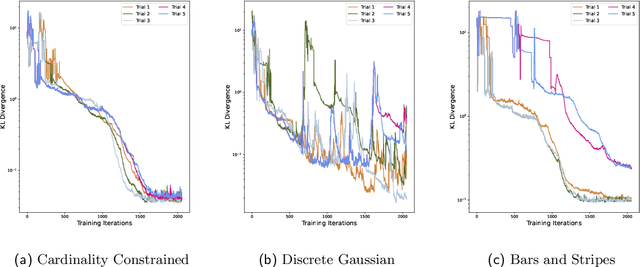

Abstract: The recently proposed Quantum Neuron Born Machine (QNBM) has demonstrated promising initial performance as the first quantum generative machine learning (ML) model with non-linear activations. However, previous investigations have been limited in scope with regard to the model's learnability and simulatability. In this work, we take a considerable step forward by providing an extensive investigation of the QNBM's potential as a generative model. We first demonstrate that the QNBM's network representation makes it non-trivial to simulate efficiently on classical hardware. Following this result, we showcase the model's ability to learn (express and train on) a wider set of probability distributions, and benchmark its performance against a classical Restricted Boltzmann Machine (RBM). The QNBM outperforms this classical model on all distributions, even when compared against the best-trained RBM among our simulations. Specifically, the QNBM outperforms the RBM by factors of 75.3x, 6.4x, and 3.5x on the discrete Gaussian, cardinality-constrained, and Bars and Stripes distributions, respectively. Lastly, we conduct an initial investigation into the model's generalization capabilities and use a KL test to show that the model is able to approximate the ground truth probability distribution more closely than the training distribution when given access to a limited amount of data. Overall, we put forth a stronger case in support of using the QNBM for larger-scale generative tasks.
