Rohan Deepak Ajwani

E-CARE: An Efficient LLM-based Commonsense-Augmented Framework for E-Commerce

Nov 06, 2025

Abstract: Finding relevant products given a user query plays a pivotal role in an e-commerce platform, as it can spark shopping behaviors and result in revenue gains. The challenge lies in accurately predicting the correlation between queries and products. Recently, mining the cross-features between queries and products based on the commonsense reasoning capacity of Large Language Models (LLMs) has shown promising performance. However, such methods suffer from high costs due to intensive real-time LLM inference during serving, as well as human annotations and potential Supervised Fine Tuning (SFT). To boost efficiency while leveraging the commonsense reasoning capacity of LLMs for various e-commerce tasks, we propose the Efficient Commonsense-Augmented Recommendation Enhancer (E-CARE). During inference, models augmented with E-CARE can access commonsense reasoning with only a single LLM forward pass per query by utilizing a commonsense reasoning factor graph that encodes most of the reasoning schema from powerful LLMs. The experiments on 2 downstream tasks show an improvement of up to 12.1% on precision@5.

Reasoning on a Budget: A Survey of Adaptive and Controllable Test-Time Compute in LLMs

Jul 02, 2025

Abstract: Large language models (LLMs) have rapidly progressed into general-purpose agents capable of solving a broad spectrum of tasks. However, current models remain inefficient at reasoning: they apply fixed inference-time compute regardless of task complexity, often overthinking simple problems while underthinking hard ones. This survey presents a comprehensive review of efficient test-time compute (TTC) strategies, which aim to improve the computational efficiency of LLM reasoning. We introduce a two-tiered taxonomy that distinguishes between L1-controllability, methods that operate under fixed compute budgets, and L2-adaptiveness, methods that dynamically scale inference based on input difficulty or model confidence. We benchmark leading proprietary LLMs across diverse datasets, highlighting critical trade-offs between reasoning performance and token usage. Compared to prior surveys on efficient reasoning, our review emphasizes the practical control, adaptability, and scalability of TTC methods. Finally, we discuss emerging trends such as hybrid thinking models and identify key challenges for future work towards making LLMs more computationally efficient, robust, and responsive to user constraints.

Plug and Play with Prompts: A Prompt Tuning Approach for Controlling Text Generation

Apr 08, 2024

Abstract: Transformer-based Large Language Models (LLMs) have shown exceptional language generation capabilities in response to text-based prompts. However, controlling the direction of generation via textual prompts has been challenging, especially with smaller models. In this work, we explore the use of Prompt Tuning to achieve controlled language generation. Generated text is steered using prompt embeddings, which are trained using a small language model, used as a discriminator. Moreover, we demonstrate that these prompt embeddings can be trained with a very small dataset, with as low as a few hundred training examples. Our method thus offers a data and parameter efficient solution towards controlling language model outputs. We carry out extensive evaluation on four datasets: SST-5 and Yelp (sentiment analysis), GYAFC (formality) and JIGSAW (toxic language). Finally, we demonstrate the efficacy of our method towards mitigating harmful, toxic, and biased text generated by language models.

* 9 pages, 3 figures, Presented at Deployable AI Workshop at AAAI-2024

Sparse Distributed Memory using Spiking Neural Networks on Nengo

Sep 07, 2021

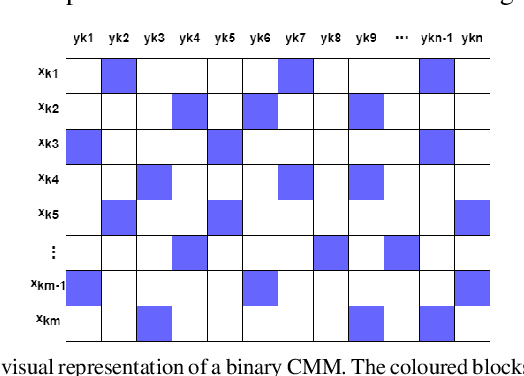

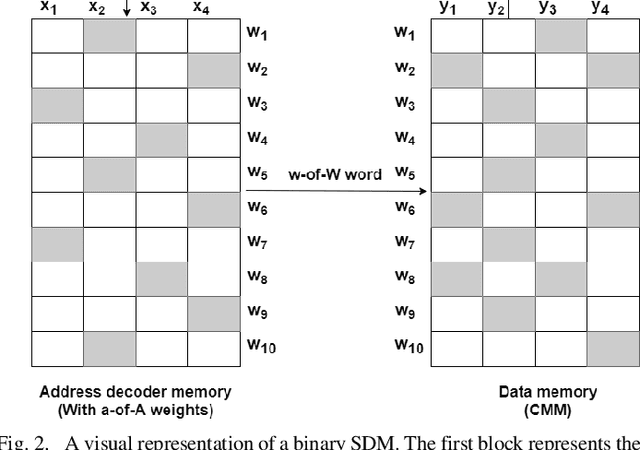

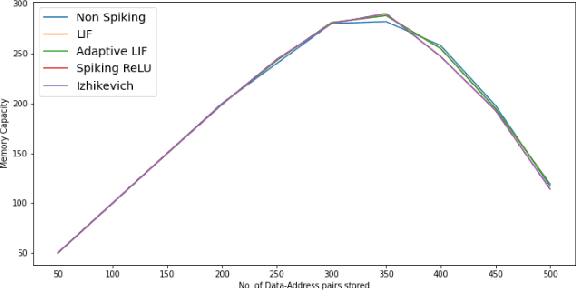

Abstract: We present a Spiking Neural Network (SNN) based Sparse Distributed Memory (SDM) implemented on the Nengo framework. We have based our work on previous work by Furber et al. (2004), implementing SDM using N-of-M codes. As an integral part of the SDM design, we have implemented Correlation Matrix Memory (CMM) using SNN on Nengo. Our SNN implementation uses Leaky Integrate and Fire (LIF) spiking neuron models on Nengo. Our objective is to understand how well SNN-based SDMs perform in comparison to conventional SDMs. Towards this, we have simulated both conventional and SNN-based SDM and CMM on Nengo. We observe that SNN-based models perform similarly to the conventional ones. In order to evaluate the performance of different SNNs, we repeated the experiment using Adaptive-LIF, Spiking Rectified Linear Unit, and Izhikevich models and obtained similar results. We conclude that it is indeed feasible to develop some types of associative memories using spiking neurons whose memory capacity and other features are similar to the performance without SNNs. Finally, we have implemented an application where MNIST images, encoded with N-of-M codes, are associated with their labels and stored in the SNN-based SDM.
