Ramazan Gökberk Cinbiş

Semantics-driven Attentive Few-shot Learning over Clean and Noisy Samples

Jan 09, 2022

Abstract: Over the last couple of years, few-shot learning (FSL) has attracted great attention as a way to minimize the dependency on labeled training examples. An inherent difficulty in FSL is handling the ambiguities that result from having too few training samples per class. To tackle this fundamental challenge, we aim to train meta-learner models that can leverage prior semantic knowledge about novel classes to guide the classifier synthesis process. In particular, we propose semantically-conditioned feature attention and sample attention mechanisms that estimate the importance of representation dimensions and training instances, respectively. We also study the problem of sample noise in FSL, towards the utilization of meta-learners in more realistic and imperfect settings. Our experimental results demonstrate the effectiveness of the proposed semantic FSL model with and without sample noise.
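
To make the two attention ideas concrete, here is a minimal sketch and not the authors' model. It assumes the class semantic embedding has already been projected into the same space as the image features, scores each support sample by its dot product with that embedding (sample attention), and gates each feature dimension with a sigmoid of the embedding (feature attention). The function names and both scoring rules are illustrative assumptions.

```python
import math


def softmax(scores):
    # Numerically stable softmax over a list of floats.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def attentive_prototype(support, semantic):
    """Build a class prototype from a few support vectors.

    support  : list of feature vectors (one per training sample)
    semantic : class embedding, ASSUMED to share the feature dimension
    """
    # Feature attention (assumption): one gate in (0, 1) per dimension.
    feat_attn = [sigmoid(s) for s in semantic]
    # Sample attention (assumption): similarity to the semantic vector,
    # normalized over the support set so the weights sum to 1.
    scores = [sum(f * s for f, s in zip(x, semantic)) for x in support]
    sample_attn = softmax(scores)
    # Prototype: attention-weighted mean of samples, gated per dimension.
    proto = [
        feat_attn[d] * sum(w * x[d] for w, x in zip(sample_attn, support))
        for d in range(len(semantic))
    ]
    return proto, sample_attn, feat_attn


# Toy usage: the third support sample plays the role of a noisy example.
support = [[1.0, 0.0], [0.9, 0.1], [-1.0, 0.5]]
semantic = [2.0, 0.0]
proto, sample_attn, feat_attn = attentive_prototype(support, semantic)
```

In this toy setup, a sample that disagrees with the semantic vector receives a smaller weight, which is the intuition behind using sample attention when some support samples are noisy.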

Key Protected Classification for Collaborative Learning

Aug 27, 2019

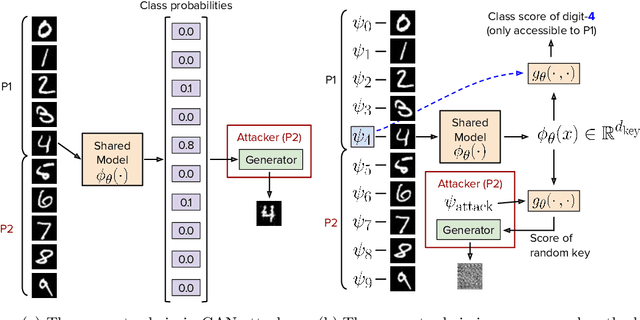

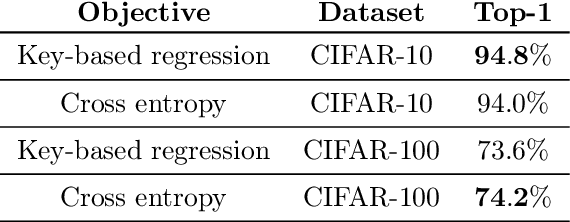

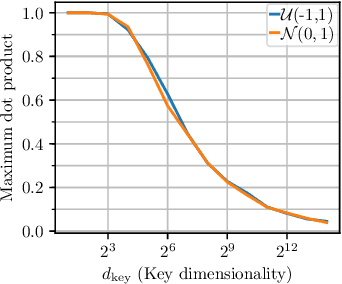

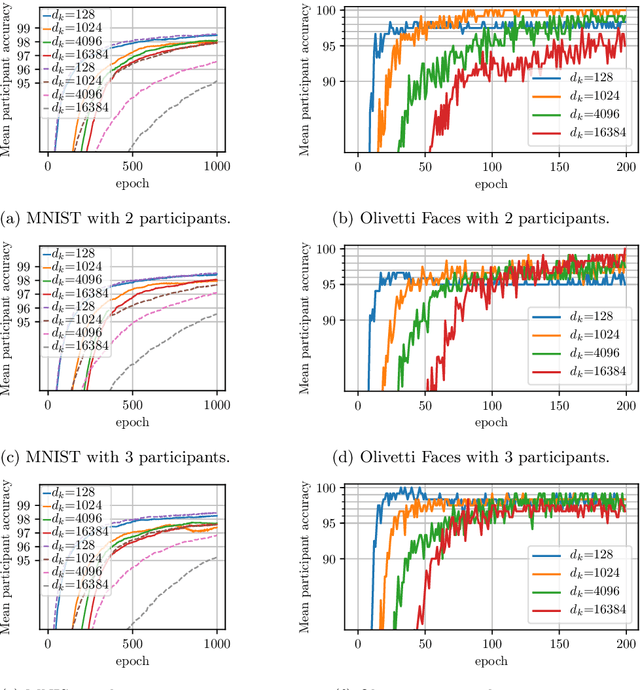

Abstract: Large-scale datasets play a fundamental role in training deep learning models. However, dataset collection is difficult in domains that involve sensitive information. Collaborative learning techniques provide a privacy-preserving solution by enabling training over a number of private datasets that are not shared by their owners. Recently, however, it has been shown that existing collaborative learning frameworks are vulnerable to an active adversary that runs a generative adversarial network (GAN) attack. In this work, we propose a novel classification model that is resilient against such attacks by design. More specifically, we introduce a key-based classification model and a principled training scheme that protects class scores by using class-specific private keys, which effectively hides the information necessary for a GAN attack. We additionally show how to utilize high-dimensional keys to improve robustness against attacks without increasing the model complexity. Our detailed experiments demonstrate the effectiveness of the proposed technique.
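
The key-based idea can be illustrated with a small sketch, offered under stated assumptions and not as the paper's actual model or training scheme. It assumes each class owns a random unit-norm key vector that its owner keeps private, and that a class score is the dot product between the model's output embedding and that key. The helper names `make_key`, `class_scores`, and `predict` are hypothetical.

```python
import math
import random


def make_key(dim, rng):
    # Hypothetical private class key: a random direction of unit length.
    v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(a * a for a in v))
    return [a / norm for a in v]


def class_scores(embedding, keys):
    # Score of a class = dot product of the embedding with that class's key.
    # Without a key, the corresponding class score cannot be computed.
    return {
        label: sum(e * k for e, k in zip(embedding, key))
        for label, key in keys.items()
    }


def predict(embedding, keys):
    scores = class_scores(embedding, keys)
    return max(scores, key=scores.get)


# Toy usage: two participants, each holding the key of its own class.
rng = random.Random(0)
keys = {"class_a": make_key(16, rng), "class_b": make_key(16, rng)}
# An embedding aligned with a class's key scores highest for that class.
label = predict(keys["class_a"], keys)
```

The sketch only shows why scores depend on the keys: a party that never sees another class's key has no direct way to evaluate that class's score from the shared embedding. The key dimension (16 here) is an arbitrary choice for illustration.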
