Preetham Putha

A Diffusion-Driven Fine-Grained Nodule Synthesis Framework for Enhanced Lung Nodule Detection from Chest Radiographs

Mar 02, 2026

Abstract: Early detection of lung cancer in chest radiographs (CXRs) is crucial for improving patient outcomes, yet nodule detection remains challenging because nodules are subtle in appearance and vary in radiological characteristics such as size, texture, and boundary. For robust analysis, this diversity must be well represented in the training datasets of deep learning-based Computer-Assisted Diagnosis (CAD) systems. However, assembling such datasets is costly and often impractical, motivating the need for realistic synthetic data generation. Existing methods lack fine-grained control over synthetic nodule generation, limiting their utility in addressing data scarcity. This paper proposes a novel diffusion-based framework with low-rank adaptation (LoRA) adapters for characteristic-controlled nodule synthesis on CXRs. We begin by addressing size and shape control through nodule-mask-conditioned training of the base diffusion model. To achieve individual characteristic control, we train separate LoRA modules, each dedicated to a specific radiological feature. However, since nodules rarely exhibit isolated characteristics, effective multi-characteristic control requires a balanced integration of features. We address this by leveraging the dynamic composability of LoRAs and revisiting existing merging strategies. Building on this, we identify two key issues: overlapping attention regions and non-orthogonal parameter spaces. To overcome these limitations, we introduce a novel orthogonality loss term during LoRA composition training. Extensive experiments on both in-house and public datasets demonstrate improved downstream nodule detection. Radiologist evaluations confirm the fine-grained controllability of our generated nodules, and across multiple quantitative metrics, our method surpasses existing nodule generation approaches for CXRs.

DiffusionXRay: A Diffusion and GAN-Based Approach for Enhancing Digitally Reconstructed Chest Radiographs

Mar 02, 2026

Abstract: Deep learning-based automated diagnosis of lung cancer has emerged as a crucial advancement that enables healthcare professionals to detect the disease and initiate treatment earlier. However, these models require extensive training datasets with diverse case-specific properties. High-quality annotated data is particularly challenging to obtain, especially for cases with subtle pulmonary nodules that are difficult to detect even for experienced radiologists. This scarcity of well-labeled datasets can limit model performance and generalization across different patient populations. Digitally reconstructed radiographs (DRRs), which use CT scans to generate synthetic frontal chest X-rays with artificially inserted lung nodules, offer one potential solution. However, this approach suffers from significant image quality degradation, particularly in the form of blurred anatomical features and loss of fine lung field structures. To overcome this, we introduce DiffusionXRay, a novel image restoration pipeline for chest X-ray images that combines denoising diffusion probabilistic models (DDPMs) and generative adversarial networks (GANs). DiffusionXRay incorporates a two-stage training process. First, to address the challenge of training data scarcity, we investigate two independent approaches, DDPM-LQ and the GAN-based MUNIT-LQ, for generating low-quality chest X-rays (CXRs), posing this as a style transfer problem. Subsequently, we train a DDPM-based model on paired low-quality and high-quality images, enabling it to learn the nuances of X-ray image restoration. Our method demonstrates promising results in enhancing image clarity, contrast, and overall diagnostic value of chest X-rays while preserving subtle yet clinically significant artifacts, validated by both quantitative metrics and expert radiological assessment.

* Published at MICCAI 2025

Comparative Evaluation of Digital and Analog Chest Radiographs to Identify Tuberculosis using Deep Learning Model

Jul 27, 2023

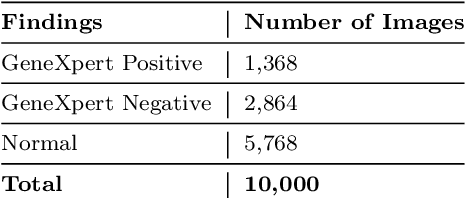

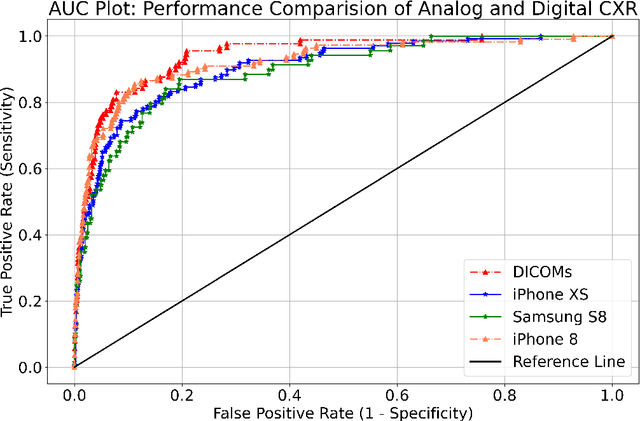

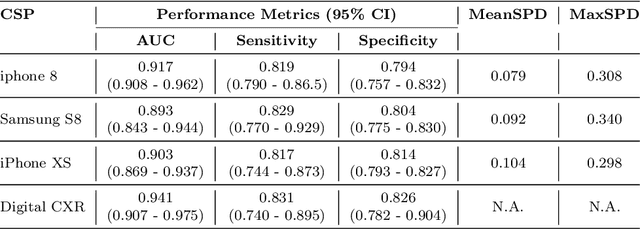

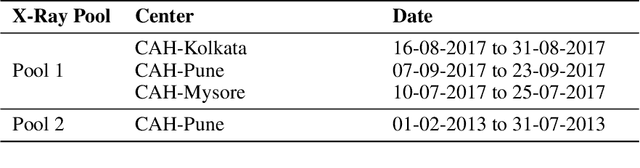

Abstract: Purpose: Chest X-ray (CXR) is an essential tool and one of the most commonly prescribed imaging examinations for detecting pulmonary abnormalities, with an estimated 2 billion or more examinations performed worldwide each year. However, the accurate and timely diagnosis of TB remains an unmet goal. The prevalence of TB is highest in low- and middle-income countries, where a portable, automated, and reliable solution is required. In this study, we compared the performance of a DL-based device on digital and analog CXRs. The evaluated DL-based device can be used in resource-constrained settings. Methods: A total of 10,000 CXR DICOMs (.dcm) and printed photos of the films acquired with three different cellular phones (Samsung S8, iPhone 8, and iPhone XS), along with their radiological reports, were retrospectively collected from various sites across India from April 2020 to March 2021. Results: 10,000 chest X-rays were utilized to evaluate the DL-based device in identifying radiological signs of TB. The AUC of qXR for detecting signs of tuberculosis on the original DICOM dataset was 0.928, with a sensitivity of 0.841 at a specificity of 0.806. At an optimal threshold, the differences in AUC between the three smartphones and the original DICOMs were 0.024 (2.55%), 0.048 (5.10%), and 0.038 (1.91%). The minimal difference demonstrates the robustness of the DL-based device in identifying radiological signs of TB in both digital and analog CXRs.
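As a reading aid for the sensitivity and specificity figures quoted in this abstract, the following is a minimal sketch of how both are computed at a fixed decision threshold. The function name `sensitivity_specificity`, the threshold of 0.5, and the toy scores and labels are all assumptions made up for illustration; none of them come from the study or from qXR.

```python
def sensitivity_specificity(scores, labels, threshold):
    """Return (sensitivity, specificity), calling a case positive when score >= threshold.

    scores: model outputs, higher means more suspicious (hypothetical values).
    labels: ground truth, 1 = finding present, 0 = finding absent.
    """
    pairs = list(zip(scores, labels))
    tp = sum(1 for s, y in pairs if y == 1 and s >= threshold)  # true positives
    fn = sum(1 for s, y in pairs if y == 1 and s < threshold)   # false negatives
    tn = sum(1 for s, y in pairs if y == 0 and s < threshold)   # true negatives
    fp = sum(1 for s, y in pairs if y == 0 and s >= threshold)  # false positives
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one positive and one negative case")
    return tp / (tp + fn), tn / (tn + fp)


# Toy data invented for this example only (not from the study).
scores = [0.9, 0.8, 0.4, 0.7, 0.3, 0.2, 0.6, 0.1]
labels = [1, 1, 1, 0, 0, 0, 1, 0]
sens, spec = sensitivity_specificity(scores, labels, threshold=0.5)
print(f"sensitivity={sens:.3f} specificity={spec:.3f}")
```

Raising the threshold trades sensitivity for specificity, which is why a sensitivity is normally reported at a stated specificity, as it is here.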

Can Artificial Intelligence Reliably Report Chest X-Rays?: Radiologist Validation of an Algorithm trained on 1.2 Million X-Rays

Jul 19, 2018

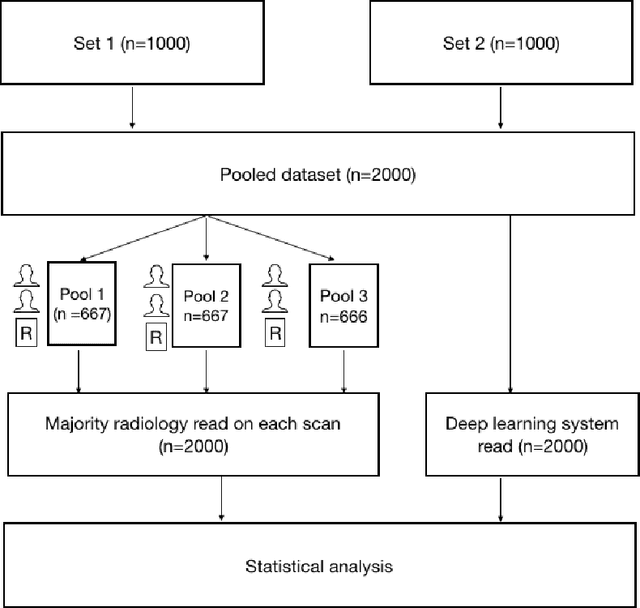

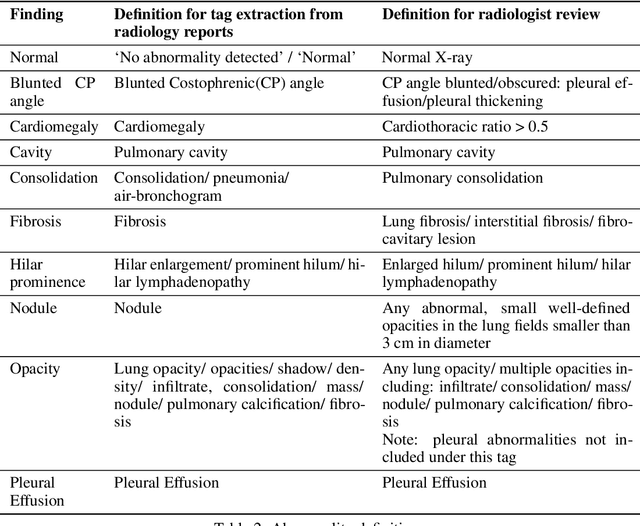

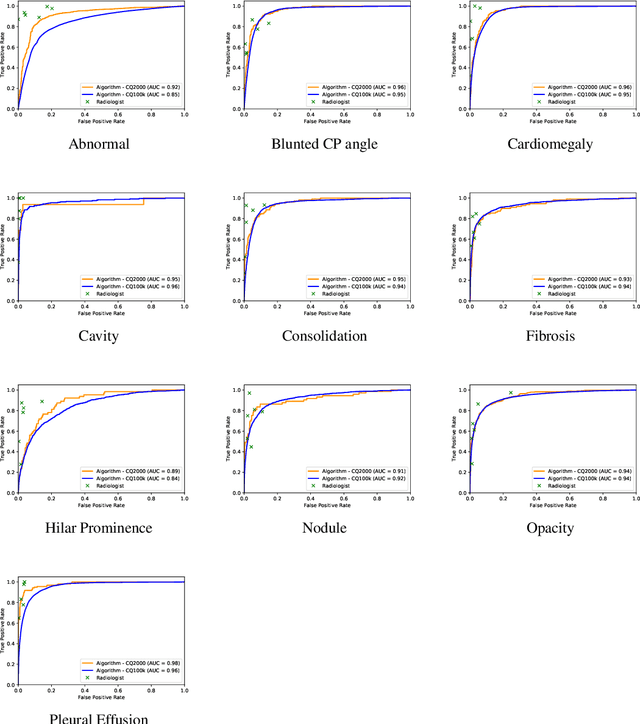

Abstract: Background and Objectives: Chest x-rays are the most commonly performed, cost-effective diagnostic imaging tests ordered by physicians. A clinically validated, automated artificial intelligence system that can reliably separate normal from abnormal would be invaluable in addressing the problem of reporting backlogs and the lack of radiologists in low-resource settings. The aim of this study was to develop and validate a deep learning system to detect chest x-ray abnormalities. Methods: A deep learning system was trained on 1.2 million x-rays and their corresponding radiology reports to identify abnormal x-rays and the following specific abnormalities: blunted costophrenic angle, calcification, cardiomegaly, cavity, consolidation, fibrosis, hilar enlargement, opacity and pleural effusion. The system was tested versus a 3-radiologist majority on an independent, retrospectively collected de-identified set of 2000 x-rays. The primary accuracy measure was area under the ROC curve (AUC), estimated separately for each abnormality as well as for normal versus abnormal reports. Results: The deep learning system demonstrated an AUC of 0.93 (CI 0.92-0.94) for detection of abnormal scans, and AUC (CI) of 0.94 (0.92-0.97), 0.88 (0.85-0.91), 0.97 (0.95-0.99), 0.92 (0.82-1), 0.94 (0.91-0.97), 0.92 (0.88-0.95), 0.89 (0.84-0.94), 0.93 (0.92-0.95), 0.98 (0.97-1), 0.93 (0.87-0.99) for the detection of blunted CP angle, calcification, cardiomegaly, cavity, consolidation, fibrosis, hilar enlargement, opacity and pleural effusion respectively. Conclusions and Relevance: Our study shows that a deep learning algorithm trained on a large quantity of labelled data can accurately detect abnormalities on chest x-rays. As these systems further increase in accuracy, the feasibility of using artificial intelligence to extend the reach of chest x-ray interpretation and improve reporting efficiency will increase in tandem.
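Since AUC is the primary accuracy measure in this abstract, a minimal sketch of one standard way to compute it may help: the rank-based (Mann-Whitney) formulation, in which AUC is the probability that a randomly chosen abnormal case receives a higher score than a randomly chosen normal case. The function name `roc_auc` and the toy data are assumptions for illustration; the abstract does not say how the authors estimated AUC or its confidence intervals.

```python
def roc_auc(scores, labels):
    """Area under the ROC curve via pairwise comparison of positives and negatives.

    A positive scored above a negative counts as 1, a tie counts as 0.5.
    This O(P * N) form is for clarity, not for large datasets.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy data invented for this example only (not from the study).
scores = [0.9, 0.8, 0.4, 0.7, 0.3, 0.2, 0.6, 0.1]
labels = [1, 1, 1, 0, 0, 0, 1, 0]
auc = roc_auc(scores, labels)
print(f"AUC={auc:.3f}")
```

An AUC of 0.5 corresponds to chance-level ranking and 1.0 to perfect separation, so the reported values of 0.88 to 0.98 indicate that abnormal studies are ranked above normal ones in most pairs.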
