Get our free extension to see links to code for papers anywhere online!Free add-on: code for papers everywhere!Free add-on: See code for papers anywhere!

Prasanna B

Continual Domain Adaptation through Pruning-aided Domain-specific Weight Modulation

Apr 15, 2023Figures and Tables:

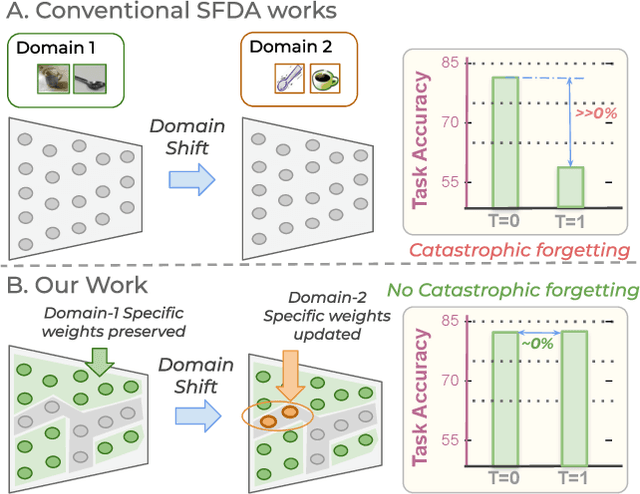

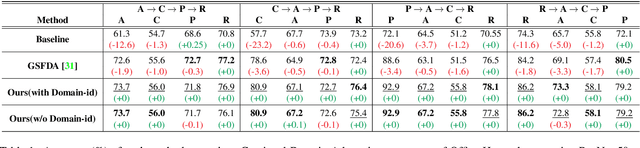

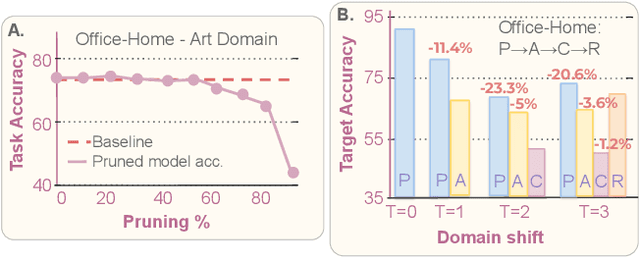

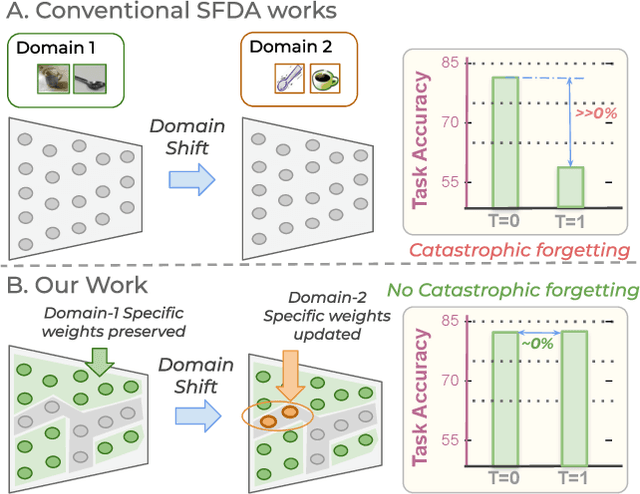

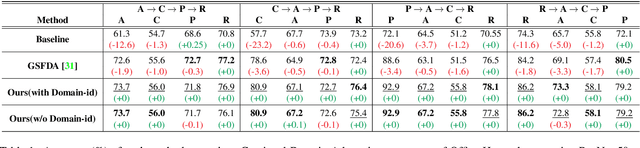

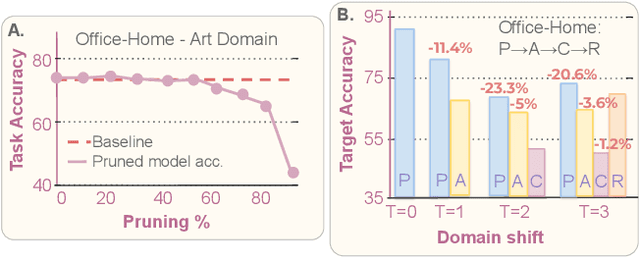

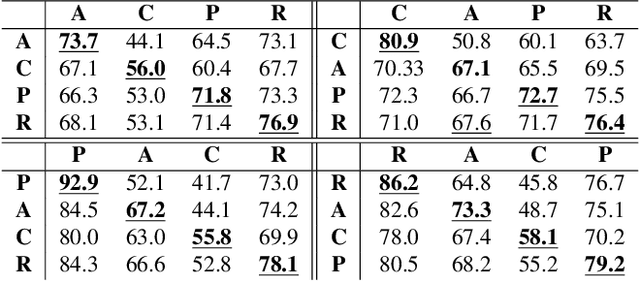

Abstract:In this paper, we propose to develop a method to address unsupervised domain adaptation (UDA) in a practical setting of continual learning (CL). The goal is to update the model on continually changing domains while preserving domain-specific knowledge to prevent catastrophic forgetting of past-seen domains. To this end, we build a framework for preserving domain-specific features utilizing the inherent model capacity via pruning. We also perform effective inference using a novel batch-norm based metric to predict the final model parameters to be used accurately. Our approach achieves not only state-of-the-art performance but also prevents catastrophic forgetting of past domains significantly. Our code is made publicly available.

* CVPR CLVision Workshop 2023, For code see

https://github.com/PrasannaB29/PACDA

Via

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge