Pini Zilber

Imbalanced Mixed Linear Regression

Jan 29, 2023

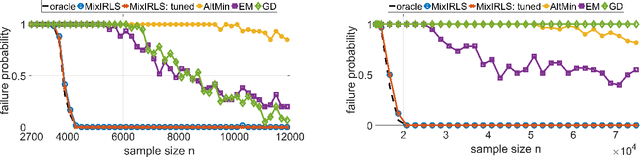

Abstract: We consider the problem of mixed linear regression (MLR), where each observed sample belongs to one of $K$ unknown linear models. In practical applications, the proportions of the $K$ components are often imbalanced. Unfortunately, most MLR methods do not perform well in such settings. Motivated by this practical challenge, in this work we propose Mix-IRLS, a novel, simple and fast algorithm for MLR with excellent performance on both balanced and imbalanced mixtures. In contrast to popular approaches that recover the $K$ models simultaneously, Mix-IRLS recovers them sequentially using tools from robust regression. Empirically, Mix-IRLS succeeds in a broad range of settings where other methods fail. These include imbalanced mixtures, small sample sizes, presence of outliers, and an unknown number of models $K$. In addition, Mix-IRLS outperforms competing methods on several real-world datasets, in some cases by a large margin. We complement our empirical results by deriving a recovery guarantee for Mix-IRLS, which highlights its advantage on imbalanced mixtures.
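The idea of recovering components one at a time with robust regression can be illustrated with a minimal sketch. This is not the authors' Mix-IRLS implementation: the weight function, the parameter `eta`, the least-squares initialization, the `inlier_factor` threshold, and the function names `irls_dominant` and `sequential_mlr` are all assumptions chosen for illustration, and any refinement phase is omitted.

```python
import numpy as np

def irls_dominant(X, y, eta=1.0, n_iter=50, eps=1e-12):
    """Iteratively reweighted least squares that downweights large residuals,
    so the fit drifts toward the component holding most of the samples."""
    beta = np.linalg.lstsq(X, y, rcond=None)[0]      # assumed start: plain least squares
    for _ in range(n_iter):
        r = y - X @ beta
        scale = max(np.median(np.abs(r)), eps)       # robust residual scale
        w = 1.0 / (1.0 + eta * (r / scale) ** 2)     # assumed weight function
        sw = np.sqrt(w)
        beta = np.linalg.lstsq(X * sw[:, None], y * sw, rcond=None)[0]
    return beta

def sequential_mlr(X, y, K, inlier_factor=10.0):
    """Fit K linear models one after another, removing well-fit samples each round."""
    remaining = np.ones(len(y), dtype=bool)
    betas = []
    for k in range(K):
        Xk, yk = X[remaining], y[remaining]
        if k == K - 1:
            beta = np.linalg.lstsq(Xk, yk, rcond=None)[0]   # last component: ordinary fit
        else:
            beta = irls_dominant(Xk, yk)
        betas.append(beta)
        r = np.abs(y - X @ beta)
        thresh = inlier_factor * max(np.median(r[remaining]), 1e-12)
        remaining &= r > thresh                      # keep only samples this model fits poorly
    return betas

# Synthetic imbalanced mixture: 80% of samples from model 0, 20% from model 1.
rng = np.random.default_rng(0)
n, d = 300, 3
X = rng.standard_normal((n, d))
labels = (np.arange(n) >= 240).astype(int)
betas_true = np.array([[1.0, -2.0, 0.5], [-1.5, 0.5, 2.0]])
y = np.einsum("ij,ij->i", X, betas_true[labels]) + 0.01 * rng.standard_normal(n)

est = sequential_mlr(X, y, K=2)
for k, b in enumerate(est):
    print(f"model {k}: error = {np.linalg.norm(b - betas_true[k]):.4f}")
```

In this sketch the imbalance helps rather than hurts: the minority samples behave like outliers for the robust fit, so the dominant model is found first and the remaining samples are then easier to explain.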

Inductive Matrix Completion: No Bad Local Minima and a Fast Algorithm

Jan 31, 2022

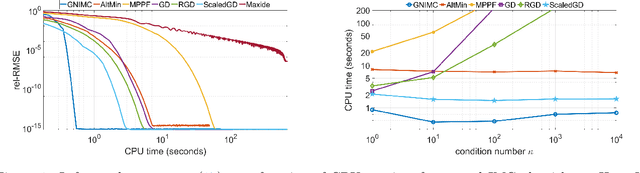

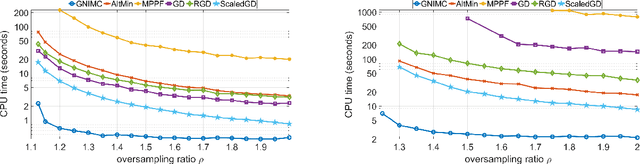

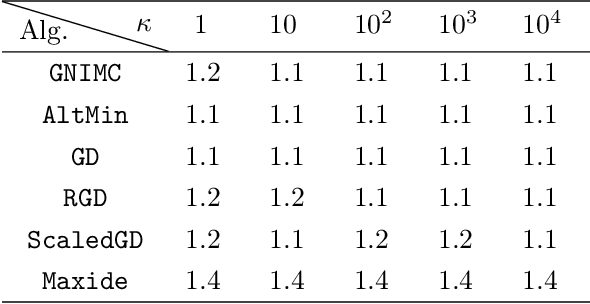

Abstract: The inductive matrix completion (IMC) problem is to recover a low rank matrix from few observed entries while incorporating prior knowledge about its row and column subspaces. In this work, we make three contributions to the IMC problem: (i) we prove that under suitable conditions, the IMC optimization landscape has no bad local minima; (ii) we derive a simple scheme with theoretical guarantees to estimate the rank of the unknown matrix; and (iii) we propose GNIMC, a simple Gauss-Newton based method to solve the IMC problem, analyze its runtime and derive recovery guarantees for it. The guarantees for GNIMC are sharper in several aspects than those available for other methods, including a quadratic convergence rate, fewer required observed entries and stability to errors or deviations from low-rank. Empirically, given entries observed uniformly at random, GNIMC recovers the underlying matrix substantially faster than several competing methods.

GNMR: A provable one-line algorithm for low rank matrix recovery

Jun 24, 2021

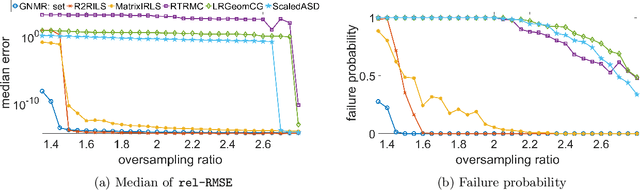

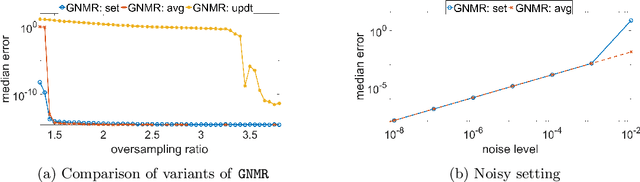

Abstract: Low rank matrix recovery problems, including matrix completion and matrix sensing, appear in a broad range of applications. In this work we present GNMR, an extremely simple iterative algorithm for low rank matrix recovery, based on a Gauss-Newton linearization. On the theoretical front, we derive recovery guarantees for GNMR in both the matrix sensing and matrix completion settings. A key property of GNMR is that it implicitly keeps the factor matrices approximately balanced throughout its iterations. On the empirical front, we show that for matrix completion with uniform sampling, GNMR performs better than several popular methods, especially when given very few observations close to the information limit.
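To make the Gauss-Newton linearization concrete for matrix completion, here is a minimal sketch. It is a generic Gauss-Newton iteration on the factorization $X \approx UV^\top$ with a minimum-norm least-squares step, and it is not necessarily the variant analyzed in the paper. The function name `gauss_newton_step`, the spectral initialization, the `rcond` cutoff, and the problem sizes are assumptions made for illustration.

```python
import numpy as np

def gauss_newton_step(U, V, rows, cols, vals):
    """One Gauss-Newton step for min sum_{(i,j) observed} ((U V^T)_ij - X_ij)^2.

    The bilinear map (U, V) -> U V^T is linearized around the current factors,
    and the resulting linear least-squares problem is solved for (dU, dV).
    """
    n1, r = U.shape
    n2 = V.shape[0]
    m = len(vals)
    J = np.zeros((m, (n1 + n2) * r))                 # Jacobian of observed entries
    idx = np.arange(m)
    for k in range(r):
        J[idx, rows * r + k] = V[cols, k]            # derivative w.r.t. U[i, k]
        J[idx, n1 * r + cols * r + k] = U[rows, k]   # derivative w.r.t. V[j, k]
    resid = vals - np.sum(U[rows] * V[cols], axis=1)
    # lstsq returns the minimum-norm solution; rcond discards the gauge null space.
    step = np.linalg.lstsq(J, resid, rcond=1e-10)[0]
    dU = step[: n1 * r].reshape(n1, r)
    dV = step[n1 * r:].reshape(n2, r)
    return U + dU, V + dV

# Synthetic rank-2 matrix with about 80% of entries observed uniformly at random.
rng = np.random.default_rng(0)
n1, n2, r = 30, 30, 2
X = rng.standard_normal((n1, r)) @ rng.standard_normal((n2, r)).T
mask = rng.random((n1, n2)) < 0.8
rows, cols = np.nonzero(mask)
vals = X[rows, cols]

# Assumed initialization: truncated SVD of the rescaled zero-filled matrix.
p = len(vals) / (n1 * n2)
M0 = np.zeros((n1, n2))
M0[rows, cols] = vals / p
Uf, s, Vt = np.linalg.svd(M0, full_matrices=False)
U = Uf[:, :r] * np.sqrt(s[:r])
V = Vt[:r].T * np.sqrt(s[:r])

for _ in range(15):
    U, V = gauss_newton_step(U, V, rows, cols, vals)

rel_err = np.linalg.norm(U @ V.T - X) / np.linalg.norm(X)
print(f"relative recovery error: {rel_err:.2e}")
```

Each iteration is a single linear least-squares solve, which is the sense in which a Gauss-Newton scheme of this kind can be stated in one line. The dense Jacobian here is only practical for small problems.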
