Philipp Wacker

Gradient-Free Sequential Bayesian Experimental Design via Interacting Particle Systems

Apr 17, 2025

Abstract: We introduce a gradient-free framework for Bayesian Optimal Experimental Design (BOED) in sequential settings, aimed at complex systems where gradient information is unavailable. Our method combines Ensemble Kalman Inversion (EKI) for design optimization with the Affine-Invariant Langevin Dynamics (ALDI) sampler for efficient posterior sampling, both of which are derivative-free and ensemble-based. To address the computational challenges posed by nested expectations in BOED, we propose variational Gaussian and parametrized Laplace approximations that provide tractable upper and lower bounds on the Expected Information Gain (EIG). These approximations enable scalable utility estimation in high-dimensional spaces and PDE-constrained inverse problems. We demonstrate the performance of our framework through numerical experiments ranging from linear Gaussian models to PDE-based inference tasks, highlighting the method's robustness, accuracy, and efficiency in information-driven experimental design.

Connections between sequential Bayesian inference and evolutionary dynamics

Nov 25, 2024

Abstract: It has long been posited that there is a connection between the dynamical equations describing evolutionary processes in biology and sequential Bayesian learning methods. This manuscript describes new research in which this precise connection is rigorously established in the continuous time setting. Here we focus on a partial differential equation known as the Kushner-Stratonovich equation, which describes the evolution of the posterior density in time. Of particular importance is a piecewise smooth approximation of the observation path, from which the discrete time filtering equations are obtained; these are shown to converge to a Stratonovich interpretation of the Kushner-Stratonovich equation. This smooth formulation will then be used to draw precise connections between nonlinear stochastic filtering and replicator-mutator dynamics. Additionally, gradient flow formulations will be investigated, as well as a form of replicator-mutator dynamics which is shown to be beneficial for the misspecified model filtering problem. It is hoped this work will spur further research into exchanges between sequential learning and evolutionary biology and inspire new algorithms in filtering and sampling.

Ensemble-based gradient inference for particle methods in optimization and sampling

Sep 23, 2022

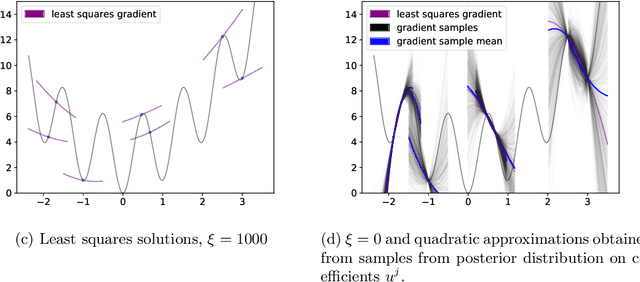

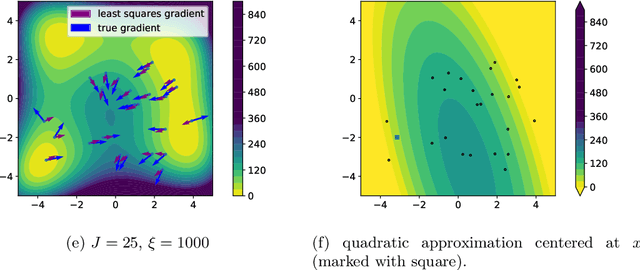

Abstract: We propose an approach based on function evaluations and Bayesian inference to extract higher-order differential information of objective functions from a given ensemble of particles. Pointwise evaluation $\{V(x^i)\}_i$ of some potential $V$ in an ensemble $\{x^i\}_i$ contains implicit information about first or higher order derivatives, which can be made explicit with little computational effort (ensemble-based gradient inference, EGI). We suggest using this information to improve established ensemble-based numerical methods for optimization and sampling, such as Consensus-based optimization and Langevin-based samplers. Numerical studies indicate that the augmented algorithms are often superior to their gradient-free variants; in particular, the augmented methods help the ensembles to escape their initial domain, to explore multimodal, non-Gaussian settings, and to speed up the collapse at the end of optimization dynamics. The code for the numerical examples in this manuscript can be found in the paper's GitHub repository (https://github.com/MercuryBench/ensemble-based-gradient.git).
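To illustrate how pointwise evaluations $\{V(x^i)\}_i$ carry implicit gradient information, here is a minimal sketch that fits a local linear model to the ensemble by ordinary least squares. This is a simplified stand-in, not the Bayesian inference procedure of the paper or the code in its repository; the function name `infer_gradient`, the quadratic test potential, and the ensemble size and spread are all assumptions chosen for illustration.

```python
import numpy as np

def infer_gradient(X, V_vals):
    """Least-squares estimate of grad V at the ensemble mean (hypothetical helper).

    X: (J, d) array of particle positions x^i.
    V_vals: (J,) array of evaluations V(x^i).
    """
    x_bar = X.mean(axis=0)
    # Design matrix for the local model V(x) ~ c + g . (x - x_bar)
    A = np.hstack([np.ones((X.shape[0], 1)), X - x_bar])
    coef, *_ = np.linalg.lstsq(A, V_vals, rcond=None)
    return x_bar, coef[1:]  # drop the intercept c, keep the slope g

# Assumed test potential: V(x) = 0.5 * x^T H x, whose exact gradient is H x.
H = np.array([[2.0, 0.5], [0.5, 1.0]])

def V(x):
    return 0.5 * x @ H @ x

# Assumed ensemble: 20 particles tightly clustered around (1, -2).
rng = np.random.default_rng(0)
X = np.array([1.0, -2.0]) + 0.01 * rng.standard_normal((20, 2))
V_vals = np.array([V(x) for x in X])

x_bar, g = infer_gradient(X, V_vals)
print("estimated gradient:", g)
print("exact gradient:    ", H @ x_bar)
```

Only function values enter the estimate, so it remains usable when derivatives of $V$ are unavailable. A linear fit recovers first-order information only; higher-order information would need a richer local model.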
