Pengwei Yang

DSPR: Dual-Stream Physics-Residual Networks for Trustworthy Industrial Time Series Forecasting

Apr 08, 2026

Abstract: Accurate forecasting of industrial time series requires balancing predictive accuracy with physical plausibility under non-stationary operating conditions. Existing data-driven models often achieve strong statistical performance but struggle to respect regime-dependent interaction structures and transport delays inherent in real-world systems. To address this challenge, we propose DSPR (Dual-Stream Physics-Residual Networks), a forecasting framework that explicitly decouples stable temporal patterns from regime-dependent residual dynamics. The first stream models the statistical temporal evolution of individual variables. The second stream focuses on residual dynamics through two key mechanisms: an Adaptive Window module that estimates flow-dependent transport delays, and a Physics-Guided Dynamic Graph that incorporates physical priors to learn time-varying interaction structures while suppressing spurious correlations. Experiments on four industrial benchmarks spanning heterogeneous regimes demonstrate that DSPR consistently improves forecasting accuracy and robustness under regime shifts while maintaining strong physical plausibility. It achieves state-of-the-art predictive performance, with Mean Conservation Accuracy exceeding 99% and Total Variation Ratio reaching up to 97.2%. Beyond forecasting, the learned interaction structures and adaptive lags provide interpretable insights that are consistent with known domain mechanisms, such as flow-dependent transport delays and wind-to-power scaling behaviors. These results suggest that architectural decoupling with physics-consistent inductive biases offers an effective path toward trustworthy industrial time-series forecasting. Furthermore, DSPR's demonstrated robust performance in long-term industrial deployment bridges the gap between advanced forecasting models and trustworthy autonomous control systems.

Energy Loss Prediction in IoT Energy Services

May 16, 2023

Abstract: We propose a novel Energy Loss Prediction (ELP) framework that estimates the energy loss in sharing crowdsourced energy services. Crowdsourcing wireless energy services is a novel and convenient solution to enable the ubiquitous charging of nearby IoT devices. Capturing the wireless energy sharing loss is essential for the successful deployment of efficient energy service composition techniques. We propose Easeformer, a novel attention-based algorithm to predict the battery levels of IoT devices in a crowdsourced energy sharing environment. The predicted battery levels are used to estimate the energy loss. Extensive experiments on real wireless energy datasets demonstrate the feasibility and effectiveness of the proposed framework and show that it significantly outperforms existing methods.

Monitoring Efficiency of IoT Wireless Charging

Mar 10, 2023

Abstract: Crowdsourcing wireless energy is a novel and convenient solution to charge nearby IoT devices. Several applications have been proposed to enable peer-to-peer wireless energy charging. However, none of them considers the energy efficiency of the wireless transfer of energy. In this paper, we propose an energy estimation framework that predicts the actual received energy. Our framework uses two machine learning algorithms, namely XGBoost and a Neural Network, to estimate the received energy. The results show that the Neural Network model is better than XGBoost at predicting the received energy. We train and evaluate our models on a real wireless energy dataset that we collected.

Establishment of Neural Networks Robust to Label Noise

Dec 04, 2022

Abstract: Label noise is a significant obstacle in deep learning model training. It can have a considerable impact on the performance of image classification models, particularly deep neural networks, which are especially susceptible because they have a strong propensity to memorise noisy labels. In this paper, we examine the fundamental concept underlying related label noise approaches. We build a transition matrix estimator and demonstrate its effectiveness against the actual transition matrix. In addition, we examine the label noise robustness of two convolutional neural network classifiers based on the LeNet and AlexNet designs. Experiments on the two Fashion-MNIST datasets show the robustness of both models. Because time and computing resource constraints prevented us from properly tuning the complex convolutional neural network model, we could not conclusively demonstrate the influence of the transition matrix noise correction on robustness. Future research should fine-tune the neural network model and explore the precision of the estimated transition model.

Contaminated Images Recovery by Implementing Non-negative Matrix Factorisation

Nov 08, 2022

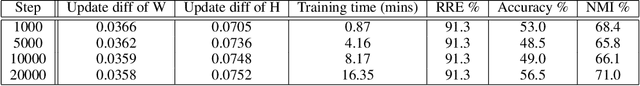

Abstract: Non-negative matrix factorisation (NMF) has been widely used to address the problem of corrupted data in images. The standard NMF algorithm minimises the Euclidean distance between the data matrix and the factorised approximation. Although this method has demonstrated good results, the standard NMF algorithm is sensitive to outliers because it employs the squared error of each data point. In this paper, we theoretically analyse the robustness of the standard NMF, HCNMF and L2,1-NMF algorithms, and run sets of experiments to show their robustness on real datasets, namely ORL and Extended YaleB. Our work demonstrates that each algorithm requires a different number of iterations to converge. Given the high computational complexity of these algorithms, our final HCNMF and L2,1-NMF models do not converge within the iteration limits used in this paper. Nevertheless, the experimental results still demonstrate the robustness of the aforementioned algorithms to some extent.
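The standard NMF objective mentioned in the abstract can be illustrated with a minimal sketch. It uses the well-known Lee and Seung multiplicative updates for the squared Frobenius (Euclidean) error; this is an assumption for illustration, not necessarily the implementation used in the paper. The function name `nmf_multiplicative`, the synthetic matrix `V`, and all parameter values are hypothetical.

```python
import numpy as np


def nmf_multiplicative(V, rank, n_iter=200, eps=1e-10, seed=0):
    """Factorise a non-negative matrix V (n x m) as W @ H.

    Minimises the squared Frobenius norm ||V - W H||_F^2 with
    multiplicative updates, which keep W and H non-negative.
    """
    rng = np.random.default_rng(seed)
    n, m = V.shape
    # Strictly positive random initialisation (assumed, not from the paper).
    W = rng.random((n, rank)) + eps
    H = rng.random((rank, m)) + eps
    errors = []
    for _ in range(n_iter):
        # Update H with W fixed, then W with H fixed.
        H *= (W.T @ V) / (W.T @ W @ H + eps)
        W *= (V @ H.T) / (W @ H @ H.T + eps)
        # Track the squared-error objective after each sweep.
        errors.append(np.linalg.norm(V - W @ H, "fro") ** 2)
    return W, H, errors


# Synthetic non-negative data with an exact rank-3 structure.
data_rng = np.random.default_rng(42)
V = data_rng.random((20, 3)) @ data_rng.random((3, 15))

W, H, errors = nmf_multiplicative(V, rank=3)
print("first objective value:", errors[0])
print("last objective value: ", errors[-1])
```

Because every entry contributes its squared residual to the objective, a single heavily corrupted pixel can dominate the sum, which is the outlier sensitivity the abstract refers to.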
