Pei-Ju Lee

Subgroup Analysis via Model-based Rule Forest

Aug 27, 2024

Abstract:Machine learning models are often criticized for their black-box nature, raising concerns about their applicability in critical decision-making scenarios. Consequently, there is a growing demand for interpretable models in such contexts. In this study, we introduce Model-based Deep Rule Forests (mobDRF), an interpretable representation learning algorithm designed to extract transparent models from data. By leveraging IF-THEN rules with multi-level logic expressions, mobDRF enhances the interpretability of existing models without compromising accuracy. We apply mobDRF to identify key risk factors for cognitive decline in an elderly population, demonstrating its effectiveness in subgroup analysis and local model optimization. Our method offers a promising solution for developing trustworthy and interpretable machine learning models, particularly valuable in fields like healthcare, where understanding differential effects across patient subgroups can lead to more personalized and effective treatments.

Towards Interpretable Deep Extreme Multi-label Learning

Jul 03, 2019

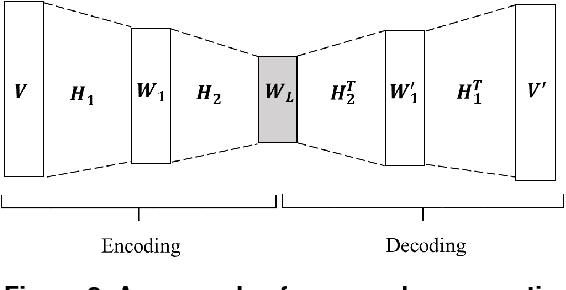

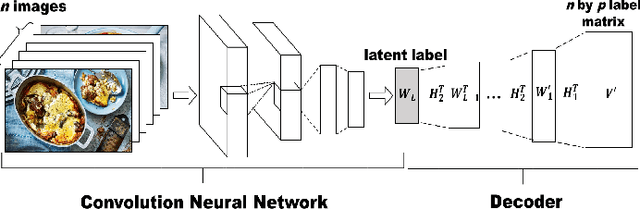

Abstract:Many Machine Learning algorithms, such as deep neural networks, have long been criticized for being "black-boxes"-a kind of models unable to provide how it arrive at a decision without further efforts to interpret. This problem has raised concerns on model applications' trust, safety, nondiscrimination, and other ethical issues. In this paper, we discuss the machine learning interpretability of a real-world application, eXtreme Multi-label Learning (XML), which involves learning models from annotated data with many pre-defined labels. We propose a two-step XML approach that combines deep non-negative autoencoder with other multi-label classifiers to tackle different data applications with a large number of labels. Our experimental result shows that the proposed approach is able to cope with many-label problems as well as to provide interpretable label hierarchies and dependencies that helps us understand how the model recognizes the existences of objects in an image.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge