Paula Lago

In Shift and In Variance: Assessing the Robustness of HAR Deep Learning Models against Variability

Mar 14, 2025

Abstract:Human Activity Recognition (HAR) using wearable inertial measurement unit (IMU) sensors can revolutionize healthcare by enabling continual health monitoring, disease prediction, and routine recognition. Despite the high accuracy of Deep Learning (DL) HAR models, their robustness to real-world variabilities remains untested, as they have primarily been trained and tested on limited lab-confined data. In this study, we isolate subject, device, position, and orientation variability to determine their effect on DL HAR models and assess the robustness of these models in real-world conditions. We evaluated the DL HAR models using the HARVAR and REALDISP datasets, providing a comprehensive discussion on the impact of variability on data distribution shifts and changes in model performance. Our experiments measured shifts in data distribution using Maximum Mean Discrepancy (MMD) and observed DL model performance drops due to variability. We concur that studied variabilities affect DL HAR models differently, and there is an inverse relationship between data distribution shifts and model performance. The compounding effect of variability was analyzed, and the implications of variabilities in real-world scenarios were highlighted. MMD proved an effective metric for calculating data distribution shifts and explained the drop in performance due to variabilities in HARVAR and REALDISP datasets. Combining our understanding of variability with evaluating its effects will facilitate the development of more robust DL HAR models and optimal training techniques. Allowing Future models to not only be assessed based on their maximum F1 score but also on their ability to generalize effectively

A State-of-the-Art Review of Computational Models for Analyzing Longitudinal Wearable Sensor Data in Healthcare

Jul 31, 2024Abstract:Wearable devices are increasingly used as tools for biomedical research, as the continuous stream of behavioral and physiological data they collect can provide insights about our health in everyday contexts. Long-term tracking, defined in the timescale of months of year, can provide insights of patterns and changes as indicators of health changes. These insights can make medicine and healthcare more predictive, preventive, personalized, and participative (The 4P's). However, the challenges in modeling, understanding and processing longitudinal data are a significant barrier to their adoption in research studies and clinical settings. In this paper, we review and discuss three models used to make sense of longitudinal data: routines, rhythms and stability metrics. We present the challenges associated with the processing and analysis of longitudinal wearable sensor data, with a special focus on how to handle the different temporal dynamics at various granularities. We then discuss current limitations and identify directions for future work. This review is essential to the advancement of computational modeling and analysis of longitudinal sensor data for pervasive healthcare.

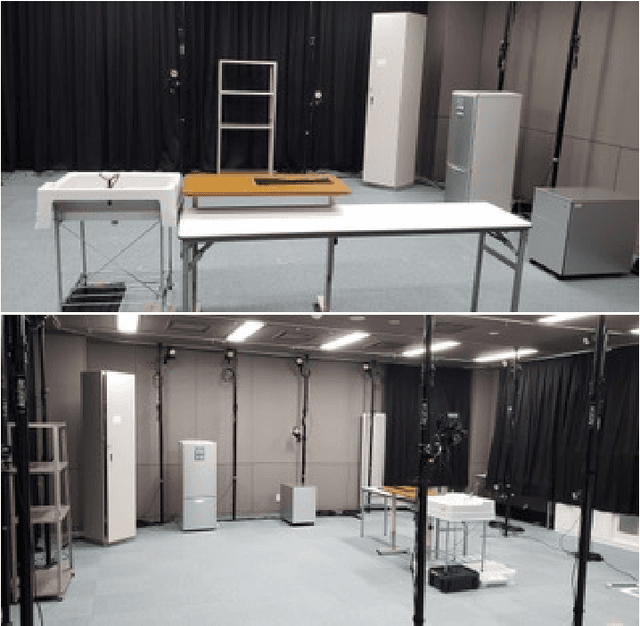

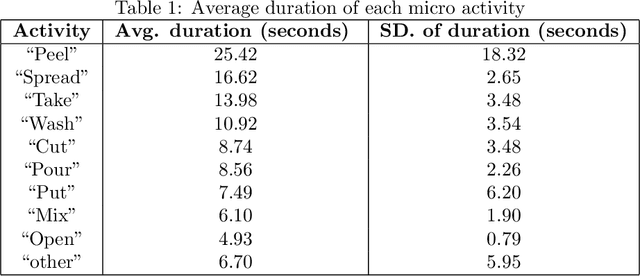

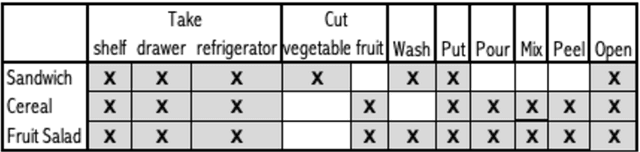

A dataset for complex activity recognition withmicro and macro activities in a cooking scenario

Jun 18, 2020

Abstract:Complex activity recognition can benefit from understanding the steps that compose them. Current datasets, however, are annotated with one label only, hindering research in this direction. In this paper, we describe a new dataset for sensor-based activity recognition featuring macro and micro activities in a cooking scenario. Three sensing systems measured simultaneously, namely a motion capture system, tracking 25 points on the body; two smartphone accelerometers, one on the hip and the other one on the forearm; and two smartwatches one on each wrist. The dataset is labeled for both the recipes (macro activities) and the steps (micro activities). We summarize the results of a baseline classification using traditional activity recognition pipelines. The dataset is designed to be easily used to test and develop activity recognition approaches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge