Pape M. Sylla

Generate and Verify: Semantically Meaningful Formal Analysis of Neural Network Perception Systems

Dec 16, 2020

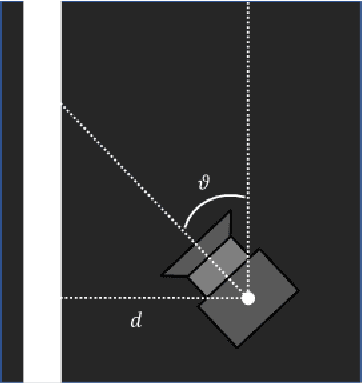

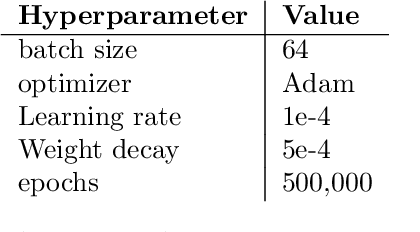

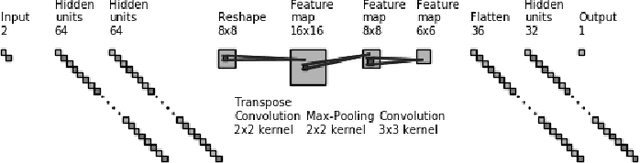

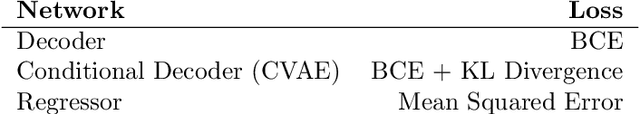

Abstract:Testing remains the primary method to evaluate the accuracy of neural network perception systems. Prior work on the formal verification of neural network perception models has been limited to notions of local adversarial robustness for classification with respect to individual image inputs. In this work, we propose a notion of global correctness for neural network perception models performing regression with respect to a generative neural network with a semantically meaningful latent space. That is, against an infinite set of images produced by a generative model over an interval of its latent space, we employ neural network verification to prove that the model will always produce estimates within some error bound of the ground truth. Where the perception model fails, we obtain semantically meaningful counter-examples which carry information on concrete states of the system of interest that can be used programmatically without human inspection of corresponding generated images. Our approach, Generate and Verify, provides a new technique to gather insight into the failure cases of neural network perception systems and provide meaningful guarantees of correct behavior in safety critical applications.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge