Paolo Medici

Informative Rays Selection for Few-Shot Neural Radiance Fields

Dec 29, 2023

Abstract: Neural Radiance Fields (NeRF) have recently emerged as a powerful method for image-based 3D reconstruction, but the lengthy per-scene optimization limits their practical usage, especially in resource-constrained settings. Existing approaches solve this issue by reducing the number of input views and regularizing the learned volumetric representation with either complex losses or additional inputs from other modalities. In this paper, we present KeyNeRF, a simple yet effective method for training NeRF in few-shot scenarios by focusing on key informative rays. Such rays are first selected at camera level by a view selection algorithm that promotes baseline diversity while guaranteeing scene coverage, then at pixel level by sampling from a probability distribution based on local image entropy. Our approach performs favorably against state-of-the-art methods, while requiring minimal changes to existing NeRF codebases.
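The pixel-level step can be illustrated with a minimal sketch of entropy-weighted pixel sampling. It assumes a grayscale image stored as a list of rows with 8-bit intensities, a square window of radius 1, 16 intensity bins, and a small constant `eps` so that flat regions keep a nonzero probability. The names `local_entropy` and `sample_pixels` and all parameter values are hypothetical, chosen for illustration; this is not the KeyNeRF implementation.

```python
import math
import random
from collections import Counter


def local_entropy(image, radius=1, bins=16):
    """Shannon entropy (bits) of quantized intensities in a (2r+1)x(2r+1) window.

    Hypothetical helper: windows are clipped at the image borders.
    """
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            counts = Counter()
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < h and 0 <= xx < w:
                        # Quantize 0..255 intensities into `bins` histogram bins.
                        counts[image[yy][xx] * bins // 256] += 1
            n = sum(counts.values())
            out[y][x] = -sum(c / n * math.log2(c / n) for c in counts.values())
    return out


def sample_pixels(image, k, eps=1e-3, seed=0):
    """Draw k pixel coordinates (with replacement) with probability
    proportional to local entropy plus eps (assumed weighting)."""
    ent = local_entropy(image)
    coords = [(y, x) for y in range(len(image)) for x in range(len(image[0]))]
    # eps keeps textureless pixels reachable instead of excluding them entirely.
    weights = [ent[y][x] + eps for y, x in coords]
    rng = random.Random(seed)
    return rng.choices(coords, weights=weights, k=k)
```

In a NeRF training loop, each sampled `(y, x)` would become one ray for the current batch, so textured regions are visited more often than uniform ones.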

Learning Neural Radiance Fields from Multi-View Geometry

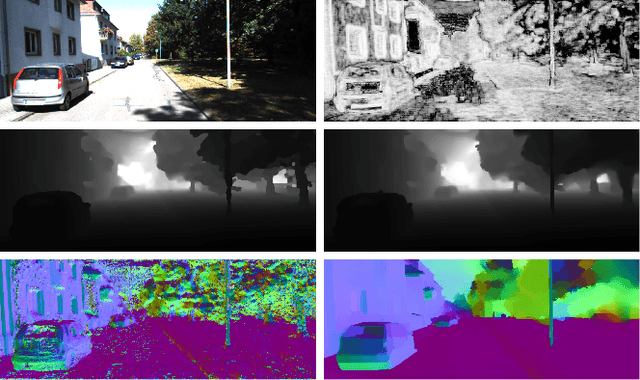

Oct 24, 2022

Abstract: We present a framework, called MVG-NeRF, that combines classical Multi-View Geometry algorithms and Neural Radiance Fields (NeRF) for image-based 3D reconstruction. NeRF has revolutionized the field of implicit 3D representations, mainly due to a differentiable volumetric rendering formulation that enables high-quality and geometry-aware novel view synthesis. However, the underlying geometry of the scene is not explicitly constrained during training, thus leading to noisy and incorrect results when extracting a mesh with marching cubes. To this end, we propose to leverage pixelwise depths and normals from a classical 3D reconstruction pipeline as geometric priors to guide NeRF optimization. Such priors are used as pseudo-ground truth during training to improve the quality of the estimated underlying surface. Moreover, each pixel is weighted by a confidence value based on the forward-backward reprojection error for additional robustness. Experimental results on real-world data demonstrate the effectiveness of this approach in obtaining clean 3D meshes from images, while maintaining competitive performance in novel view synthesis.
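A forward-backward reprojection check of the kind mentioned above can be sketched as follows. This version is built on several assumptions: a pinhole camera model with intrinsics `(fx, fy, cx, cy)` shared by both views, a relative pose `(R, t)` mapping reference-camera coordinates to source-camera coordinates, and a callable `depth_src(u, v)` standing in for a lookup into the source depth map. The Gaussian mapping from error to confidence and its `sigma` are illustrative choices, not the weighting used in MVG-NeRF, and all function names are hypothetical.

```python
import math


def backproject(u, v, depth, K):
    """Pixel (u, v) with z-depth -> 3D point in that camera's frame."""
    fx, fy, cx, cy = K
    return (depth * (u - cx) / fx, depth * (v - cy) / fy, depth)


def project(X, K):
    """3D point in camera frame -> pixel coordinates."""
    fx, fy, cx, cy = K
    x, y, z = X
    return (fx * x / z + cx, fy * y / z + cy)


def matvec(R, X):
    return tuple(sum(R[i][j] * X[j] for j in range(3)) for i in range(3))


def transpose(R):
    return [[R[j][i] for j in range(3)] for i in range(3)]


def fb_reprojection_error(u, v, d_ref, K, R, t, depth_src):
    """Round-trip pixel error: reference -> source -> reference."""
    # Forward: reference pixel and depth -> 3D -> source camera -> source pixel.
    X_ref = backproject(u, v, d_ref, K)
    X_src = tuple(a + b for a, b in zip(matvec(R, X_ref), t))
    u_s, v_s = project(X_src, K)
    # Backward: source depth at that pixel -> 3D -> reference camera -> pixel.
    X_src2 = backproject(u_s, v_s, depth_src(u_s, v_s), K)
    X_ref2 = matvec(transpose(R), tuple(a - b for a, b in zip(X_src2, t)))
    u_r, v_r = project(X_ref2, K)
    return math.hypot(u - u_r, v - v_r)


def confidence(err, sigma=1.0):
    """Assumed Gaussian mapping: zero error -> 1, large error -> close to 0."""
    return math.exp(-err ** 2 / (2 * sigma ** 2))
```

With consistent depths the round trip returns to the starting pixel and the confidence is close to 1. A disagreeing source depth shifts the returned pixel and lowers the weight of that pixel's depth and normal priors.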

Revisiting PatchMatch Multi-View Stereo for Urban 3D Reconstruction

Jul 18, 2022

Abstract: In this paper, a complete pipeline for image-based 3D reconstruction of urban scenarios is proposed, based on PatchMatch Multi-View Stereo (MVS). Input images are first fed into an off-the-shelf visual SLAM system to extract camera poses and sparse keypoints, which are used to initialize PatchMatch optimization. Then, pixelwise depths and normals are iteratively computed in a multi-scale framework with a novel depth-normal consistency loss term and a global refinement algorithm to balance the inherently local nature of PatchMatch. Finally, a large-scale point cloud is generated by back-projecting multi-view consistent estimates in 3D. The proposed approach is carefully evaluated against both classical MVS algorithms and monocular depth networks on the KITTI dataset, showing state-of-the-art performance.
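The final back-projection step can be sketched for a single view as follows. It assumes a pinhole model with intrinsics `(fx, fy, cx, cy)`, depth stored as z-depth (not distance along the ray), a camera-to-world rotation `R_c2w` and camera center `C`, and a boolean `mask` marking estimates that passed a multi-view consistency check computed elsewhere. The function name and argument layout are hypothetical.

```python
def backproject_depth_map(depth, mask, K, R_c2w, C):
    """Lift masked depth-map pixels to 3D world points (one view).

    depth: list of rows of z-depths; mask: same shape, True = keep.
    """
    fx, fy, cx, cy = K
    points = []
    for v, row in enumerate(depth):
        for u, d in enumerate(row):
            if not mask[v][u] or d <= 0:
                continue  # skip estimates that failed the consistency check
            # Pixel -> camera coordinates via the inverse pinhole model.
            X_cam = (d * (u - cx) / fx, d * (v - cy) / fy, d)
            # Camera -> world: rotate, then translate by the camera center.
            X_w = tuple(
                sum(R_c2w[i][j] * X_cam[j] for j in range(3)) + C[i]
                for i in range(3)
            )
            points.append(X_w)
    return points
```

Running this for every view and concatenating the results yields the merged point cloud. Filtering before lifting keeps inconsistent depths out of the cloud, so no 3D outlier removal is needed afterwards in this sketch.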

Efficient View Clustering and Selection for City-Scale 3D Reconstruction

Jul 18, 2022

Abstract: Image datasets have been steadily growing in size, harming the feasibility and efficiency of large-scale 3D reconstruction methods. In this paper, a novel approach for scaling Multi-View Stereo (MVS) algorithms up to arbitrarily large collections of images is proposed. Specifically, the problem of reconstructing the 3D model of an entire city is targeted, starting from a set of videos acquired by a moving vehicle equipped with several high-resolution cameras. Initially, the presented method exploits an approximately uniform distribution of poses and geometry and builds a set of overlapping clusters. Then, an Integer Linear Programming (ILP) problem is formulated for each cluster to select an optimal subset of views that guarantees both visibility and matchability. Finally, local point clouds for each cluster are separately computed and merged. Since clustering is independent of pairwise visibility information, the proposed algorithm runs faster than existing methods and allows for massive parallelization. Extensive tests on urban data are discussed to show the effectiveness and scalability of this approach.
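The per-cluster view selection is formulated as an ILP in the abstract. As a rough stand-in, the sketch below swaps in a different technique: a greedy multi-cover heuristic. It approximates the same kind of constraint without an ILP solver. Here "visibility" is assumed to mean which sparse points each view observes, and "matchability" is approximated by requiring every point to be seen by at least `min_views` selected views. These definitions, the function name, and the data layout are all hypothetical. Unlike an ILP solver, the result is not guaranteed to be optimal.

```python
def greedy_view_selection(visibility, min_views=2):
    """Greedy multi-cover: pick views until each point is seen min_views times.

    visibility: dict mapping view id -> set of point ids seen by that view.
    Returns (selected view ids in pick order, set of under-covered points).
    """
    all_points = set().union(*visibility.values())
    # Number of additional selected views each point still needs.
    need = {p: min_views for p in all_points}
    remaining = dict(visibility)
    selected = []

    def gain(view):
        # How many still-under-covered points this view would help.
        return sum(1 for p in remaining[view] if need[p] > 0)

    while remaining:
        # Sorting makes ties resolve to the smallest view id.
        best = max(sorted(remaining), key=gain)
        if gain(best) == 0:
            break  # no remaining view improves coverage
        selected.append(best)
        for p in remaining.pop(best):
            if need[p] > 0:
                need[p] -= 1
    uncovered = {p for p, n in need.items() if n > 0}
    return selected, uncovered
```

Points returned in `uncovered` are observed by fewer than `min_views` views in the whole cluster, so no selection could satisfy them. An exact ILP formulation would likewise have to relax or drop those constraints.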
