Oluwaseun Joseph Aribido

Example Forgetting: A Novel Approach to Explain and Interpret Deep Neural Networks in Seismic Interpretation

Feb 24, 2023

Abstract: In recent years, deep neural networks have significantly impacted the seismic interpretation process. Due to their simple implementation and low interpretation costs, deep neural networks are an attractive component for the common interpretation pipeline. However, neural networks are frequently met with distrust because they tend to produce semantically incorrect outputs when exposed to sections the model was not trained on. We address this issue by explaining model behaviour and improving generalization properties through example forgetting. First, we introduce a method that effectively relates semantically incorrect predictions to their respective positions within the neural network representation manifold. More concretely, our method tracks how models "forget" seismic reflections during training and establishes a connection to the decision boundary proximity of the target class. Second, we use our analysis technique to identify frequently forgotten regions within the training volume and augment the training set with state-of-the-art style transfer techniques from computer vision. We show that our method improves the segmentation performance on underrepresented classes while significantly reducing the forgotten regions in the F3 volume in the Netherlands.

Explaining Deep Models through Forgettable Learning Dynamics

Jan 10, 2023

Abstract: Even though deep neural networks have shown tremendous success in countless applications, explaining model behaviour or predictions is an open research problem. In this paper, we address this issue with a simple yet effective method that analyses the learning dynamics of deep neural networks in semantic segmentation tasks. Specifically, we visualize the learning behaviour during training by tracking how often samples are learned and forgotten in subsequent training epochs. This further allows us to derive important information about the proximity to the class decision boundary and identify regions that pose a particular challenge to the model. Inspired by this phenomenon, we present a novel segmentation method that actively uses this information to alter the data representation within the model by increasing the variety of difficult regions. Finally, we show that our method consistently reduces the number of regions that are forgotten frequently. We further evaluate our method in terms of segmentation performance.
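The idea of tracking how often samples are learned and then forgotten can be illustrated with a minimal sketch. It assumes a per-pixel record of whether the prediction was correct at each epoch; the function name `count_forgetting_events` and the toy `history` array are hypothetical and are not taken from the paper, whose exact bookkeeping is not given in the abstract.

```python
import numpy as np

def count_forgetting_events(correct_per_epoch):
    """Count, per pixel, how often a correct prediction turns incorrect.

    correct_per_epoch: boolean array of shape (epochs, height, width),
    True where the prediction matched the label at that epoch.
    (Assumed input format, chosen for illustration.)
    """
    correct = np.asarray(correct_per_epoch, dtype=bool)
    # A forgetting event: correct at epoch t, incorrect at epoch t + 1.
    forgotten = correct[:-1] & ~correct[1:]
    return forgotten.sum(axis=0)

# Toy example: 4 epochs of a 1 x 3 "image".
history = np.array([
    [[True, False, True]],
    [[False, False, True]],
    [[True, True, True]],
    [[False, True, True]],
])
heat_map = count_forgetting_events(history)
```

In this toy case the first pixel is forgotten twice and the other two never, so the resulting map highlights the first pixel as the difficult region.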

Self-Supervised Delineation of Geological Structures using Orthogonal Latent Space Projection

Aug 22, 2021

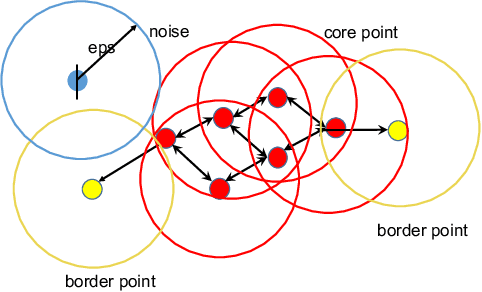

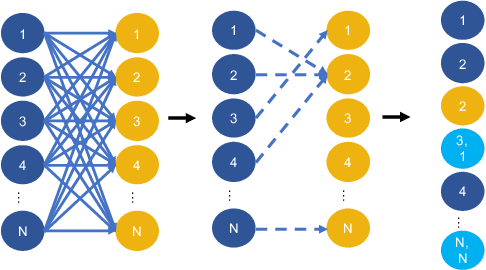

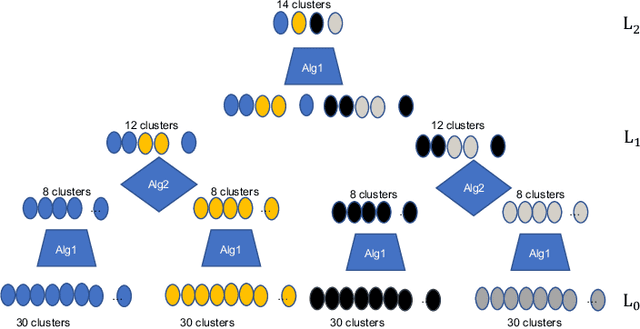

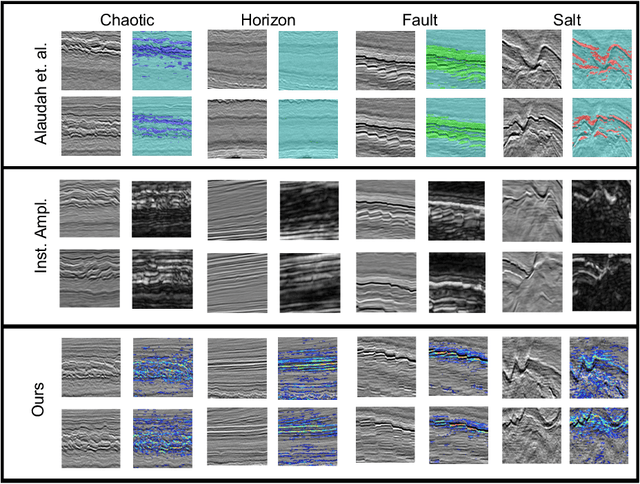

Abstract: We developed two machine learning frameworks that could assist in automated litho-stratigraphic interpretation of seismic volumes without any manual labeling by an experienced seismic interpreter. The first framework is an unsupervised hierarchical clustering model that divides seismic images from a volume into a number of clusters determined by the algorithm. The clustering framework uses a combination of density and hierarchical techniques to determine the size and homogeneity of the clusters. The second framework is a self-supervised deep learning framework that labels regions of geological interest in seismic images. It projects the latent space of an encoder-decoder architecture onto two orthogonal subspaces, from which it learns to delineate regions of interest in the seismic images. To demonstrate an application of both frameworks, a seismic volume was clustered into various contiguous clusters, from which four clusters were selected based on distinct seismic patterns: horizons, faults, salt domes and chaotic structures. Images from the selected clusters are used to train the encoder-decoder network. The output of the encoder-decoder network is a probability map giving the probability that an amplitude reflection event belongs to an interesting geological structure. The structures are delineated using the probability map. The delineated images are further used to post-train a segmentation model to extend our results to full vertical sections. The results on vertical sections show that we can factorize a seismic volume into its corresponding structural components. Lastly, we show that our deep learning framework can be modeled as an attribute extractor; we compare our attribute results with various existing attributes in the literature and demonstrate competitive performance.
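As a rough illustration of what projecting a latent space onto two orthogonal subspaces means, the sketch below splits latent vectors into a component inside a chosen subspace and a component in its orthogonal complement. This is plain linear algebra under stated assumptions, not the paper's learned projection: the function `split_orthogonal`, the random `basis`, and the latent dimensions are all hypothetical.

```python
import numpy as np

def split_orthogonal(z, basis):
    """Split latent vectors into two mutually orthogonal components.

    z:     array of shape (n, d), one latent vector per row.
    basis: array of shape (d, k) whose columns span the first subspace.
    Returns (inside, outside) with inside + outside == z.
    """
    # Orthonormalize the basis so that q @ q.T is an orthogonal projector.
    q, _ = np.linalg.qr(basis)
    inside = z @ q @ q.T      # component within span(basis)
    outside = z - inside      # component in the orthogonal complement
    return inside, outside

rng = np.random.default_rng(0)
z = rng.normal(size=(5, 8))        # 5 latent vectors of dimension 8
basis = rng.normal(size=(8, 3))    # a 3-dimensional subspace
inside, outside = split_orthogonal(z, basis)
```

The two components sum back to the original latent vector and have zero inner product, which is the property that lets each subspace carry a separate part of the representation.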

Self-Supervised Annotation of Seismic Images using Latent Space Factorization

Sep 26, 2020

Abstract: Annotating seismic data is expensive, laborious and subjective due to the number of years required for seismic interpreters to attain proficiency in interpretation. In this paper, we develop a framework that automates the annotation of pixels in a seismic image to delineate geological structural elements, given image-level labels assigned to each image. Our framework factorizes the latent space of a deep encoder-decoder network by projecting the latent space onto learned subspaces. Using constraints in the pixel space, the seismic image is further factorized to reveal confidence values on pixels associated with the geological element of interest. Details of the annotated image are provided for analysis, and a qualitative comparison is made with similar frameworks.
