Ohad Einav

A Market for Accuracy: Classification under Competition

Feb 25, 2025

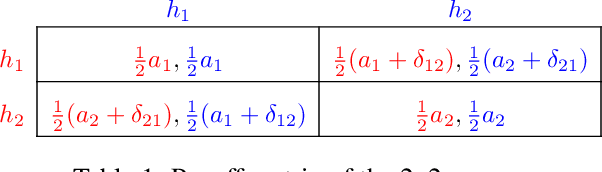

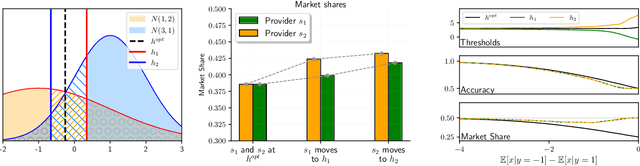

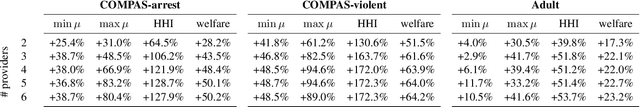

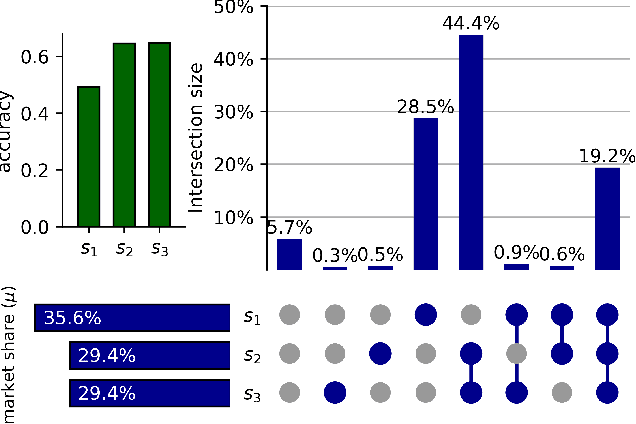

Abstract:Machine learning models play a key role for service providers looking to gain market share in consumer markets. However, traditional learning approaches do not take into account the existence of additional providers, who compete with each other for consumers. Our work aims to study learning in this market setting, as it affects providers, consumers, and the market itself. We begin by analyzing such markets through the lens of the learning objective, and show that accuracy cannot be the only consideration. We then propose a method for classification under competition, so that a learner can maximize market share in the presence of competitors. We show that our approach benefits the providers as well as the consumers, and find that the timing of market entry and model updates can be crucial. We display the effectiveness of our approach across a range of domains, from simple distributions to noisy datasets, and show that the market as a whole remains stable by converging quickly to an equilibrium.

Incentivizing Quality Text Generation via Statistical Contracts

Jun 17, 2024Abstract:While the success of large language models (LLMs) increases demand for machine-generated text, current pay-per-token pricing schemes create a misalignment of incentives known in economics as moral hazard: Text-generating agents have strong incentive to cut costs by preferring a cheaper model over the cutting-edge one, and this can be done "behind the scenes" since the agent performs inference internally. In this work, we approach this issue from an economic perspective, by proposing a pay-for-performance, contract-based framework for incentivizing quality. We study a principal-agent game where the agent generates text using costly inference, and the contract determines the principal's payment for the text according to an automated quality evaluation. Since standard contract theory is inapplicable when internal inference costs are unknown, we introduce cost-robust contracts. As our main theoretical contribution, we characterize optimal cost-robust contracts through a direct correspondence to optimal composite hypothesis tests from statistics, generalizing a result of Saig et al. (NeurIPS'23). We evaluate our framework empirically by deriving contracts for a range of objectives and LLM evaluation benchmarks, and find that cost-robust contracts sacrifice only a marginal increase in objective value compared to their cost-aware counterparts.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge