Nima Moradi

Predicting Vulnerability to Malware Using Machine Learning Models: A Study on Microsoft Windows Machines

Jan 05, 2025

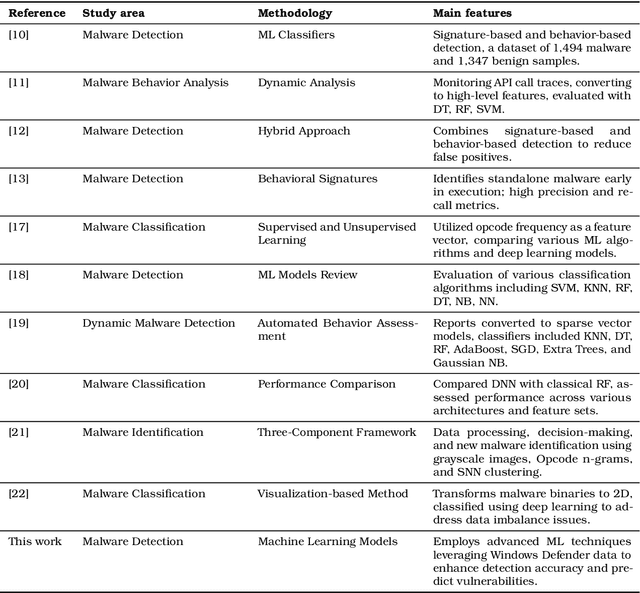

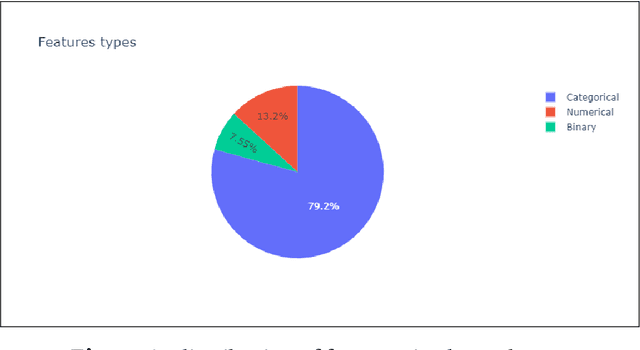

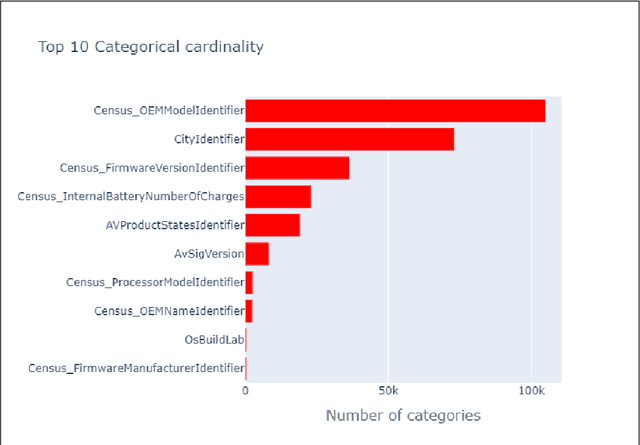

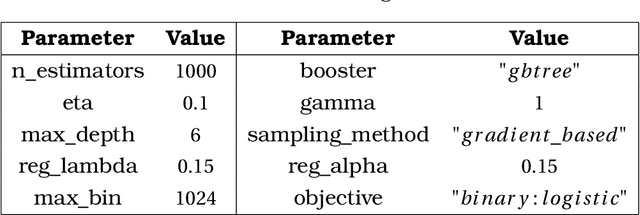

Abstract: In an era of escalating cyber threats, malware poses significant risks to individuals and organizations, potentially leading to data breaches, system failures, and substantial financial losses. This study addresses the urgent need for effective malware detection strategies by leveraging Machine Learning (ML) techniques on extensive datasets collected from Microsoft Windows Defender. Our research aims to develop an advanced ML model that accurately predicts malware vulnerabilities based on the specific conditions of individual machines. Moving beyond traditional signature-based detection methods, we incorporate historical data and innovative feature engineering to enhance detection capabilities. This study makes several contributions: first, it advances existing malware detection techniques by employing sophisticated ML algorithms; second, it utilizes a large-scale, real-world dataset to ensure the applicability of findings; third, it highlights the importance of feature analysis in identifying key indicators of malware infections; and fourth, it proposes models that can be adapted for enterprise environments, offering a proactive approach to safeguarding extensive networks against emerging threats. We aim to improve cybersecurity resilience, providing critical insights for practitioners in the field and addressing the evolving challenges posed by malware in a digital landscape. Finally, discussions on results, insights, and conclusions are presented.

Feed-Forward Neural Networks as a Mixed-Integer Program

Feb 09, 2024

Abstract: Deep neural networks (DNNs) are widely studied in various applications. A DNN consists of layers of neurons that compute affine combinations, apply nonlinear operations, and produce corresponding activations. The rectified linear unit (ReLU) is a typical nonlinear operator, outputting the max of its input and zero. In scenarios like max pooling, where multiple input values are involved, a fixed-parameter DNN can be modeled as a mixed-integer program (MIP). This formulation, with continuous variables representing unit outputs and binary variables for ReLU activation, finds applications across diverse domains. This study explores the formulation of trained ReLU neurons as MIP and applies MIP models for training neural networks (NNs). Specifically, it investigates interactions between MIP techniques and various NN architectures, including binary DNNs (employing step activation functions) and binarized DNNs (with weights and activations limited to $-1,0,+1$). The research focuses on training and evaluating proposed approaches through experiments on handwritten digit classification models. The comparative study assesses the performance of trained ReLU NNs, shedding light on the effectiveness of MIP formulations in enhancing training processes for NNs.
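The abstract does not spell out the constraints, so the following is only a minimal sketch of one common way to encode a single trained ReLU unit $y = \max(0, a)$ with a continuous output and a binary activation variable: the standard big-M encoding. It assumes known bounds `lower <= a <= upper` on the pre-activation $a$, with `lower < 0 < upper`. The function names, the bounds, and the grid search are illustrative assumptions, not the paper's formulation or code.

```python
def relu_bigm_feasible(a, y, z, lower, upper, tol=1e-9):
    """Check the big-M constraints for one ReLU unit y = max(0, a).

    a      : pre-activation value (affine combination of the inputs)
    y      : candidate continuous output of the unit
    z      : candidate binary activation indicator (1 = unit active)
    lower,
    upper  : assumed bounds on a, with lower < 0 < upper
    """
    return (
        z in (0, 1)
        and y >= -tol                       # y >= 0
        and y >= a - tol                    # y >= a
        and y <= a - lower * (1 - z) + tol  # y <= a when z = 1
        and y <= upper * z + tol            # y <= 0 when z = 0
    )


def feasible_outputs(a, lower, upper, grid):
    """Return every grid value y that is feasible for some binary z."""
    return sorted(
        {y for y in grid for z in (0, 1)
         if relu_bigm_feasible(a, y, z, lower, upper)}
    )


if __name__ == "__main__":
    lower, upper = -2.0, 3.0
    grid = [i / 2 for i in range(-4, 7)]  # -2.0, -1.5, ..., 3.0
    for a in (-2.0, -0.5, 0.0, 1.0, 3.0):
        print(a, feasible_outputs(a, lower, upper, grid))
```

For each tested pre-activation, the only output that satisfies the constraints is the ReLU value itself, which is what lets a MIP solver reason about a fixed-parameter network exactly. In practice the quality of the bounds matters: looser `lower` and `upper` values give weaker relaxations.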
