Nihar Talele

Mesh-based Tools to Analyze Deep Reinforcement Learning Policies for Underactuated Biped Locomotion

Mar 29, 2019

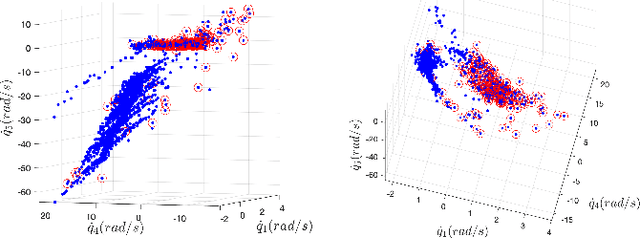

Abstract: In this paper, we present a mesh-based approach to analyze the stability and robustness of policies obtained via deep reinforcement learning for various biped gaits of a five-link planar model. Intuitively, one would expect that including perturbations and/or other types of noise during training would result in a more robust control policy. However, one would like to have a quantitative and computationally efficient means of evaluating the degree to which this is so. Rather than relying on Monte Carlo simulations, our goal is to provide more sophisticated tools to assess the robustness properties of such policies. Our work is motivated by the twin hypotheses that contraction of dynamics, when achievable, can simplify control and that control policies obtained via deep learning may therefore exhibit a tendency to contract to lower-dimensional manifolds within the full state space. The tractability of our mesh-based tools in this work provides some evidence that this may be so.

Toward Efficient and Robust Biped Walking Optimization

Jul 26, 2018

Abstract: Practical bipedal robot locomotion needs to be both energy efficient and robust to variability and uncertainty. In this paper, we build upon recent works in trajectory optimization for robot locomotion with two primary goals. First, we wish to demonstrate the importance of (a) considering and quantifying not only energy efficiency but also robustness of gaits, and (b) optimization not only of nominal motion trajectories but also of robot design parameters and feedback control policies. As a second, complementary focus, we present results from optimization studies on a 5-link planar walking model, to provide preliminary data on particular trade-offs and general trends in improving efficiency versus robustness. In addressing important, open challenges, we focus in particular on discussions of the effects of choices made (a) in formulating what is always, necessarily only an approximate optimization, in choosing metrics for performance, and (b) in structuring and tuning feedback control.
