Necdet Gurkan

Replicating Human Social Perception in Generative AI: Evaluating the Valence-Dominance Model

Mar 05, 2025

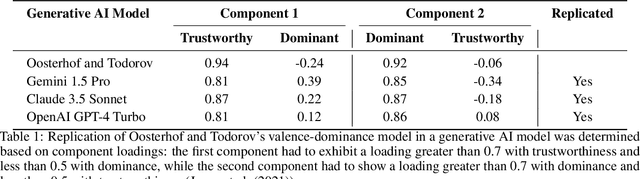

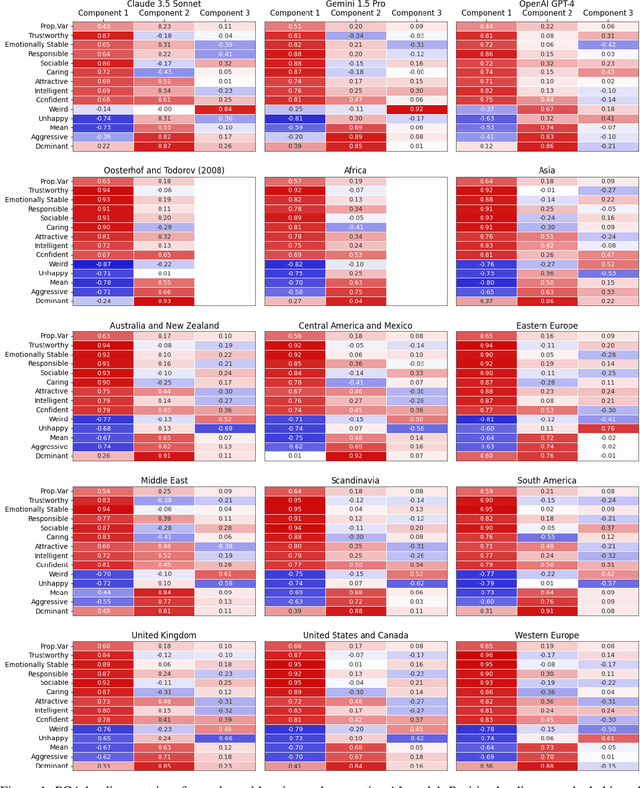

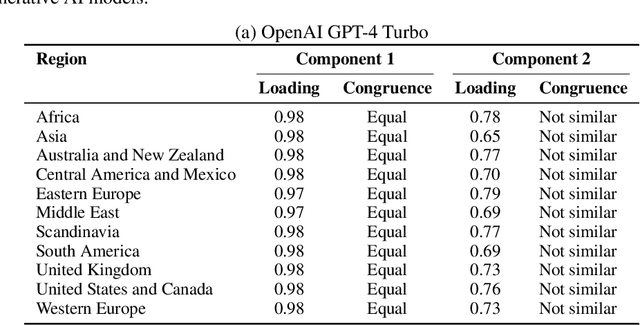

Abstract: As artificial intelligence (AI) continues to advance, particularly in generative models, an open question is whether these systems can replicate foundational models of human social perception. A well-established framework in social cognition suggests that social judgments are organized along two primary dimensions: valence (e.g., trustworthiness, warmth) and dominance (e.g., power, assertiveness). This study examines whether multimodal generative AI systems can reproduce this valence-dominance structure when evaluating facial images, and how their representations align with those observed across world regions. Using principal component analysis (PCA), we found that the extracted dimensions closely mirrored the theoretical structure of valence and dominance, with trait loadings aligning with established definitions. However, many world regions and generative AI models also exhibited a third component, the nature and significance of which warrant further investigation. These findings demonstrate that multimodal generative AI systems can replicate key aspects of human social perception, raising important questions about their implications for AI-driven decision-making and human-AI interactions.
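To make the PCA step concrete, the following is a minimal sketch of how trait loadings can be extracted from a faces-by-traits rating matrix. It is not the authors' pipeline: the ratings are synthetic, the four trait names are taken only from the examples in the abstract, and the function name `pca_loadings`, the noise level, and the sample size are all assumptions made for illustration.

```python
import numpy as np

def pca_loadings(ratings):
    """Return (loadings, explained_variance_ratio) for a faces x traits matrix."""
    # Standardize each trait so that PCA operates on the correlation matrix.
    z = (ratings - ratings.mean(axis=0)) / ratings.std(axis=0, ddof=1)
    corr = (z.T @ z) / (z.shape[0] - 1)
    # eigh returns eigenvalues in ascending order; sort them descending.
    eigvals, eigvecs = np.linalg.eigh(corr)
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    # Loadings are eigenvectors scaled by sqrt(eigenvalue), i.e. the
    # correlation between each trait and each component.
    loadings = eigvecs * np.sqrt(np.clip(eigvals, 0.0, None))
    return loadings, eigvals / eigvals.sum()

# Synthetic data (assumption): two latent factors drive four illustrative traits.
rng = np.random.default_rng(0)
n_faces = 200
valence = rng.standard_normal(n_faces)
dominance = rng.standard_normal(n_faces)

def noise():
    return 0.3 * rng.standard_normal(n_faces)

TRAITS = ["trustworthy", "warm", "powerful", "assertive"]
ratings = np.column_stack([
    valence + noise(),    # trustworthy
    valence + noise(),    # warm
    dominance + noise(),  # powerful
    dominance + noise(),  # assertive
])

loadings, ratio = pca_loadings(ratings)
for name, row in zip(TRAITS, loadings):
    print(f"{name:12s} PC1={row[0]:+.2f} PC2={row[1]:+.2f}")
print("explained variance ratio:", np.round(ratio, 3))
```

In this toy setup the first two components should absorb most of the variance, with the valence-like and dominance-like traits loading on separate components. The sign and ordering of the components are arbitrary, which is why loadings are usually interpreted by which traits cluster together rather than by their sign.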

Evaluation of Human and Machine Face Detection using a Novel Distinctive Human Appearance Dataset

Nov 02, 2021

Abstract: Face detection is a long-standing challenge in the field of computer vision, with the ultimate goal of accurately localizing human faces in unconstrained environments. Making these systems accurate involves significant technical hurdles due to confounding factors related to pose, image resolution, illumination, occlusion, and viewpoint [44]. Nevertheless, with recent developments in machine learning, face-detection systems, largely built on data-driven deep-learning models, have achieved extraordinary accuracy [70]. Though encouraging, a critical aspect that limits both the performance and the social responsibility of deployed face-detection systems is the inherent diversity of human appearance. Every human appearance reflects something unique about a person, including their heritage, identity, experiences, and visible manifestations of self-expression. However, there are questions about how well face-detection systems perform when faced with varying face size and shape, skin color, body modification, and body ornamentation. To investigate these questions, we collected the Distinctive Human Appearance dataset, an image set representing appearances that occur with low frequency and tend to be undersampled in face datasets. We then evaluated current state-of-the-art face-detection models on their ability to detect faces in these images. The evaluation results show that face-detection algorithms do not generalize well to these diverse appearances. Evaluating and characterizing the state of current face-detection models will accelerate research and development towards creating fairer and more accurate face-detection systems.
