N. Sukumar

A Wachspress-based transfinite formulation for exactly enforcing Dirichlet boundary conditions on convex polygonal domains in physics-informed neural networks

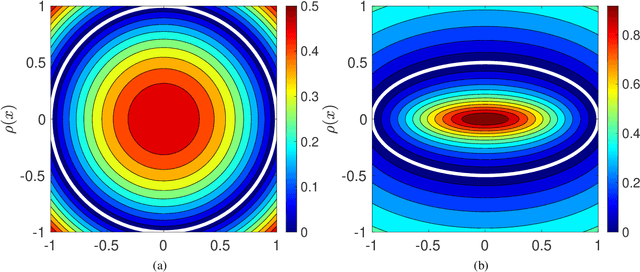

Jan 05, 2026Abstract:In this paper, we present a Wachspress-based transfinite formulation on convex polygonal domains for exact enforcement of Dirichlet boundary conditions in physics-informed neural networks. This approach leverages prior advances in geometric design such as blending functions and transfinite interpolation over convex domains. For prescribed Dirichlet boundary function $\mathcal{B}$, the transfinite interpolant of $\mathcal{B}$, $g : \bar P \to C^0(\bar P)$, $\textit{lifts}$ functions from the boundary of a two-dimensional polygonal domain to its interior. The trial function is expressed as the difference between the neural network's output and the extension of its boundary restriction into the interior of the domain, with $g$ added to it. This ensures kinematic admissibility of the trial function in the deep Ritz method. Wachspress coordinates for an $n$-gon are used in the transfinite formula, which generalizes bilinear Coons transfinite interpolation on rectangles to convex polygons. The neural network trial function has a bounded Laplacian, thereby overcoming a limitation in a previous contribution where approximate distance functions were used to exactly enforce Dirichlet boundary conditions. For a point $\boldsymbol{x} \in \bar{P}$, Wachspress coordinates, $\boldsymbolλ : \bar P \to [0,1]^n$, serve as a geometric feature map for the neural network: $\boldsymbolλ$ encodes the boundary edges of the polygonal domain. This offers a framework for solving problems on parametrized convex geometries using neural networks. The accuracy of physics-informed neural networks and deep Ritz is assessed on forward, inverse, and parametrized geometric Poisson boundary-value problems.

Variational formulation based on duality to solve partial differential equations: Use of B-splines and machine learning approximants

Dec 02, 2024

Abstract:Many partial differential equations (PDEs) such as Navier--Stokes equations in fluid mechanics, inelastic deformation in solids, and transient parabolic and hyperbolic equations do not have an exact, primal variational structure. Recently, a variational principle based on the dual (Lagrange multiplier) field was proposed. The essential idea in this approach is to treat the given PDE as constraints, and to invoke an arbitrarily chosen auxiliary potential with strong convexity properties to be optimized. This leads to requiring a convex dual functional to be minimized subject to Dirichlet boundary conditions on dual variables, with the guarantee that even PDEs that do not possess a variational structure in primal form can be solved via a variational principle. The vanishing of the first variation of the dual functional is, up to Dirichlet boundary conditions on dual fields, the weak form of the primal PDE problem with the dual-to-primal change of variables incorporated. We derive the dual weak form for the linear, one-dimensional, transient convection-diffusion equation. A Galerkin discretization is used to obtain the discrete equations, with the trial and test functions chosen as linear combination of either RePU activation functions (shallow neural network) or B-spline basis functions; the corresponding stiffness matrix is symmetric. For transient problems, a space-time Galerkin implementation is used with tensor-product B-splines as approximating functions. Numerical results are presented for the steady-state and transient convection-diffusion equation, and transient heat conduction. The proposed method delivers sound accuracy for ODEs and PDEs and rates of convergence are established in the $L^2$ norm and $H^1$ seminorm for the steady-state convection-diffusion problem.

Exact imposition of boundary conditions with distance functions in physics-informed deep neural networks

Apr 17, 2021

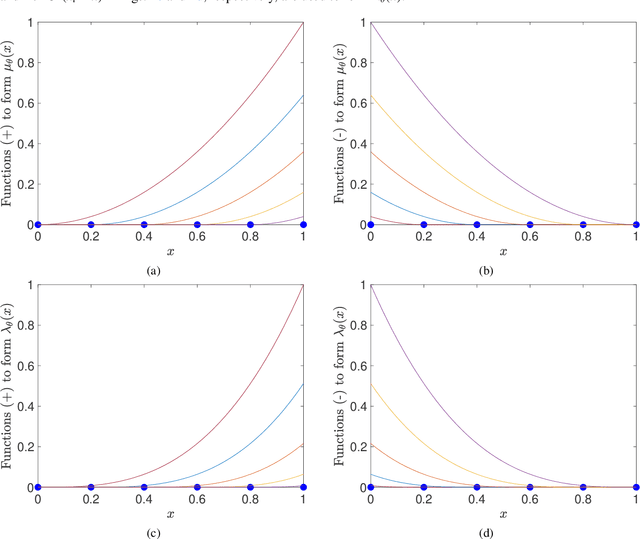

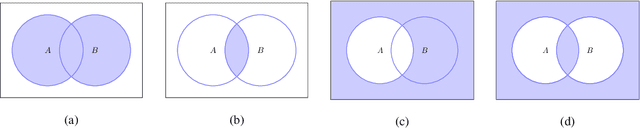

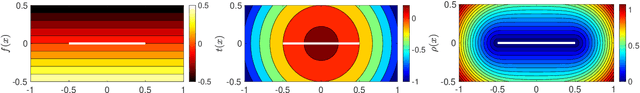

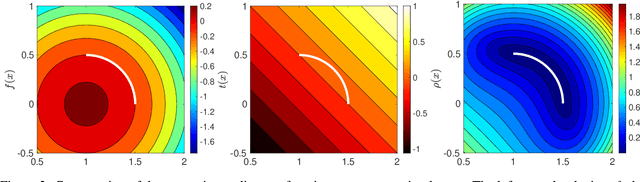

Abstract:In this paper, we introduce a new approach based on distance fields to exactly impose boundary conditions in physics-informed deep neural networks. The challenges in satisfying Dirichlet boundary conditions in meshfree and particle methods are well-known. This issue is also pertinent in the development of physics informed neural networks (PINN) for the solution of partial differential equations. We introduce geometry-aware trial functions in artifical neural networks to improve the training in deep learning for partial differential equations. To this end, we use concepts from constructive solid geometry (R-functions) and generalized barycentric coordinates (mean value potential fields) to construct $\phi$, an approximate distance function to the boundary of a domain. To exactly impose homogeneous Dirichlet boundary conditions, the trial function is taken as $\phi$ multiplied by the PINN approximation, and its generalization via transfinite interpolation is used to a priori satisfy inhomogeneous Dirichlet (essential), Neumann (natural), and Robin boundary conditions on complex geometries. In doing so, we eliminate modeling error associated with the satisfaction of boundary conditions in a collocation method and ensure that kinematic admissibility is met pointwise in a Ritz method. We present numerical solutions for linear and nonlinear boundary-value problems over domains with affine and curved boundaries. Benchmark problems in 1D for linear elasticity, advection-diffusion, and beam bending; and in 2D for the Poisson equation, biharmonic equation, and the nonlinear Eikonal equation are considered. The approach extends to higher dimensions, and we showcase its use by solving a Poisson problem with homogeneneous Dirichlet boundary conditions over the 4D hypercube. This study provides a pathway for meshfree analysis to be conducted on the exact geometry without domain discretization.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge