N. Lakmal Deshapriya

pLitterStreet: Street Level Plastic Litter Detection and Mapping

Jan 26, 2024

Abstract: Plastic pollution is a critical environmental issue, and detecting and monitoring plastic litter is crucial to mitigating its impact. This paper presents a methodology for mapping street-level litter, focusing primarily on plastic waste and the locations of trash bins. Our methodology employs a deep learning technique to identify litter and trash bins in street-level imagery captured by a vehicle-mounted camera. We then use heat maps to visualize the distribution of litter and trash bins across cities. Additionally, we describe the creation of the open-source dataset ("pLitterStreet") that was developed and used in our approach. The dataset contains more than 13,000 fully annotated images collected from vehicle-mounted cameras, with bounding box labels. To evaluate the dataset, we tested four well-known state-of-the-art object detection algorithms (Faster R-CNN, RetinaNet, YOLOv3, and YOLOv5), achieving an average precision (AP) above 40%. Although these metrics are moderate, our experiments demonstrate that vehicle-mounted cameras can be used reliably for plastic litter mapping. The pLitterStreet dataset can also serve as a valuable resource for researchers and practitioners developing and improving machine learning models for detecting and mapping plastic litter in urban environments. The dataset is open source, and more details about the dataset and trained models can be found at https://github.com/gicait/pLitter.
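
The abstract does not specify how the heat maps were built. As a minimal sketch, assuming each detection has already been geotagged with a latitude and longitude (for example, from the vehicle's GPS track), detections can be binned into a regular grid whose per-cell counts form the heat map. The function name `detection_heatmap` and the `cell_deg` cell size are hypothetical choices for illustration, not part of the pLitterStreet code.

```python
import numpy as np


def detection_heatmap(lats, lons, cell_deg=0.001):
    """Bin geotagged detections into a regular lat/lon grid (hypothetical helper).

    Returns (grid, origin): grid[i, j] counts the detections in latitude row i
    and longitude column j; origin is the (lat, lon) of the grid's lower corner.
    """
    lats = np.asarray(lats, dtype=float)
    lons = np.asarray(lons, dtype=float)
    lat0, lon0 = lats.min(), lons.min()
    # Integer grid index of each detection (row = latitude, col = longitude).
    rows = np.floor((lats - lat0) / cell_deg).astype(int)
    cols = np.floor((lons - lon0) / cell_deg).astype(int)
    grid = np.zeros((rows.max() + 1, cols.max() + 1), dtype=int)
    # np.add.at accumulates correctly when several detections share one cell.
    np.add.at(grid, (rows, cols), 1)
    return grid, (lat0, lon0)
```

Degree-based cells are not equal-area; binning in a projected coordinate system (metres) would give more uniform cells over a city. Calling the function separately for litter and trash-bin detections yields one map per class.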

Centroid-UNet: Detecting Centroids in Aerial Images

Dec 13, 2021

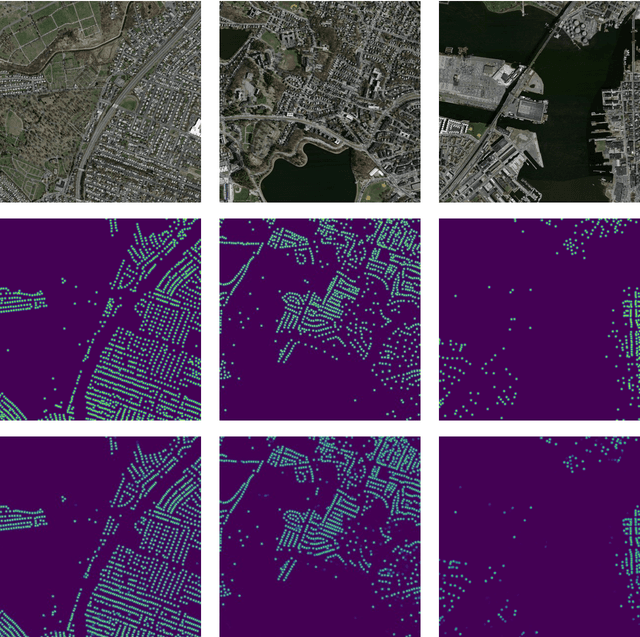

Abstract: In many applications of aerial/satellite image analysis (remote sensing), generating the exact shapes of objects is a cumbersome task. In most remote sensing applications, such as object counting, only the locations of objects need to be estimated. Hence, locating object centroids in aerial/satellite images is a simple solution for tasks where the exact shape of an object is not needed. This study therefore assesses the feasibility of using deep neural networks to locate object centroids in satellite images. Our model, Centroid-UNet, is based on the classic U-Net semantic segmentation architecture, which we modified and adapted into a centroid detection model while preserving the simplicity of the original. We tested and evaluated the model in two case studies involving aerial/satellite images: building centroid detection and coconut tree centroid detection. Our evaluation results show accuracy comparable to that of other methods, while also offering simplicity. The code and models developed in this study are available in the Centroid-UNet GitHub repository: https://github.com/gicait/centroid-unet

* Proceedings of the 42nd Asian Conference on Remote Sensing, 2021, Can Tho City, Vietnam
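
The abstract does not detail how Centroid-UNet encodes or decodes centroids. One common formulation, assumed here purely for illustration, trains a segmentation-style network to predict a map with a Gaussian blob at each centroid and then recovers point locations as local maxima of the predicted map. The helpers `gaussian_centroid_map` and `extract_centroids`, the `sigma` value, and the 0.5 threshold are hypothetical.

```python
import numpy as np


def gaussian_centroid_map(shape, centroids, sigma=2.0):
    """Render a target map with a Gaussian blob at each (row, col) centroid (hypothetical)."""
    rr, cc = np.mgrid[0:shape[0], 0:shape[1]]
    target = np.zeros(shape, dtype=float)
    for r, c in centroids:
        blob = np.exp(-((rr - r) ** 2 + (cc - c) ** 2) / (2 * sigma ** 2))
        # Take the maximum so overlapping blobs never exceed 1.
        target = np.maximum(target, blob)
    return target


def extract_centroids(heatmap, threshold=0.5):
    """Return (row, col) pixels that are strict 3x3 local maxima at or above threshold."""
    heatmap = np.asarray(heatmap, dtype=float)
    h, w = heatmap.shape
    padded = np.pad(heatmap, 1, mode="constant", constant_values=-np.inf)
    # Shifted views giving each pixel's 8 neighbours; reduce them to a maximum.
    neighbours = [
        padded[1 + dr : 1 + dr + h, 1 + dc : 1 + dc + w]
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    ]
    neighbour_max = np.max(neighbours, axis=0)
    is_peak = (heatmap > neighbour_max) & (heatmap >= threshold)
    return [tuple(int(v) for v in p) for p in np.argwhere(is_peak)]
```

On a synthetic target, `extract_centroids(gaussian_centroid_map((32, 32), [(8, 8), (20, 25)]))` recovers the two input centroids; on real network output, the threshold trades missed detections against spurious ones.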

Vec2Instance: Parameterization for Deep Instance Segmentation

Oct 06, 2020

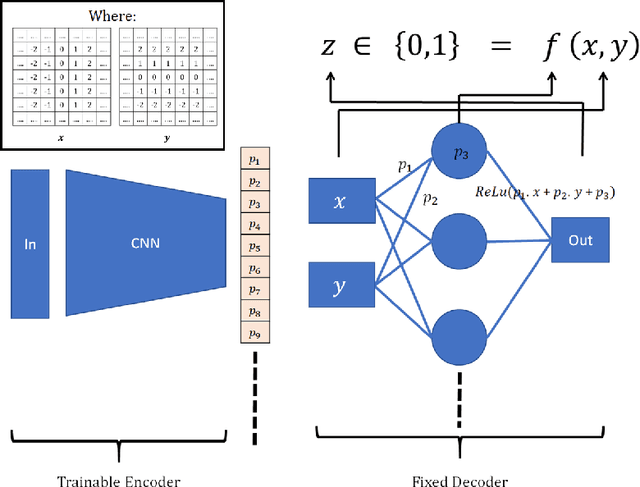

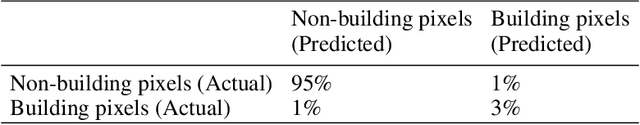

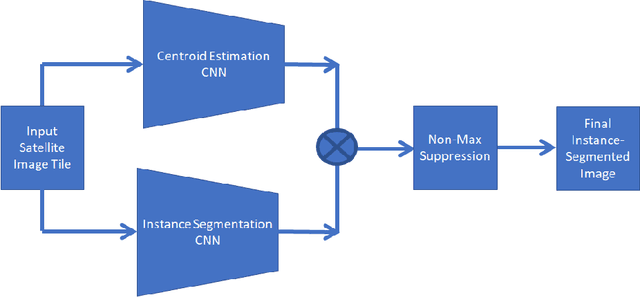

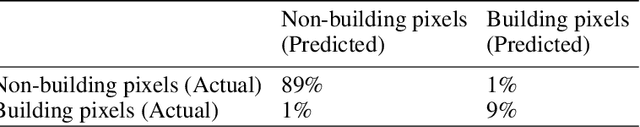

Abstract: Current advances in deep learning are leading to human-level accuracy in computer vision tasks such as object classification, localization, semantic segmentation, and instance segmentation. In this paper, we describe a new deep convolutional neural network architecture for instance segmentation called Vec2Instance. Vec2Instance provides a framework for parametrizing instances, allowing convolutional neural networks to efficiently estimate the complex shapes of instances around their centroids. We demonstrate the feasibility of the proposed architecture on instance segmentation tasks in satellite images, which have a wide range of applications. Moreover, we demonstrate the usefulness of the new method for extracting building footprints from satellite images. The total pixel-wise accuracy of our approach is 89%, close to that of the state-of-the-art Mask R-CNN (91%). Vec2Instance is an alternative to complex instance segmentation pipelines, offering simplicity and intuitiveness. The code developed in this study is available in the Vec2Instance GitHub repository: https://github.com/lakmalnd/Vec2Instance
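
The abstract describes parametrizing each instance around its centroid but does not give the exact encoding. A minimal sketch, assuming a star-convex polar parametrization (radial distances from the centroid to the boundary at fixed, equally spaced angles, the idea also used by methods such as PolarMask and StarDist), shows how a mask can be encoded into a fixed-length vector that a CNN could regress, and decoded back into a polygon. All function names and the `n_rays` and `step` parameters are hypothetical.

```python
import numpy as np


def encode_radial(mask, centroid, n_rays=16, step=0.25):
    """Distance from centroid to the mask boundary along n_rays equally spaced angles."""
    h, w = mask.shape
    cy, cx = centroid
    angles = np.linspace(0.0, 2 * np.pi, n_rays, endpoint=False)
    dists = np.zeros(n_rays)
    for i, a in enumerate(angles):
        dy, dx = np.sin(a), np.cos(a)
        r = 0.0
        # March outward until the next sample leaves the mask or the image.
        while True:
            y = int(round(cy + (r + step) * dy))
            x = int(round(cx + (r + step) * dx))
            if not (0 <= y < h and 0 <= x < w) or not mask[y, x]:
                break
            r += step
        dists[i] = r
    return angles, dists


def decode_radial(centroid, angles, dists):
    """Turn (angle, distance) pairs back into polygon vertices as (row, col)."""
    cy, cx = centroid
    return np.stack([cy + dists * np.sin(angles), cx + dists * np.cos(angles)], axis=1)


def polygon_area(vertices):
    """Shoelace formula for a simple polygon given as (row, col) vertices."""
    y, x = vertices[:, 0], vertices[:, 1]
    return 0.5 * abs(np.dot(x, np.roll(y, -1)) - np.dot(y, np.roll(x, -1)))
```

A fixed-length vector suits a convolutional regressor; the trade-off is that shapes that are not star-convex with respect to their centroid, such as some L- or U-shaped buildings, are only approximated.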
