Mohammed M. Abdelgwad

DynRank: Improving Passage Retrieval with Dynamic Zero-Shot Prompting Based on Question Classification

Nov 30, 2024

Abstract: This paper presents DynRank, a novel framework for enhancing passage retrieval in open-domain question-answering systems through dynamic zero-shot question classification. Traditional approaches rely on static prompts and pre-defined templates, which may limit model adaptability across different questions and contexts. In contrast, DynRank introduces a dynamic prompting mechanism, leveraging a pre-trained question classification model that categorizes questions into fine-grained types. Based on these classifications, contextually relevant prompts are generated, enabling more effective passage retrieval. We integrate DynRank into existing retrieval frameworks and conduct extensive experiments on multiple QA benchmark datasets.
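
The idea of picking a prompt from a predicted question type can be illustrated with a minimal sketch. Everything here is an assumption made for illustration and is not taken from the paper: the question types, the template wording, and the names `PROMPT_TEMPLATES`, `classify_question`, and `build_prompt` are hypothetical, and a keyword rule stands in for the pre-trained question classification model.

```python
# Hypothetical prompt templates keyed by question type (not from the paper).
PROMPT_TEMPLATES = {
    "PERSON": "Based on this passage, write a question asking about a person.",
    "LOCATION": "Based on this passage, write a question asking about a place.",
    "DATE": "Based on this passage, write a question asking about a date or time.",
    "OTHER": "Based on this passage, write a question.",
}

# Toy mapping from the leading question word to a type. This is only a
# stand-in for a pre-trained, fine-grained question classifier.
LEADING_WORD_TO_TYPE = {"who": "PERSON", "where": "LOCATION", "when": "DATE"}


def classify_question(question: str) -> str:
    """Return a coarse question type from the first word of the question."""
    words = question.strip().lower().split()
    if not words:
        return "OTHER"
    return LEADING_WORD_TO_TYPE.get(words[0], "OTHER")


def build_prompt(question: str, passage: str) -> str:
    """Select the template for the predicted type and attach the passage."""
    question_type = classify_question(question)
    return f"Passage: {passage}\n{PROMPT_TEMPLATES[question_type]}"


if __name__ == "__main__":
    print(build_prompt("Who wrote Hamlet?", "Hamlet is a tragedy by William Shakespeare."))
```

A static-prompt baseline would always use the `"OTHER"` template; the dynamic variant differs only in that the template lookup depends on the classifier output.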

Arabic aspect based sentiment analysis using BERT

Jul 28, 2021

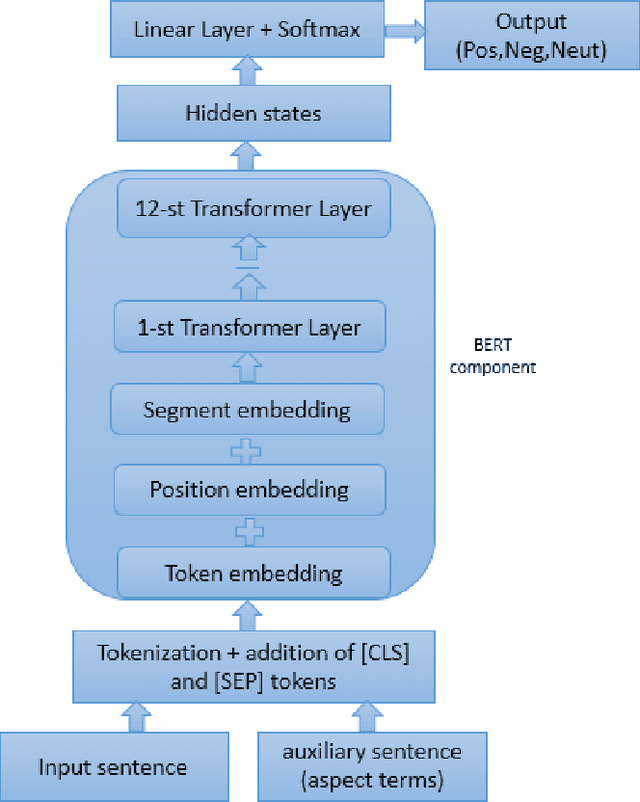

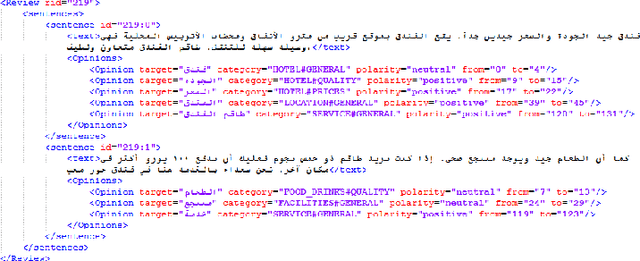

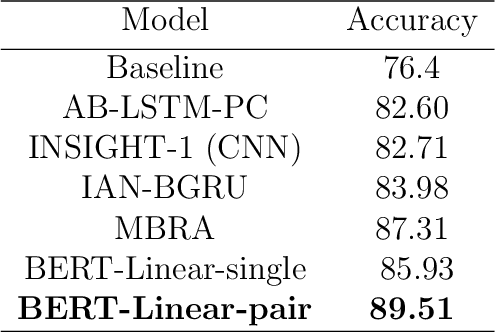

Abstract: Aspect-based sentiment analysis (ABSA) is a textual analysis methodology that determines the polarity of opinions on certain aspects related to specific targets. The majority of research on ABSA is in English, with a small amount of work available in Arabic. Most previous Arabic research has relied on deep learning models that depend primarily on context-independent word embeddings (e.g., word2vec), where each word has a fixed representation independent of its context. This article explores the modeling capabilities of contextual embeddings from pre-trained language models, such as BERT, and the use of sentence-pair input on Arabic ABSA tasks. In particular, we build a simple but effective BERT-based neural baseline to handle this task. According to experimental results on the benchmark Arabic hotel reviews dataset, our BERT architecture with a simple linear classification layer surpasses state-of-the-art work.
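
As a rough illustration of sentence-pair input, the sketch below lays out a review and an aspect in the standard BERT pair format, `[CLS] A [SEP] B [SEP]`, with segment ids marking the two parts. The choice of the aspect as the second sentence, the whitespace tokenization, and the name `build_sentence_pair` are assumptions for illustration; a real system would use the model's subword tokenizer, and the abstract does not specify the exact pairing.

```python
from typing import List, Tuple


def build_sentence_pair(review: str, aspect: str) -> Tuple[List[str], List[int]]:
    """Lay out a (review, aspect) pair as BERT-style tokens and segment ids.

    Whitespace splitting is a simplification; BERT models use a subword
    tokenizer in practice.
    """
    review_tokens = review.split()
    aspect_tokens = aspect.split()

    # First segment: [CLS] + review + [SEP]; second segment: aspect + [SEP].
    tokens = ["[CLS]"] + review_tokens + ["[SEP]"] + aspect_tokens + ["[SEP]"]
    segment_ids = [0] * (len(review_tokens) + 2) + [1] * (len(aspect_tokens) + 1)
    return tokens, segment_ids


if __name__ == "__main__":
    tokens, segment_ids = build_sentence_pair("the room was clean", "room cleanliness")
    for token, segment in zip(tokens, segment_ids):
        print(segment, token)
```

The classifier described in the abstract would then be a linear layer over the encoder's representation of this paired input, predicting the polarity for the given aspect.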
