Mohammad A. Salahuddin

AutoML4ETC: Automated Neural Architecture Search for Real-World Encrypted Traffic Classification

Aug 09, 2023

Abstract: Deep learning (DL) has been successfully applied to encrypted network traffic classification in experimental settings. However, in production use, it has been shown that a DL classifier's performance inevitably decays over time. Re-training the model on newer datasets has been shown to only partially improve its performance. Manually re-tuning the model architecture to meet the performance expectations on newer datasets is time-consuming and requires domain expertise. We propose AutoML4ETC, a novel tool to automatically design efficient and high-performing neural architectures for encrypted traffic classification. We define a novel, powerful search space tailored specifically for the near real-time classification of encrypted traffic using packet header bytes. We show that with different search strategies over our search space, AutoML4ETC generates neural architectures that outperform the state-of-the-art encrypted traffic classifiers on several datasets, including public benchmark datasets and real-world TLS and QUIC traffic collected from the Orange mobile network. In addition to being more accurate, AutoML4ETC's architectures are significantly more efficient and lighter in terms of the number of parameters. Finally, we make AutoML4ETC publicly available for future research.

Generalizable Resource Scaling of 5G Slices using Constrained Reinforcement Learning

Jun 15, 2023

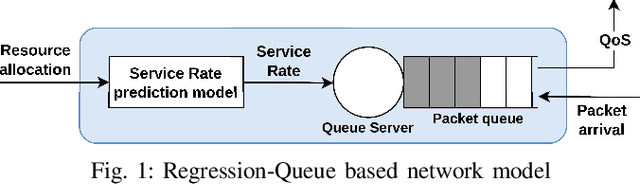

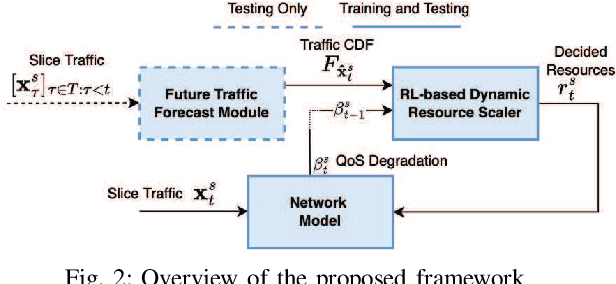

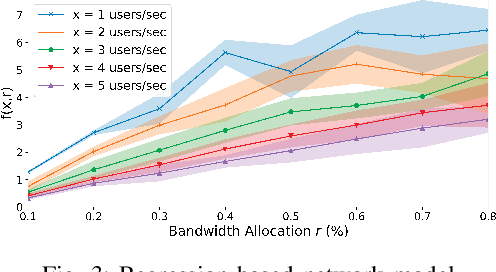

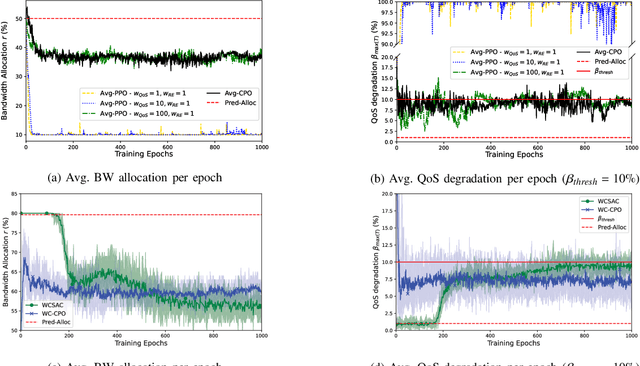

Abstract: Network slicing is a key enabler for 5G to support various applications. Slices requested by service providers (SPs) have heterogeneous quality of service (QoS) requirements, such as latency, throughput, and jitter. It is imperative that the 5G infrastructure provider (InP) allocates the right amount of resources depending on the slice's traffic, such that the specified QoS levels are maintained during the slice's lifetime while maximizing resource efficiency. However, there is a non-trivial relationship between the QoS and resource allocation. In this paper, this relationship is learned using a regression-based model. We also leverage a risk-constrained reinforcement learning agent that is trained offline using this model and domain randomization for dynamically scaling slice resources while maintaining the desired QoS level. Our novel approach reduces the effects of network modeling errors since it is model-free and does not require QoS metrics to be mathematically formulated in terms of traffic. In addition, it provides robustness against uncertain network conditions, generalizes to different real-world traffic patterns, and caters to various QoS metrics. The results show that the state-of-the-art approaches can lead to QoS degradation as high as 44.5% when tested on previously unseen traffic. On the other hand, our approach maintains the QoS degradation below a preset 10% threshold on such traffic, while minimizing the allocated resources. Additionally, we demonstrate that the proposed approach is robust against varying network conditions and inaccurate traffic predictions.
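
As a reading aid, the trade-off described in this abstract (minimize allocated resources while keeping QoS degradation under a preset threshold) can be sketched as a constrained optimization problem. The notation is an assumption and does not come from the abstract: $\pi$ is the scaling policy, $a_t$ the resources allocated at step $t$, $d_t$ the observed QoS degradation, $\rho$ a generic risk measure (the abstract does not say which one is used), and $\delta$ the preset threshold.

$$
\min_{\pi} \; \mathbb{E}_{\pi}\!\left[\sum_{t=0}^{T} a_t\right]
\quad \text{subject to} \quad
\rho_{\pi}\!\left(d_0, \dots, d_T\right) \le \delta,
\qquad \delta = 0.10
$$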

A Graph-Based Machine Learning Approach for Bot Detection

Feb 22, 2019

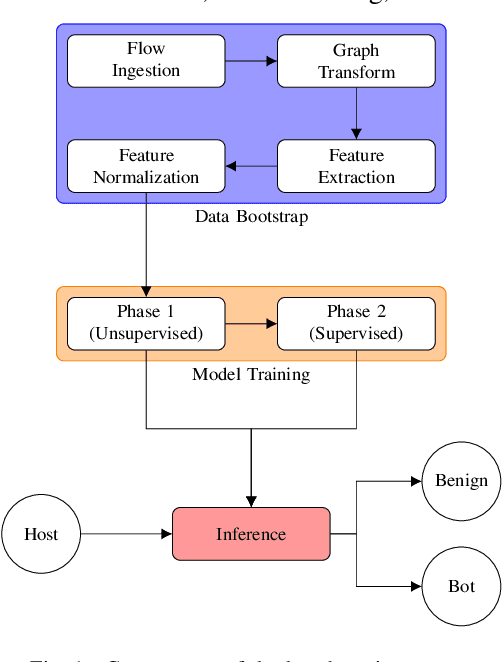

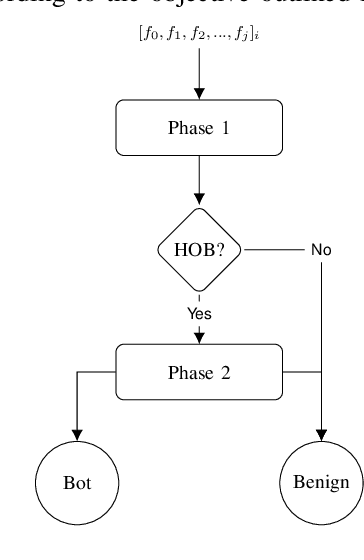

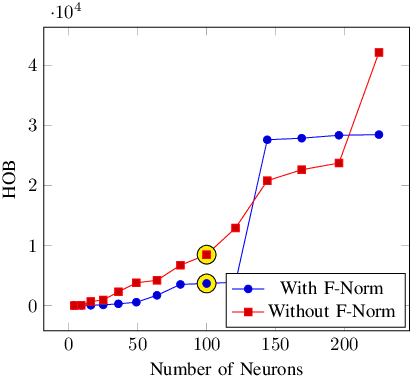

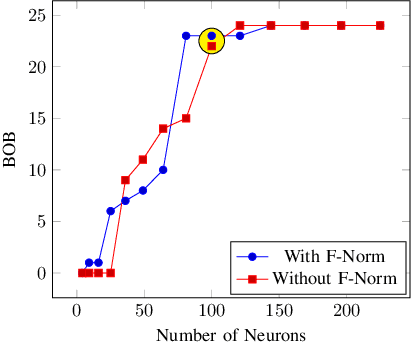

Abstract: Bot detection using machine learning (ML), with network flow-level features, has been extensively studied in the literature. However, existing flow-based approaches typically incur a high computational overhead and do not completely capture the network communication patterns, which can expose additional aspects of malicious hosts. Recently, bot detection systems that leverage communication graph analysis using ML have gained attention as a way to overcome these limitations. A graph-based approach is rather intuitive, as graphs are true representations of network communications. In this paper, we propose a two-phased, graph-based bot detection system that leverages both unsupervised and supervised ML. The first phase prunes presumably benign hosts, while the second phase achieves bot detection with high precision. Our system detects multiple types of bots and is robust to zero-day attacks. It also accommodates different network topologies and is suitable for large-scale data.
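
The two-phase idea in this abstract can be illustrated with a toy sketch. Everything concrete here is an assumption made for illustration, not the paper's method: the features (out-degree and in-degree of each host in the communication graph), the median-based pruning rule standing in for the unsupervised phase, the nearest-centroid classifier standing in for the supervised phase, and all function names.

```python
from collections import defaultdict
from statistics import median


def host_features(flows):
    """Build per-host (out_degree, in_degree) from (src, dst) flow pairs."""
    out_nbrs, in_nbrs = defaultdict(set), defaultdict(set)
    for src, dst in flows:
        out_nbrs[src].add(dst)
        in_nbrs[dst].add(src)
    hosts = set(out_nbrs) | set(in_nbrs)
    return {h: (len(out_nbrs[h]), len(in_nbrs[h])) for h in hosts}


def prune_benign(features):
    """Phase 1 (hypothetical stand-in): keep hosts whose out-degree exceeds the median."""
    cutoff = median(f[0] for f in features.values())
    return {h: f for h, f in features.items() if f[0] > cutoff}


def train_centroids(labeled):
    """Phase 2 training: average the feature vectors of each label."""
    by_label = defaultdict(list)
    for feat, label in labeled:
        by_label[label].append(feat)
    return {
        label: tuple(sum(col) / len(pts) for col in zip(*pts))
        for label, pts in by_label.items()
    }


def classify(feat, centroids):
    """Assign the label of the closest centroid (squared Euclidean distance)."""
    def dist2(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(centroids, key=lambda label: dist2(feat, centroids[label]))


def detect(flows, labeled):
    """Run both phases and return the hosts classified as 'bot', sorted by name."""
    suspects = prune_benign(host_features(flows))
    centroids = train_centroids(labeled)
    return sorted(h for h, f in suspects.items() if classify(f, centroids) == "bot")
```

Here `detect` takes a list of `(src, dst)` flow pairs and a list of `(feature, label)` training examples. The point of the split is that the cheap first phase shrinks the host set before the classifier runs.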
