Mert Nakıp

Renewable energy management in smart home environment via forecast embedded scheduling based on Recurrent Trend Predictive Neural Network

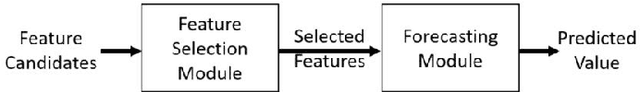

Jul 06, 2023

Abstract: Smart home energy management systems help the distribution grid operate more efficiently and reliably, and enable effective penetration of distributed renewable energy sources. These systems rely on robust forecasting, optimization, and control/scheduling algorithms that can handle the uncertain nature of demand and renewable generation. This paper proposes an advanced ML algorithm, called Recurrent Trend Predictive Neural Network based Forecast Embedded Scheduling (rTPNN-FES), to provide efficient residential demand control. rTPNN-FES is a novel neural network architecture that simultaneously forecasts renewable energy generation and schedules household appliances. Through its embedded structure, rTPNN-FES eliminates the need for separate forecasting and scheduling algorithms and generates a schedule that is robust against forecasting errors. This paper also evaluates the performance of the proposed algorithm for an IoT-enabled smart home. The evaluation results reveal that rTPNN-FES provides near-optimal scheduling $37.5$ times faster than the optimization while outperforming state-of-the-art forecasting techniques.

Decentralized Online Federated G-Network Learning for Lightweight Intrusion Detection

Jun 22, 2023

Abstract: Cyberattacks are increasingly threatening networked systems, often with the emergence of new types of unknown (zero-day) attacks and the rise of vulnerable devices. While Machine Learning (ML)-based Intrusion Detection Systems (IDSs) have been shown to be extremely promising in detecting these attacks, the need to learn from large amounts of labelled data often limits the applicability of ML-based IDSs to cybersystems that only have access to private local data. To address this issue, this paper proposes a novel Decentralized and Online Federated Learning Intrusion Detection (DOF-ID) architecture. DOF-ID is a collaborative learning system that allows each IDS used for a cybersystem to learn from experience gained in other cybersystems, in addition to its own local data, without violating the data privacy of other systems. As the performance evaluation results using the public Kitsune and Bot-IoT datasets show, DOF-ID significantly improves the intrusion detection performance in all collaborating nodes simultaneously, with acceptable computation time for online learning.

Online Self-Supervised Learning in Machine Learning Intrusion Detection for the Internet of Things

Jun 22, 2023

Abstract: This paper proposes a novel Self-Supervised Intrusion Detection (SSID) framework, which enables a fully online Machine Learning (ML) based Intrusion Detection System (IDS) that requires no human intervention or prior off-line learning. The proposed framework analyzes and labels incoming traffic packets based only on the decisions of the IDS itself, using an Auto-Associative Deep Random Neural Network, and on an online estimate of its statistically measured trustworthiness. The SSID framework enables the IDS to adapt rapidly to time-varying characteristics of the network traffic, and eliminates the need for offline data collection. This approach avoids human errors in data labeling, as well as the human labor and computational costs of model training and data collection. The approach is experimentally evaluated on public datasets and compared with well-known ML models, showing that the SSID framework is advantageous as an accurate, online-learning ML-based IDS for IoT systems.

Associated Random Neural Networks for Collective Classification of Nodes in Botnet Attacks

Mar 23, 2023

Abstract: Botnet attacks are a major threat to networked systems because of their ability to turn the network nodes that they compromise into additional attackers, leading to the spread of high volume attacks over long periods. The detection of such Botnets is complicated by the fact that multiple network IP addresses will be simultaneously compromised, so that Collective Classification of compromised nodes, in addition to the already available traditional methods that focus on individual nodes, can be useful. Thus this work introduces a collective Botnet attack classification technique that operates on traffic from an n-node IP network with a novel Associated Random Neural Network (ARNN) that identifies the nodes which are compromised. The ARNN is a recurrent architecture that incorporates two mutually associated, interconnected and architecturally identical n-neuron random neural networks, which act simultaneously as mutual critics to reach the decision regarding which of the n nodes have been compromised. A novel gradient learning descent algorithm is presented for the ARNN, and is shown to operate effectively both with conventional off-line training from prior data, and with on-line incremental training without prior off-line learning. Real data from a 107-node packet network, with over 700,000 packets, is used to evaluate the ARNN, showing that it provides accurate predictions. Comparisons with other well-known state-of-the-art methods using the same learning and testing datasets show that the ARNN offers significantly better performance.

Engagement Detection with Multi-Task Training in E-Learning Environments

Apr 08, 2022

Abstract: Recognition of user interaction, in particular engagement detection, has become crucial for online working and learning environments, especially during the COVID-19 outbreak. Such recognition and detection systems significantly improve the user experience and efficiency by providing valuable feedback. In this paper, we propose a novel Engagement Detection with Multi-Task Training (ED-MTT) system, which jointly minimizes mean squared error and triplet loss to determine the engagement level of students in an e-learning environment. The performance of this system is evaluated and compared against the state-of-the-art on a publicly available dataset as well as on videos collected from real-life scenarios. The results show that ED-MTT achieves 6% lower MSE than the best state-of-the-art performance, with highly acceptable training time and lightweight feature extraction.
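To make the idea of minimizing mean squared error and triplet loss together more concrete, the following is a minimal sketch of such a combined objective in plain Python. It is not the ED-MTT implementation: the function names, the Euclidean distance, the `margin`, and the `weight` that balances the two terms are all assumptions chosen for illustration, and the abstract does not state how the two losses are actually combined.

```python
import math

def mse(predictions, targets):
    """Mean squared error between predicted and true engagement levels."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)

def euclidean(a, b):
    """Euclidean distance between two embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def triplet_loss(anchor, positive, negative, margin=1.0):
    """Hinge-style triplet loss: pull the positive closer to the anchor
    than the negative by at least `margin` (margin value is an assumption)."""
    return max(0.0, euclidean(anchor, positive) - euclidean(anchor, negative) + margin)

def multi_task_loss(predictions, targets, triplets, weight=1.0, margin=1.0):
    """Hypothetical combined objective: MSE plus a weighted mean triplet loss.
    `triplets` is a list of (anchor, positive, negative) embedding tuples."""
    mean_triplet = sum(triplet_loss(a, p, n, margin) for a, p, n in triplets) / len(triplets)
    return mse(predictions, targets) + weight * mean_triplet
```

In a sketch like this, the regression term drives the predicted engagement level toward the label, while the triplet term shapes the embedding space so that samples with similar engagement lie closer together than dissimilar ones.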

Curse of Small Sample Size in Forecasting of the Active Cases in COVID-19 Outbreak

Nov 06, 2020

Abstract: During the COVID-19 pandemic, numerous attempts have been made to predict the number of cases and other future trends of the pandemic. However, they fail to predict reliably, and within acceptable accuracy, the medium- and long-term evolution of fundamental features of the COVID-19 outbreak. This paper gives an explanation for the failure of machine learning models in this particular forecasting problem. The paper shows that simple linear regression models reliably provide high prediction accuracy, but only for a 2-week period, and that relatively complex machine learning models, which have the potential to learn long-term predictions with low errors, fail to produce good predictions with high generalization ability. The paper suggests that the lack of a sufficient number of samples is the source of the low prediction performance of the forecasting models. The reliability of the forecasting results for active cases is measured in terms of the cross-validation prediction errors, which are used as expectations for the generalization errors of the forecasters. To exploit the information most relevant to the active cases, we perform feature selection over a variety of variables. We apply different feature selection methods, namely Pairwise Correlation, Recursive Feature Selection, and feature selection using Lasso regression, and compare them with each other and with models that do not employ any feature selection. Furthermore, we compare Linear Regression, Multi-Layer Perceptron, and Long Short-Term Memory models, each of which is used to predict active cases together with the mentioned feature selection methods. Our results show that accurate forecasting of the active cases with high generalization ability is possible only up to 3 days because of the small sample size of COVID-19 data.
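As a rough illustration of how a cross-validation prediction error can serve as an estimate of generalization error, the sketch below runs k-fold cross-validation for a one-variable least-squares line in plain Python. It is only an assumed setup: the function names, the interleaved fold assignment, and the use of a single input variable are illustrative choices, not the protocol used in the paper. For time-ordered data such as case counts, a split that respects time order would usually be preferred.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def cross_validation_mse(xs, ys, k=5):
    """Average held-out mean squared error over k interleaved folds.
    Assumes len(xs) >= k so that every fold has at least one test sample."""
    n = len(xs)
    fold_errors = []
    for fold in range(k):
        test_idx = [i for i in range(n) if i % k == fold]
        train_idx = [i for i in range(n) if i % k != fold]
        slope, intercept = fit_line([xs[i] for i in train_idx],
                                    [ys[i] for i in train_idx])
        # Error is measured only on samples the model did not see in training.
        error = sum((ys[i] - (slope * xs[i] + intercept)) ** 2
                    for i in test_idx) / len(test_idx)
        fold_errors.append(error)
    return sum(fold_errors) / k
```

With few samples, each fold's training set is small, so the held-out errors vary widely between folds; this is one way the small-sample effect discussed in the abstract would show up in practice.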
