Mehrasa Ahmadipour

EVaR-Optimal Arm Identification in Bandits

Oct 06, 2025

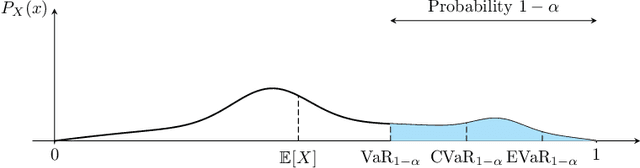

Abstract: We study the fixed-confidence best arm identification (BAI) problem within the multi-armed bandit (MAB) framework under the Entropic Value-at-Risk (EVaR) criterion. Our analysis considers a nonparametric setting that allows general reward distributions bounded in [0,1]. This formulation addresses the need for risk-averse decision-making in high-stakes environments, such as finance, where optimizing the expected value alone is not enough. We propose a $\delta$-correct, Track-and-Stop-based algorithm and derive a lower bound on the expected sample complexity, which we prove the algorithm matches asymptotically. Both the implementation of our algorithm and the characterization of the lower bound require solving a complex convex optimization problem and a related, simpler non-convex one.
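As a rough illustration of the EVaR criterion named in the abstract, the sketch below evaluates the standard loss-oriented definition, $\mathrm{EVaR}_{1-\alpha}(X) = \inf_{z>0} z^{-1}\ln\big(M_X(z)/\alpha\big)$ with $M_X$ the moment generating function, on an empirical sample. This is an assumption for illustration only: the paper's own convention for rewards may differ, and the function name `empirical_evar`, the grid search, and the grid limits are hypothetical choices, not the authors' method.

```python
import numpy as np

def empirical_evar(samples, alpha, z_grid=None):
    """Approximate EVaR at confidence level 1 - alpha for an empirical sample.

    Uses the loss-oriented definition inf_{z>0} (1/z) * log(M(z) / alpha),
    where M is the empirical moment generating function. The infimum is
    approximated by a search over a log-spaced grid (an illustrative choice).
    """
    x = np.asarray(samples, dtype=float)
    if z_grid is None:
        z_grid = np.logspace(-3, 3, 2000)  # hypothetical grid limits
    zx = np.outer(z_grid, x)               # shape (len(z_grid), len(x))
    m = zx.max(axis=1, keepdims=True)      # shift for a stable log-mean-exp
    log_mgf = m[:, 0] + np.log(np.exp(zx - m).mean(axis=1))
    values = (log_mgf - np.log(alpha)) / z_grid
    return float(values.min())

# Example: three equally likely outcomes in [0, 1].
sample = [0.1, 0.5, 0.9]
evar_05 = empirical_evar(sample, alpha=0.05)
evar_50 = empirical_evar(sample, alpha=0.50)
```

Under this definition the value lies between the sample mean and (up to grid error) the sample maximum, and it grows as `alpha` shrinks, which is what makes it a risk-averse summary of the distribution.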

Identifying the Best Transition Law

Feb 17, 2025

Abstract: Motivated by recursive learning in Markov Decision Processes, this paper studies best-arm identification in bandit problems where each arm's reward is drawn from a multinomial distribution with a known support. We compare the performance of several strategies, notably LUCB, with and without use of this knowledge. Without it, we use classical non-parametric approaches for the confidence intervals. With it, a probability distribution has to be estimated: we first apply classical deviation bounds (Hoeffding and Bernstein) to each dimension independently, and then the Empirical Likelihood method (EL-LUCB) to the joint probability vector. The effectiveness of these methods is demonstrated through simulations on scenarios with varying levels of structural complexity.
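To make the per-dimension deviation bounds mentioned in this abstract concrete, here is a minimal sketch of coordinate-wise confidence intervals for a multinomial probability vector. It assumes a two-sided Hoeffding bound and the empirical Bernstein bound of Maurer and Pontil, with a union bound over the coordinates. The function names and the way `delta` is split are hypothetical, and the paper may use different constants or a different form of Bernstein's inequality.

```python
import numpy as np

def hoeffding_radius(n, delta):
    """Two-sided Hoeffding radius for the mean of n samples in [0, 1]."""
    return np.sqrt(np.log(2.0 / delta) / (2.0 * n))

def empirical_bernstein_radius(p_hat, n, delta):
    """Empirical Bernstein radius (Maurer-Pontil form) for a 0/1 indicator."""
    sample_var = p_hat * (1.0 - p_hat) * n / (n - 1.0)  # unbiased variance
    log_term = np.log(2.0 / delta)
    return np.sqrt(2.0 * sample_var * log_term / n) + 7.0 * log_term / (3.0 * (n - 1.0))

def coordinate_intervals(counts, delta, kind="hoeffding"):
    """Confidence intervals on each coordinate of a multinomial vector.

    `counts` holds the number of observations of each support point; the
    error budget `delta` is split evenly across coordinates (union bound).
    """
    counts = np.asarray(counts, dtype=float)
    n, k = counts.sum(), counts.size
    p_hat = counts / n
    if kind == "hoeffding":
        radius = np.full(k, hoeffding_radius(n, delta / k))
    else:
        radius = empirical_bernstein_radius(p_hat, n, delta / k)
    lower = np.clip(p_hat - radius, 0.0, 1.0)
    upper = np.clip(p_hat + radius, 0.0, 1.0)
    return p_hat, lower, upper

# Example: 100 pulls of one arm over a support of three outcomes.
p_hat, lo_h, hi_h = coordinate_intervals([50, 30, 20], delta=0.05)
_, lo_b, hi_b = coordinate_intervals([50, 30, 20], delta=0.05, kind="bernstein")
```

Treating each coordinate separately ignores the constraint that the probabilities sum to one, which is the kind of joint structure a method working on the whole probability vector can exploit.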
