Mehmet Arda Eren

Sample Efficient Robot Learning in Supervised Effect Prediction Tasks

Dec 03, 2024

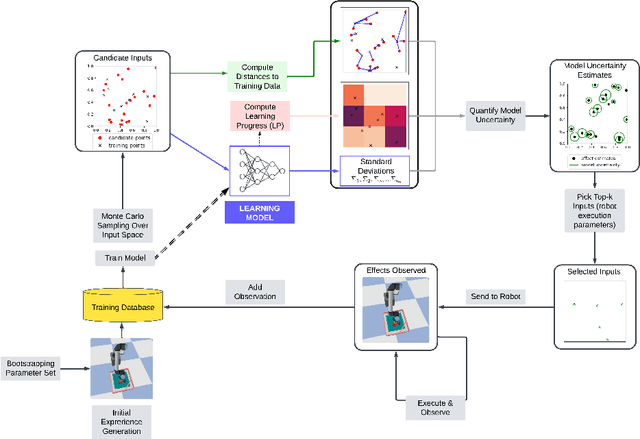

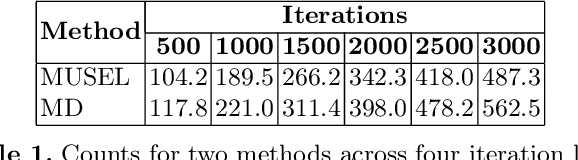

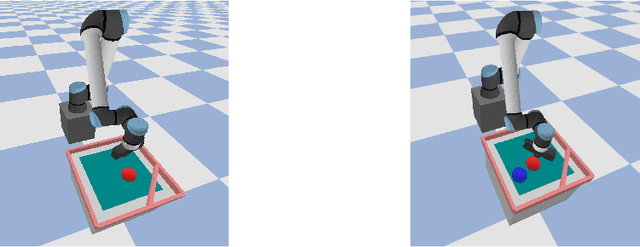

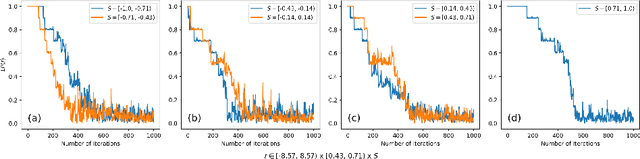

Abstract: In self-supervised robot learning, robots actively explore their environments and generate data by acting on entities in the environment. Therefore, an exploration policy is desired that ensures sample efficiency to minimize robot execution costs while still providing accurate learning. For this purpose, the robotic community has adopted Intrinsic Motivation (IM)-based approaches such as Learning Progress (LP). On the machine learning front, Active Learning (AL) has been used successfully, especially for classification tasks. In this work, we develop a novel AL framework geared towards robotics regression tasks, such as action-effect prediction and, more generally, world model learning, which we call MUSEL - Model Uncertainty for Sample Efficient Learning. MUSEL aims to extract model uncertainty from the total uncertainty estimate given by a suitable learning engine by making use of learning progress and input diversity, and to use it to improve sample efficiency beyond state-of-the-art action-effect prediction methods. We demonstrate the feasibility of our model by using a Stochastic Variational Gaussian Process (SVGP) as the learning engine and testing the system on a set of robotic experiments in simulation. The efficacy of MUSEL is demonstrated by comparing its performance to standard methods used in robot action-effect learning. In a robotic tabletop environment in which a robot manipulator is tasked with learning the effect of its actions, the experiments show that MUSEL facilitates higher accuracy in learning action effects while ensuring sample efficiency.

Context-Based Echo State Networks with Prediction Confidence for Human-Robot Shared Control

Nov 30, 2024

Abstract: In this paper, we propose a novel lightweight learning from demonstration (LfD) model based on reservoir computing that can learn and generate multiple movement trajectories with prediction intervals, which we call Context-based Echo State Network with prediction confidence (CESN+). CESN+ can generate movement trajectories that may go beyond the initial LfD training based on a desired set of conditions while providing confidence on its generated output. To assess the abilities of CESN+, we first evaluate its performance against Conditional Neural Movement Primitives (CNMP), a comparable framework that uses a conditional neural process to generate movement primitives. Our findings indicate that CESN+ not only outperforms CNMP but is also faster to train and demonstrates impressive performance in generating trajectories for extrapolation cases. In human-robot shared control applications, the confidence of the machine-generated trajectory is a key indicator of how to arbitrate control sharing. To show the usability of CESN+ for human-robot adaptive shared control, we have designed a proof-of-concept human-robot shared control task and tested its efficacy in adapting the sharing weight between the human and the robot by comparing it to a fixed-weight control scheme. The simulation experiments show that with CESN+-based adaptive sharing, the total human load in shared control can be significantly reduced. Overall, the developed CESN+ model is a strong lightweight LfD system with desirable properties such as fast training and the ability to extrapolate to new task parameters while producing robust prediction intervals for its output.
