Matt Swann

Adversarial Machine Learning -- Industry Perspectives

Feb 04, 2020

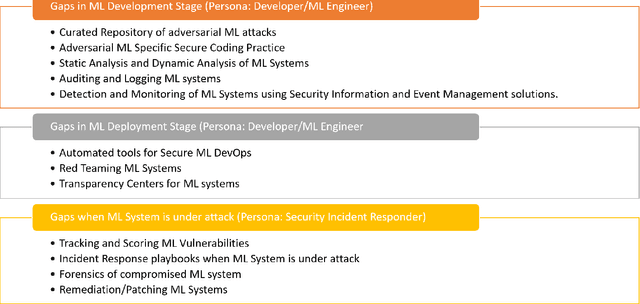

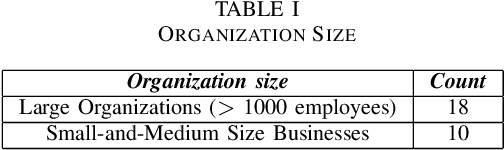

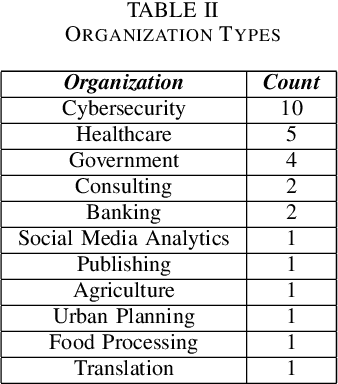

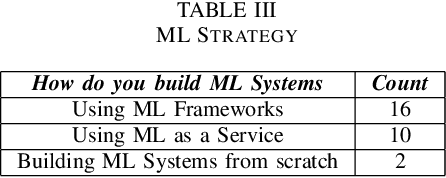

Abstract:Based on interviews with 28 organizations, we found that industry practitioners are not equipped with tactical and strategic tools to protect, detect and respond to attacks on their Machine Learning (ML) systems. We leverage the insights from the interviews and we enumerate the gaps in perspective in securing machine learning systems when viewed in the context of traditional software security development. We write this paper from the perspective of two personas: developers/ML engineers and security incident responders who are tasked with securing ML systems as they are designed, developed and deployed ML systems. The goal of this paper is to engage researchers to revise and amend the Security Development Lifecycle for industrial-grade software in the adversarial ML era.

Practical Machine Learning for Cloud Intrusion Detection: Challenges and the Way Forward

Sep 20, 2017

Abstract:Operationalizing machine learning based security detections is extremely challenging, especially in a continuously evolving cloud environment. Conventional anomaly detection does not produce satisfactory results for analysts that are investigating security incidents in the cloud. Model evaluation alone presents its own set of problems due to a lack of benchmark datasets. When deploying these detections, we must deal with model compliance, localization, and data silo issues, among many others. We pose the problem of "attack disruption" as a way forward in the security data science space. In this paper, we describe the framework, challenges, and open questions surrounding the successful operationalization of machine learning based security detections in a cloud environment and provide some insights on how we have addressed them.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge