Mateus de Souza Monteiro

Evidence-based explanation to promote fairness in AI systems

Mar 03, 2020

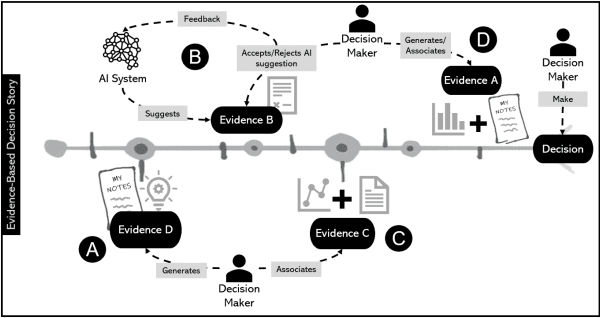

Abstract:As Artificial Intelligence (AI) technology gets more intertwined with every system, people are using AI to make decisions on their everyday activities. In simple contexts, such as Netflix recommendations, or in more complex context like in judicial scenarios, AI is part of people's decisions. People make decisions and usually, they need to explain their decision to others or in some matter. It is particularly critical in contexts where human expertise is central to decision-making. In order to explain their decisions with AI support, people need to understand how AI is part of that decision. When considering the aspect of fairness, the role that AI has on a decision-making process becomes even more sensitive since it affects the fairness and the responsibility of those people making the ultimate decision. We have been exploring an evidence-based explanation design approach to 'tell the story of a decision'. In this position paper, we discuss our approach for AI systems using fairness sensitive cases in the literature.

Do ML Experts Discuss Explainability for AI Systems? A discussion case in the industry for a domain-specific solution

Feb 27, 2020

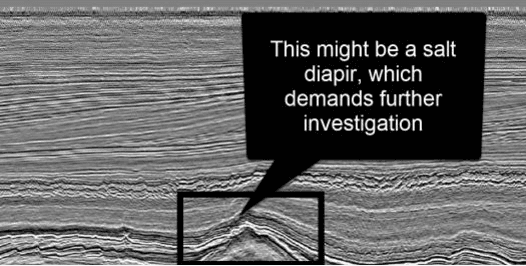

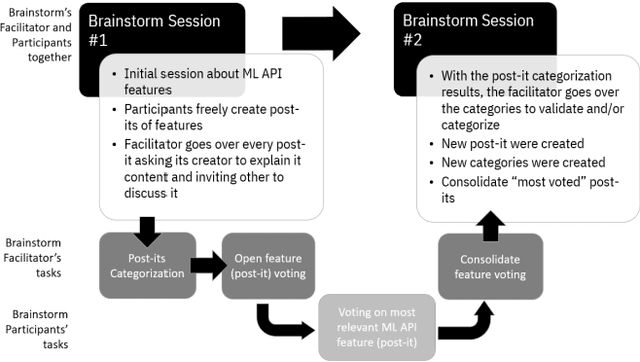

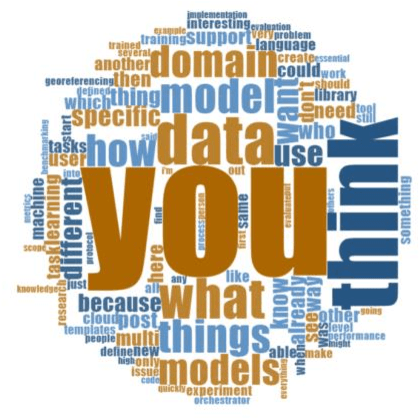

Abstract:The application of Artificial Intelligence (AI) tools in different domains are becoming mandatory for all companies wishing to excel in their industries. One major challenge for a successful application of AI is to combine the machine learning (ML) expertise with the domain knowledge to have the best results applying AI tools. Domain specialists have an understanding of the data and how it can impact their decisions. ML experts have the ability to use AI-based tools dealing with large amounts of data and generating insights for domain experts. But without a deep understanding of the data, ML experts are not able to tune their models to get optimal results for a specific domain. Therefore, domain experts are key users for ML tools and the explainability of those AI tools become an essential feature in that context. There are a lot of efforts to research AI explainability for different contexts, users and goals. In this position paper, we discuss interesting findings about how ML experts can express concerns about AI explainability while defining features of an ML tool to be developed for a specific domain. We analyze data from two brainstorm sessions done to discuss the functionalities of an ML tool to support geoscientists (domain experts) on analyzing seismic data (domain-specific data) with ML resources.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge