Maryam Najafi

BERT-DRE: BERT with Deep Recursive Encoder for Natural Language Sentence Matching

Nov 04, 2021

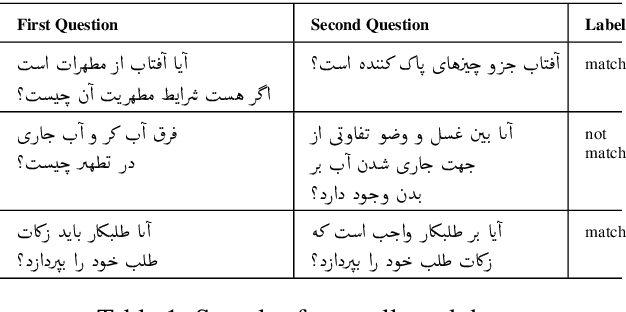

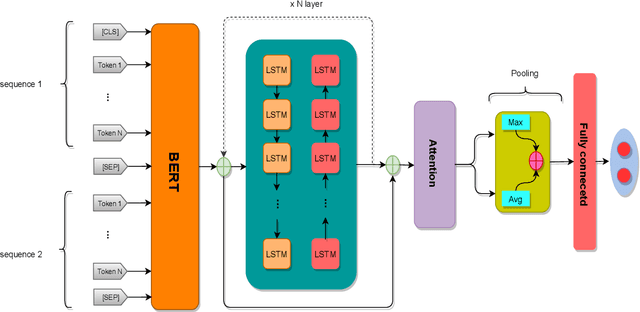

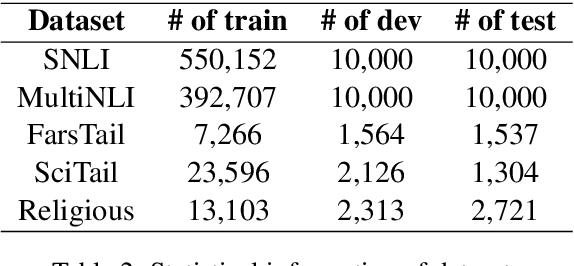

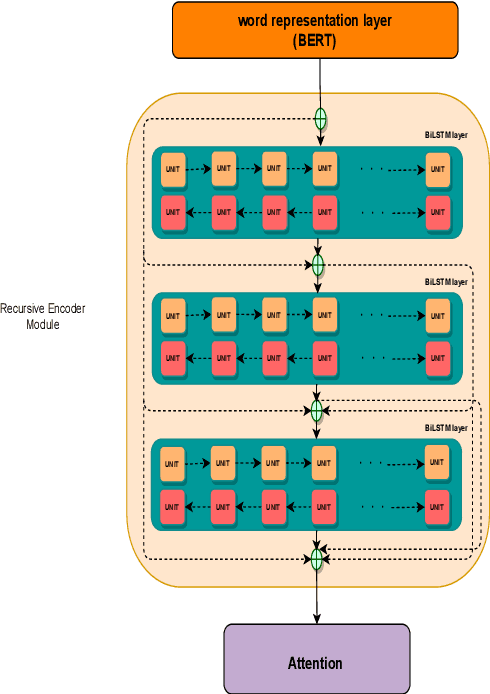

Abstract:This paper presents a deep neural architecture, for Natural Language Sentence Matching (NLSM) by adding a deep recursive encoder to BERT so called BERT with Deep Recursive Encoder (BERT-DRE). Our analysis of model behavior shows that BERT still does not capture the full complexity of text, so a deep recursive encoder is applied on top of BERT. Three Bi-LSTM layers with residual connection are used to design a recursive encoder and an attention module is used on top of this encoder. To obtain the final vector, a pooling layer consisting of average and maximum pooling is used. We experiment our model on four benchmarks, SNLI, FarsTail, MultiNLI, SciTail, and a novel Persian religious questions dataset. This paper focuses on improving the BERT results in the NLSM task. In this regard, comparisons between BERT-DRE and BERT are conducted, and it is shown that in all cases, BERT-DRE outperforms BERT. The BERT algorithm on the religious dataset achieved an accuracy of 89.70%, and BERT-DRE architectures improved to 90.29% using the same dataset.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge