Maryam Mehrnezhad

Measuring Online Hate on 4chan using Pre-trained Deep Learning Models

Mar 30, 2025

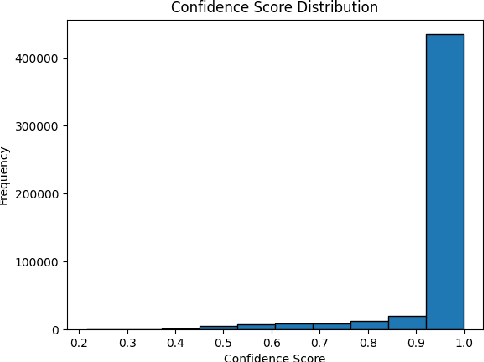

Abstract: Online hate speech can harm individuals and groups, particularly on non-moderated platforms such as 4chan, where users can post anonymously. This work analyses and measures the prevalence of online hate on 4chan's politically incorrect board (/pol/) using state-of-the-art Natural Language Processing (NLP) models, specifically transformer-based models such as RoBERTa and Detoxify. By leveraging these models, we provide an in-depth analysis of hate speech dynamics and quantify the extent of online hate on non-moderated platforms. The study advances understanding through multi-class classification of hate speech (racism, sexism, religion, etc.), while also incorporating the classification of toxic content (e.g., identity attacks and threats) and a further topic modelling analysis. The results show that 11.20% of this dataset is identified as containing hate in different categories. These evaluations show that online hate manifests in various forms, confirming the complicated and volatile nature of detection in the wild.

Verifiable Fairness: Privacy-preserving Computation of Fairness for Machine Learning Systems

Sep 12, 2023

Abstract: Fair machine learning is a thriving and vibrant research topic. In this paper, we propose Fairness as a Service (FaaS), a secure, verifiable and privacy-preserving protocol to compute and verify the fairness of any machine learning (ML) model. In the design of FaaS, the data and outcomes are represented through cryptograms to ensure privacy. In addition, zero-knowledge proofs guarantee the well-formedness of the cryptograms and underlying data. FaaS is model-agnostic and can support various fairness metrics; hence, it can be used as a service to audit the fairness of any ML model. Our solution requires no trusted third party or private channels for the computation of the fairness metric. The security guarantees and commitments are implemented so that every step is transparent and verifiable from the start to the end of the process. The cryptograms of all input data are publicly available for everyone, e.g., auditors, social activists and experts, to verify the correctness of the process. We implemented FaaS to investigate performance and demonstrate the successful use of FaaS on a publicly available data set with thousands of entries.

A Practical Deep Learning-Based Acoustic Side Channel Attack on Keyboards

Aug 02, 2023

Abstract: With recent developments in deep learning, the ubiquity of microphones and the rise in online services via personal devices, acoustic side channel attacks present a greater threat to keyboards than ever. This paper presents a practical implementation of a state-of-the-art deep learning model to classify laptop keystrokes using a smartphone's integrated microphone. When trained on keystrokes recorded by a nearby phone, the classifier achieved an accuracy of 95%, the highest accuracy seen without the use of a language model. When trained on keystrokes recorded using the video-conferencing software Zoom, an accuracy of 93% was achieved, a new best for the medium. Our results prove the practicality of these side channel attacks via off-the-shelf equipment and algorithms. We discuss a series of mitigation methods to protect users against this class of attacks.
